Containers are the natural evolution of virtualization and are increasingly being adopted by MSPs. Are you on board yet?

I find that many MSPs do something of a balancing act when it comes to new tech. Some will err on the side of caution and wait 12 to 24 months for the industry to vet a technology. Others, especially if it addresses a pain point for one of their customers, will jump on the bandwagon right away and implement the new product or feature early on. Whichever bucket you fall into, one thing remains constant: you will have to deal with new products and features as they emerge. The industry will demand it. Your engineers will demand it, and your customers will demand it… eventually. The only variable here is time, and time matters in situations like this. Adopt a new technology too soon and you run the risk of wasting man-hours bringing it to market if that technology ultimately fails. Adopt it too late, and your competitors will beat you to it, resulting in lost business.

One such question mark that I’ve seen come up again and again for MSPs in the last 6 months is the proliferation of containerized applications. Docker has undoubtedly taken the industry by storm. While Docker was previously relegated primarily to the open-source and developer space, its massive adoption on platforms such as Windows Server, Microsoft Azure, Amazon Web Services (AWS), and more has brought it further into the realm of the IT Pro than some expected. IT Pros are now being asked to manage and deploy applications within containers, and with this shift comes an opportunity for a budding MSP.

This post is the first in a multi-post series where we will look at containers from an MSP perspective. We’ll start today by defining what a container is, why you should be using them, and how your engineering team can get started with containers. If you want to skip ahead, here are the entries in this series:

Part 1 – What are Containers?

Part 2 – 7 Supported Platforms for Running Containers

Part 3 – Introduction to Docker

Part 4 – 4 Pro Tips for Working with Docker

Part 5 – How to Run Linux Containers on Windows Server 2019

Part 6 – Persistent Docker Containers

Part 7 – Docker Container Networking for MSPs – Part 1

Part 8 – How to Set Up a Linux Container Host

Part 9 – How to Run a Container in Azure with Azure Container Instance

Part 10 – Considerations for Pricing Container Services

Part 11 – Docker Container Networking for MSPs – Part 2

With that out of the way, let’s kick this series off by first talking about what a container is.

What is a Container?

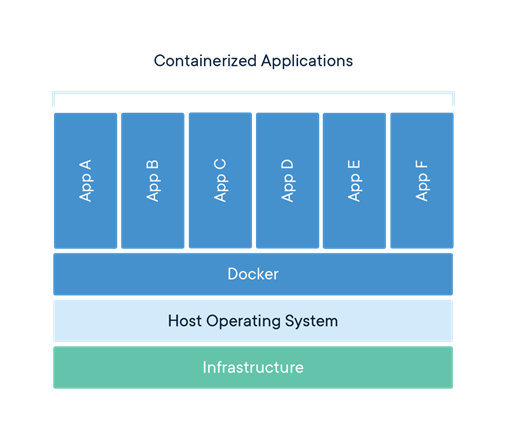

While the definition is ever-changing, put simply, a container is a workload or application that is segregated using what the industry calls kernel virtualization (also known as OS-level virtualization). As the diagram shows, kernel virtualization carves off a dedicated run-space for an application using a pre-defined, ultra-optimized container “image” that contains all the components needed for the workload or application to run. Outside of a shared kernel (unless you are running Hyper-V containers, which give each container its own kernel for full isolation), the application is entirely segmented from the container host itself and from all other containers running on the machine. Once the application has conducted its business, the container is discarded.

*Image from https://blog.docker.com

To put your fears of data retention to rest, a container can be configured to connect to persistent storage should you have a static dataset that needs to be used for the containerized workload. There was a point in time when containerization followed the mantra of “treating your servers as cattle, not pets” in that they should be stateless and disposable, but as containers have started replacing traditional virtualized workloads, the need for static datasets has become more accepted and more easily met.
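As a rough illustration of the persistent storage point, the sketch below builds a `docker run` command that mounts a named volume into a container. The image `example/app:1.0`, the container name `customer-app`, the volume name `customer-data`, and the mount path `/data` are all placeholder assumptions, not anything prescribed by this post. It only prints the command, so it runs without Docker installed.

```python
import shlex


def build_run_command(image, name, volume, mount_path):
    """Return the argv list for a detached `docker run` with a named volume.

    Data written under `mount_path` is stored in the named volume, so it
    is still there after the container itself is removed and recreated.
    """
    return [
        "docker", "run",
        "--detach",                            # run in the background
        "--name", name,                        # hypothetical container name
        "--volume", f"{volume}:{mount_path}",  # named volume -> path inside the container
        image,
    ]


# Placeholder values for illustration only.
command = build_run_command(
    image="example/app:1.0",
    name="customer-app",
    volume="customer-data",
    mount_path="/data",
)

# Print the command instead of executing it.
print(shlex.join(command))
```

The container stays disposable, while anything the workload writes under the mounted path outlives it.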

Now that we’ve defined what a container is, let’s answer the question of why you would use it.

Why Would I Use a Container?

The way I always approach this question is with a little bit of history. Think back to how server computing used to work before virtualization, and how virtualization provided a much more efficient utilization of system resources. We went from hardware being 10% to 15% utilized to hardware being 70% to 80% utilized with technologies like VMware and Hyper-V. There is no doubt that this shift was transformative for the industry. However, fast forward to today, where most workloads are now virtualized, and ask yourself the question: “How many instances of Windows Server 2016 are running in your datacenter?” What if you could eliminate 80% of those instances? That’s a lot of potential computing power given back to running applications, all just by getting rid of redundant instances of an OS. Put simply, I’m seeing containerization do to VMs what virtualization did to physical servers 10 to 15 years ago.

Resource efficiency aside, there are many other reasons to run containers in your customer environments.

Consistency: Because each container is built from a pre-built container image, you can expect a uniform, consistent environment for your containerized application every time it runs. Again, the container image contains an ultra-stripped-down instance of an OS and any supporting software for your application. Because of this architecture, your containerized app will run the same way on any platform that can host that image type. With that in mind, you can run it on Windows, Linux, vSphere, Azure, AWS, Google Cloud, and more!

Ease of Deployment: While the initial “containerization” may take some legwork to get going, ongoing deployment operations will be quick. Not only can you quickly deploy and run a container manually, but the process is also easily scriptable. For example, you could use some of your existing MSP tools to script the deployment of a containerized monitoring or log-gathering tool to your customer base. There is no need to install the tool on-site. Simply build the container, move it, and run it on-premises. When you’re done with it, get rid of the container.
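To make the “easily scriptable” point a little more concrete, here is a minimal sketch of what scripting a rollout across customers might look like. The customer names, the `ssh://` host addresses, and the `registry.example/msp/log-collector:1.0` image are hypothetical. The script is a dry run that only prints the commands it would issue, using the Docker CLI’s `--host` option to point at each remote engine.

```python
import shlex

# Hypothetical customer hosts; in practice this list would come from
# your RMM or documentation tool.
CUSTOMER_HOSTS = {
    "contoso": "ssh://msp@docker01.contoso.example",
    "fabrikam": "ssh://msp@docker01.fabrikam.example",
}

# Hypothetical image for a log-gathering tool you have built.
IMAGE = "registry.example/msp/log-collector:1.0"


def deployment_commands(hosts, image):
    """Build one `docker run` command per customer host.

    `--rm` removes the container when it exits, which matches the
    "when you're done with it, get rid of the container" workflow.
    """
    commands = {}
    for customer, host in sorted(hosts.items()):
        commands[customer] = [
            "docker", "--host", host,  # target the remote Docker engine
            "run", "--rm",
            "--name", f"log-collector-{customer}",
            image,
        ]
    return commands


for customer, argv in deployment_commands(CUSTOMER_HOSTS, IMAGE).items():
    # Dry run: print each command. Replace print with
    # subprocess.run(argv, check=True) once the hosts and image are real.
    print(f"{customer}: {shlex.join(argv)}")
```

Keeping the command construction separate from execution makes it easy to review exactly what will run at each customer site before anything is deployed.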

Safer Patch Cycles: Because of the nature of the “containerized state”, everything needed to run the app or workload is within the container. The extra benefit here is that when a patch cycle comes around, you copy the container, upgrade the app or the underlying container image yourself, then test and deploy. If at any point in that process you run into an issue, you still have the original, working container that you can simply drop back into place. No rollbacks needed here!
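The patch workflow described above boils down to a simple decision: promote the patched image only if it passes your tests, otherwise keep running the original. The sketch below models that decision in plain Python. The tag names and the `fake_smoke_test` function are stand-ins for illustration; a real check would probe the patched container in a test environment.

```python
def choose_tag(current_tag, candidate_tag, smoke_test):
    """Return the image tag to run after a patch attempt.

    `smoke_test` is any callable that takes a tag and returns True when
    the patched image behaves correctly in a test environment.
    """
    if smoke_test(candidate_tag):
        return candidate_tag  # patched image passed, promote it
    return current_tag        # keep the original, untouched image


def fake_smoke_test(tag):
    # Stand-in for real checks (HTTP probe, log scan, and so on).
    # Here we pretend that only the 1.1 build is broken.
    return tag != "1.1"


# The 1.1 patch fails its test, so 1.0 stays in place.
print(choose_tag("1.0", "1.1", fake_smoke_test))
# The 1.2 patch passes, so it is promoted.
print(choose_tag("1.0", "1.2", fake_smoke_test))
```

Because the original image is never modified, falling back is just a matter of continuing to run the tag you already had.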

What are the Limitations When Using Containers?

While there aren’t many limitations, the ones that exist can be big. Because containers grew up in the Linux and open-source world, Windows support arrived relatively late, so if you have an app that requires an older version of Windows Server, you’re out of luck. Container images are only available for Windows Server 2016 or newer. But if your app runs on Windows Server 2016, Windows Server 2019, or Linux, you’re all set!

The other big limitation is that containers are intended to be headless, so if your application requires a GUI, that is a non-starter as well.

What Are Some Examples of a Containerized Workload?

As mentioned above, pretty much anything that can operate in a headless fashion is a great candidate for containerization. Some good examples would be IIS, Apache, and web apps. MySQL and Microsoft SQL Server are now certified to run in a container. Additionally, Microsoft is said to be working on getting all core Windows Server roles/features working within a container. Imagine someday being able to simply move an AD instance from a Windows container host to a Linux container host, or from on-prem to the cloud, in minutes. Lots of interesting possibilities open up.

How Can My Engineering Team Get Started With Containers?

The next question at this point is usually “How do I get started?” First things first: this technology is enough of a mental shift that you must learn some basics again. While containers may look and feel somewhat like virtual machines, they are quite different. Your team should start by learning how to use Docker on their local machines. From there, docs.microsoft.com has some great documentation on getting started with containers on the Windows Server platform.
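For an engineer trying Docker on a local machine for the first time, a reasonable first step is simply confirming that the Docker CLI is installed and then running Docker’s small `hello-world` test image. The sketch below checks for the CLI on the PATH and prints the command to try. It does not run Docker itself, and the helper names are my own.

```python
import shutil


def first_run_command():
    """Return the argv for running Docker's `hello-world` test image."""
    # --rm cleans up the container once it has printed its message.
    return ["docker", "run", "--rm", "hello-world"]


def docker_installed():
    """Report whether a `docker` executable is on the PATH."""
    return shutil.which("docker") is not None


if docker_installed():
    print("Docker CLI found. Try:", " ".join(first_run_command()))
else:
    print("Docker CLI not found. Install Docker first, then try:",
          " ".join(first_run_command()))
```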

Another good resource for general information is a follow-up blog post I wrote earlier in 2018. I had the pleasure of sitting down with Ben Armstrong from the Hyper-V Product Group at Microsoft, and we talked all about containers during an hour-long webinar. The full Q&A from that webinar can be found here.

Additionally, all the coming posts in this series will contain a ton of technical material for your engineering teams, so be sure to stay tuned here as well!

Wrap-Up

One final question that I’d like to leave with you for today. As an MSP, have you looked at containers for your customer base? If so, what was the use-case? Have they worked well? I’d love to hear about your experiences with them in the MSP space, so be sure to let us know in the comments section below!

Thanks for reading!
