
For quite some time, we’d been praying for a vCenter Server high availability (HA) solution worthy of its name. Finally, the VMware god has obliged and granted us the gift of native HA. True HA is now embedded in vCenter Server Appliance. The only catch is that native HA is available only for vCSA 6.5; there is no equivalent for vCenter Server for Windows.

A robust native HA solution was long overdue, all the more so as VMware keeps pushing the envelope with products such as vSAN and NSX, which are highly dependent on a resilient vCenter Server infrastructure. Prior to vSphere 6.5, Microsoft Clustering and vSphere Fault Tolerance were two of the methods used to deploy vCenter in an HA fashion, but the full spectrum of HA requirements was rarely met. For instance, MS Clustering works great for vCenter for Windows, not so much for vCSA. VMware Fault Tolerance is an improvement but it does not protect against application failure. With vSphere 6.5, these kinks have been ironed out for good.

In part 1 of the series, I’ll demonstrate how easy it is to set up a vCenter HA cluster using the first of two deployment options. A second, shorter post will cover the other deployment option, which needs a little more elbow grease.

An overview of the Architecture

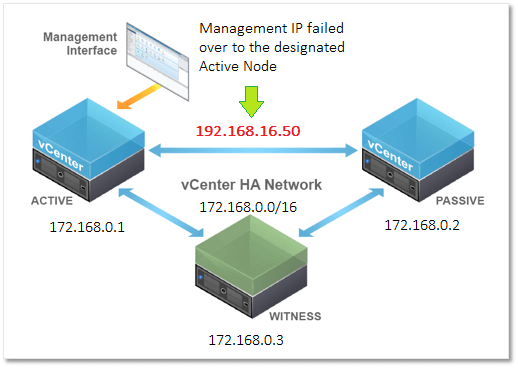

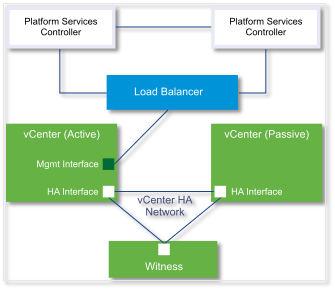

A vCenter High Availability cluster consists of three vCSA 6.5 appliances deployed in an Active, Passive and Witness configuration.

- The Active node is a standard vCenter instance, the state of which is regularly replicated to the Passive node.

- The Passive node takes over when the entire Active node or critical components fail.

- The Witness node is a lighter version of the appliance acting as a quorum node. Quorum is a clustering technique that mitigates split-brain or network partitioning events, where the Active and Passive nodes are both running but unable to talk to each other. One possible outcome of such an event is that you end up with two Active nodes, which is bad to say the least.
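To make the quorum idea a bit more concrete, here is a minimal Python sketch of how the three roles could decide who serves clients. It is a simplified model of my own and not VMware's implementation; the `next_active` function and the `links` dictionary (which node can reach which) are hypothetical names used purely for illustration.

```python
from typing import Dict, Optional, Set

def next_active(links: Dict[str, Set[str]]) -> Optional[str]:
    """Decide which node should hold the Active role (simplified model).

    `links` maps each node name to the set of nodes it can currently reach.
    """
    # The Active node keeps its role while it can reach at least one peer,
    # i.e. while it is still part of a two-out-of-three majority.
    if links["active"] & {"passive", "witness"}:
        return "active"
    # An isolated Active node steps down. The Passive node may take over,
    # but only if the Witness can vouch that it is not the isolated one.
    if "witness" in links["passive"]:
        return "passive"
    # No majority anywhere: nobody serves, which rules out two Active nodes.
    return None

# Active and Passive cannot talk to each other, but both still see the Witness.
partitioned = {
    "active": {"witness"},
    "passive": {"witness"},
    "witness": {"active", "passive"},
}
print(next_active(partitioned))
```

The point of the sketch is that the function can only ever return a single node, so a partition between the Active and Passive nodes never produces two Active nodes.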

To set up a vCenter HA cluster, we begin by installing vCSA as we’d normally do. One of the requirements is to have a second network card connected to the HA or cluster private network. The second NIC is added automatically when you select the Basic deployment option; see further down. The HA network is simply a port group created on ESXi.

VMware recommends placing each HA node on its own ESXi host. The hosts, in turn, should ideally be in a DRS cluster, though this is optional. In practice, this means you need at least 3 ESXi hosts. Also note that the HA port group must be created on every ESXi host running an HA node.

After the Active node is configured, the Passive and the Witness nodes are cloned off of it. The cloning process can be either automated or done manually, depending on the deployment mode selected: Basic or Advanced.

In today’s post, I’ll be writing about the Basic option which does most of the donkey work for you.

Figure 1 illustrates a typical vCenter HA cluster architecture. The Active node has two allocated IP addresses: 192.168.16.50, which is the management address, and 172.168.0.1, the HA network address. Note that while the HA network can be routable, you should not add a gateway address when configuring the second network card on the vCSA.

Figure 1 – A typical vCenter HA cluster (vSphere 6.5 only)

In the event of an Active node failure, the Passive node takes over and becomes the Active node. The management IP address is carried over to the newly designated Active node.

The Requirements

There are a number of software and hardware requirements you must take care of:

- vCSA 6.5. HA is only supported on this release.

- HA cluster nodes are only supported on ESXi 5.5 and/or vCenter Server 5.5 or later.

- Ideally, every HA node should reside on a different host and datastore. Optionally, the hosts should be in a DRS cluster. A minimum of 3 hosts is then required.

- The vCSA deployment size should be set to Small (4 vCPUs / 16GB RAM) or better.

- Create a port group on ESXi for the private HA network. Optionally, you can have a dedicated vSwitch if network isolation is a requirement.

- The HA private network must reside on a different subnet from the one used for management.

- You cannot mix IPv4 and IPv6 addressing when configuring networking on the nodes.

- Network latency on the HA network must be less than 10ms.

- Do not specify a gateway for the HA network when configuring the nodes.

- You will need one IP address for management and three private IP addresses, one for each HA node.

- DNS A and PTR records for the Active node’s management network (i.e. the FQDN for the vCSA).
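Several of the requirements above are easy to sanity check before you start clicking through the wizard. The following is a rough Python sketch that does so using only the standard `ipaddress` module. The `check_vcha_plan` function, its parameters and the /24 masks in the usage example are my own assumptions for illustration; the addresses are the ones used in this article.

```python
import ipaddress

# vCSA deployment sizes at or above Small; Tiny is deliberately left out.
SUPPORTED_SIZES = {"small", "medium", "large", "x-large"}

def check_vcha_plan(mgmt_cidr, ha_cidr, ha_ips, size, ha_gateway=None):
    """Return a list of problems; an empty list means these checks passed."""
    problems = []
    mgmt = ipaddress.ip_network(mgmt_cidr, strict=False)
    ha = ipaddress.ip_network(ha_cidr, strict=False)

    # IPv4 and IPv6 must not be mixed, and the HA subnet must be separate.
    if mgmt.version != ha.version:
        problems.append("management and HA networks mix IPv4 and IPv6")
    elif mgmt.overlaps(ha):
        problems.append("HA network overlaps the management subnet")

    # One private HA address is needed per node: Active, Passive, Witness.
    if len(set(ha_ips)) != 3:
        problems.append("exactly three distinct HA addresses are needed")
    for ip in ha_ips:
        addr = ipaddress.ip_address(ip)
        if addr.version != ha.version or addr not in ha:
            problems.append(f"{ip} is not inside {ha}")

    # The HA interface must be configured without a gateway.
    if ha_gateway is not None:
        problems.append("do not set a gateway on the HA interface")

    if size.lower() not in SUPPORTED_SIZES:
        problems.append(f"deployment size '{size}' is below Small")
    return problems

issues = check_vcha_plan(
    mgmt_cidr="192.168.16.0/24",   # assumed mask
    ha_cidr="172.168.0.0/24",      # assumed mask
    ha_ips=["172.168.0.1", "172.168.0.2", "172.168.0.3"],
    size="Small",
)
print(issues or "plan looks fine")
```

Latency, DNS records and host placement are not covered here since they depend on the live environment rather than on the addressing plan.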

Setting it up

The great thing about vCSA HA, as with many other features, is the ease with which it can be set up in a test lab. In fact, a single ESXi host will suffice but as always make sure to follow the product literature to the letter when deploying to a production environment.

At the moment I do not have a dedicated physical ESXi host for testing so, instead, I deployed a beefed up nested ESXi 6.5 host sporting 4 vCPUs, 32 GB of RAM and 500 GB of storage space. Anyway, after installing and configuring the nested ESXi host, the first thing I did was to create the HA portgroup.

Creating the HA Network on ESXi

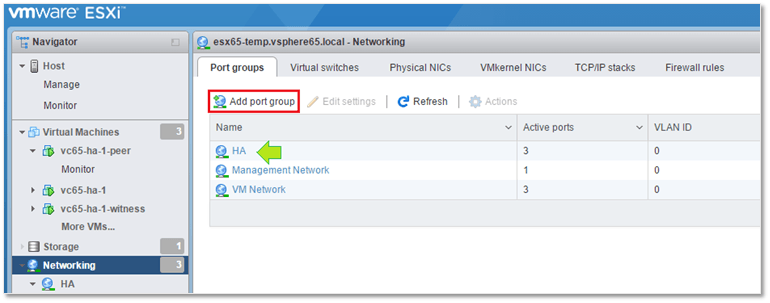

This is easily accomplished using the ESXi host management UI (https://<ESXi FQDN or IP Address>/ui). Log in as root and select Networking from Navigator as in Figure 2.

Figure 2 – Creating a port group on ESXi

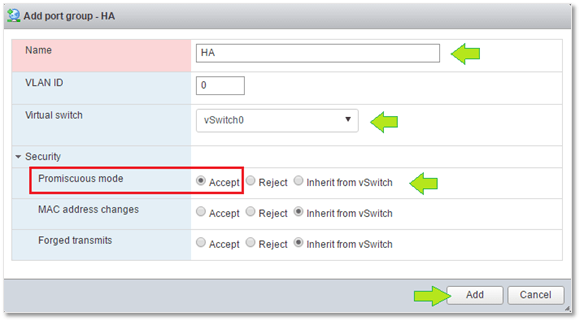

Click on Add port group and type in the port group name (HA in this case). Next, select the vSwitch where you want the port group created or just create a new one. Since we will be hosting the vCenter HA nodes on nested ESXi, we must enable Promiscuous mode on the vSwitch to allow inbound and outbound traffic to the rest of the world.

Figure 3 – Configuring the HA port group

Note: Promiscuous mode is needed only in nested environments such as the one I’m using. It is not a vCSA HA requirement.
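If you prefer the command line over the host UI, the same port group can be created with esxcli from an SSH session on the host. The Python sketch below only assembles the command lines so you can review them before running anything. The `portgroup_commands` helper is hypothetical, `vSwitch0` is an assumed vSwitch name, and the esxcli options are written from memory, so do verify them against the built-in esxcli help on your own host.

```python
import shlex

def portgroup_commands(portgroup="HA", vswitch="vSwitch0", nested=False):
    """Build the esxcli command lines for the HA port group (not executed here)."""
    cmds = [[
        "esxcli", "network", "vswitch", "standard", "portgroup", "add",
        f"--portgroup-name={portgroup}", f"--vswitch-name={vswitch}",
    ]]
    if nested:
        # Promiscuous mode is only needed when the ESXi host itself is nested.
        cmds.append([
            "esxcli", "network", "vswitch", "standard", "policy", "security", "set",
            f"--vswitch-name={vswitch}", "--allow-promiscuous=true",
        ])
    return cmds

# Print the commands for a nested lab host so they can be pasted into a shell.
for cmd in portgroup_commands(nested=True):
    print(shlex.join(cmd))
```

Leave `nested` at its default of `False` for physical hosts, in which case only the port group creation command is produced.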

Installing the Active Node

To install the first node, follow the procedure outlined in my post titled Installing vCSA 6.5 from scratch.

There’s one important step to carry out before moving on. The newly installed vCSA must manage the ESXi host on which it resides. This setup is called a self-managed vCenter Server and is a requirement for configuring vCenter HA with the Basic option. Alternatively, the vCSA can be managed by another vCenter Server as long as both share the same single sign-on domain.

If you omit this requirement, you won’t be able to set up HA using the Basic option. As I learned first hand, the installer simply throws an error at you telling you that it was unable to locate the vCSA in the inventory.

Creating the HA Cluster

The procedure to set up vCenter High Availability is dead simple.

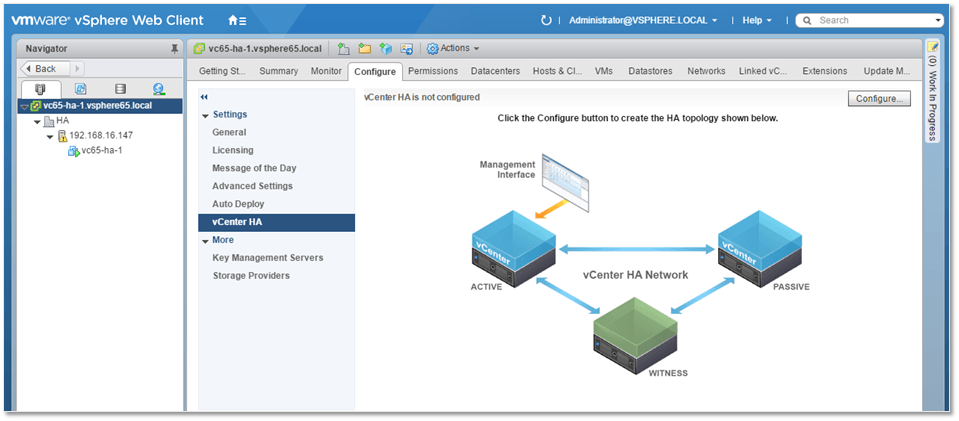

First, point your browser to https://<vcenter FQDN or IP address>/vsphere-client and log in as [email protected].

In my test setup, I created a DC called HA and placed the nested ESXi host under it. The vCSA can be seen listed in Figure 4 as vc65-ha-1.

Next are the actual steps that will create the HA cluster for us.

1 – Using the vSphere Web Client, highlight the vCenter Server name in Navigator and select the Configure tab. Under Settings, select vCenter HA and click on the Configure button.

Figure 4 – Deploying vCenter HA using the vSphere Web Client

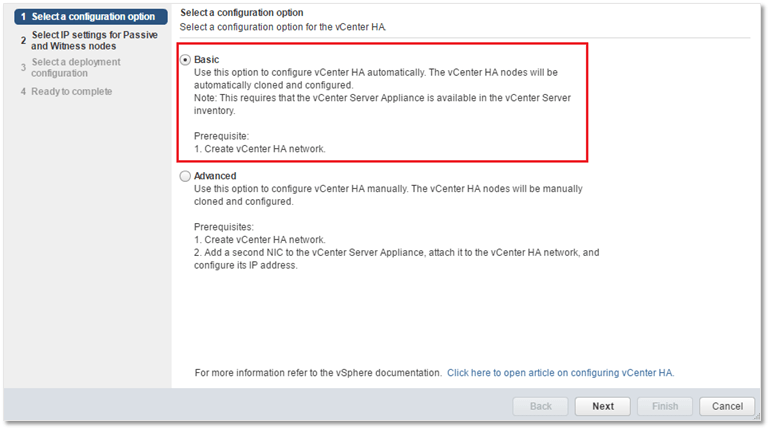

2 – Select the Basic option and press Next.

Figure 5 – Basic and Advanced HA deployment options

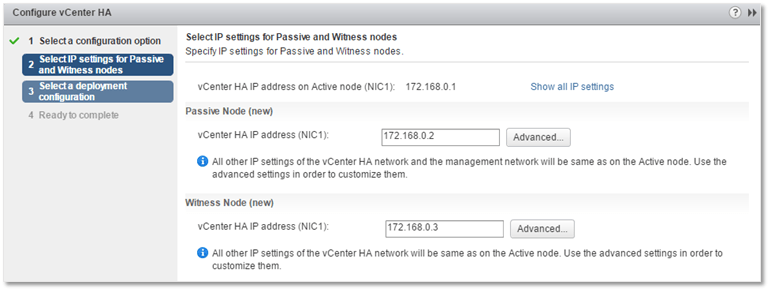

3 – Type in the private HA IP addresses for both the Passive and Witness node. Optionally, you can customize IP addressing for all nodes using the Advanced button. Press Next.

Figure 6 – Configuring HA IP addressing for the Passive and Witness nodes

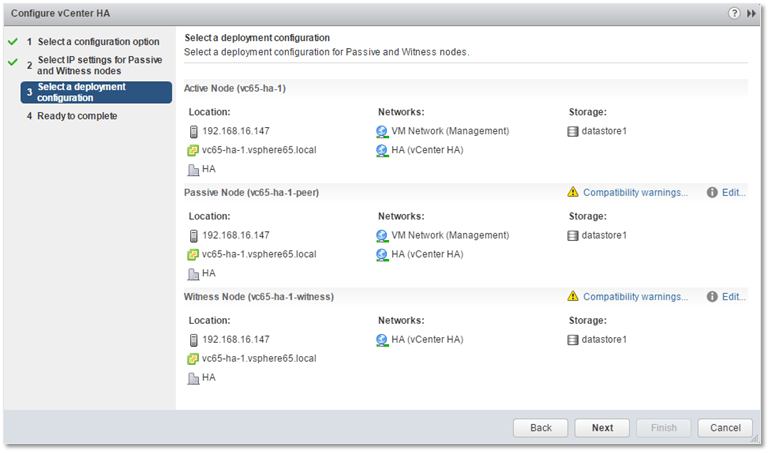

4 – Here you can review the node configuration and make any changes if needed. Press Next.

Figure 7 – Revisiting node configuration

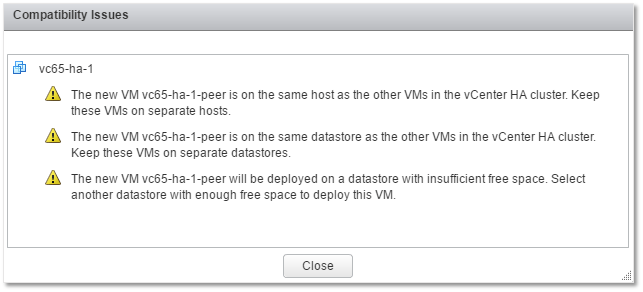

As you can see, I’m getting a load of warnings on account of deploying to a single host and datastore.

Figure 8 – vCenter HA cluster compatibility issues

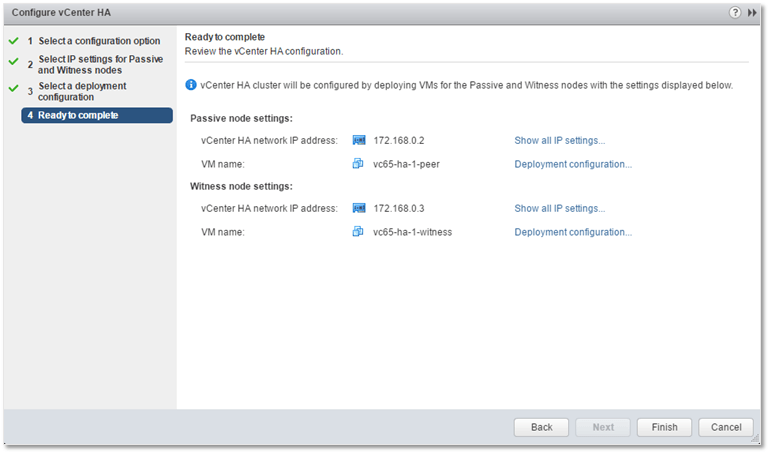

5 – Press Finish to kick off the HA cluster creation process.

Figure 9 – Kicking off the vCenter HA cluster creation process

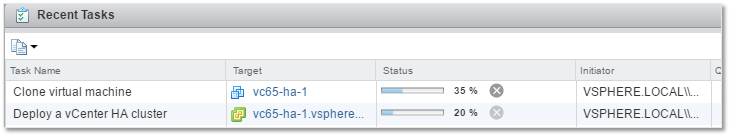

Figure 10 – Monitoring the deployment progress using the vSphere Web Client

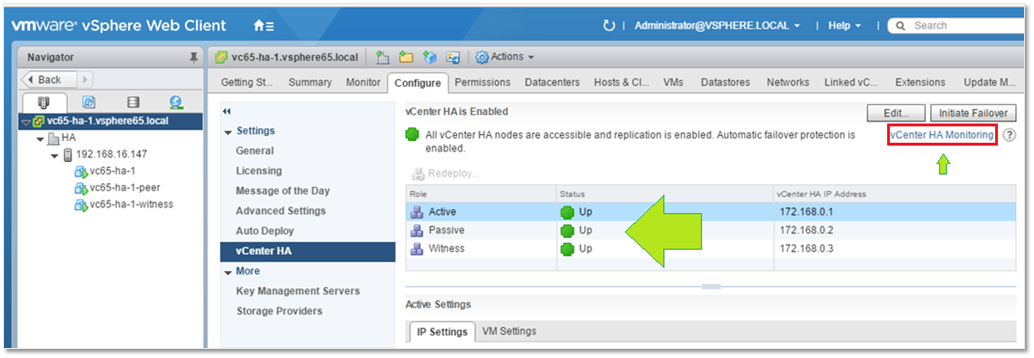

As already mentioned, the Basic option automates the cloning process for you. The first vCSA clone created is the Passive node immediately followed by the Witness node. As per Fig. 11a, the -peer and -witness suffixes are appended to the Passive and Witness node VM names respectively. If the cluster creation process completes successfully, you should hopefully get an Up status for all three nodes.

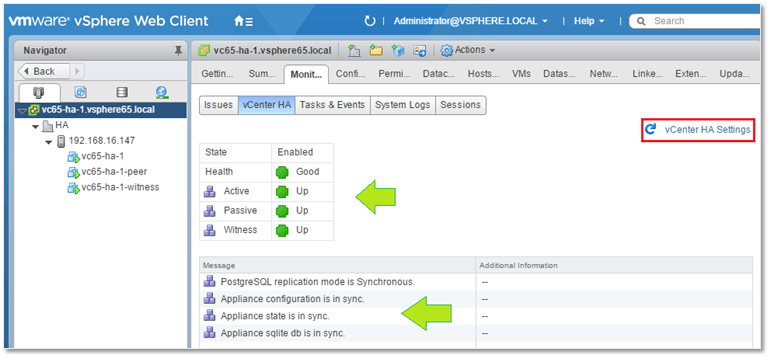

Clicking on the vCenter HA Monitoring link will give you further details about the health of the HA cluster (Fig. 11b).

Figure 11a – vCenter HA node health monitoring

Figure 11b – vCenter HA node health monitoring

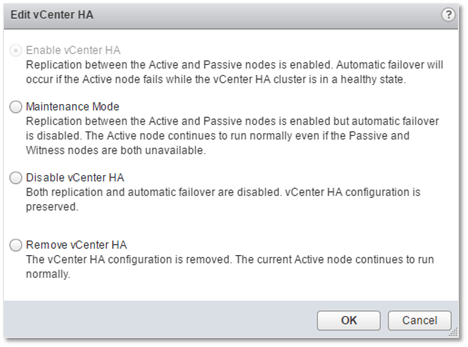

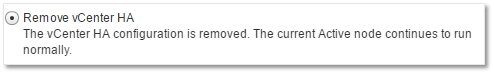

Of course, the whole point of having an HA cluster is to have the Active node fail over automatically when trouble ensues. This is the default behavior; however, you still have some level of control over the failover process. Clicking on the Edit button (Fig. 11a) allows you to do several things. Enable vCenter HA is the default setting, as mentioned. Maintenance mode disables automatic failover but keeps replication running. If you want to disable both failover and replication but keep the HA configuration intact, select the Disable vCenter HA option. And finally, if you’re having a bad day, go ahead and destroy the cluster altogether by selecting Remove vCenter HA.

Figure 12 – Configuring HA behavior and managing it

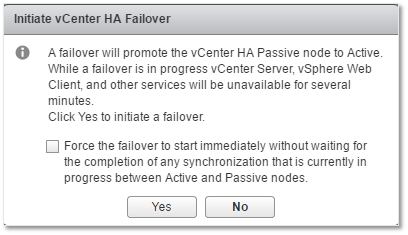
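The three cluster modes are easier to compare side by side. Here is a small Python sketch that encodes what each mode does to automatic failover and replication, as described above. The `VchaMode` enum is just my own way of tabulating the options and does not correspond to any VMware API. Remove vCenter HA is left out since it destroys the cluster rather than changing its mode.

```python
from enum import Enum

class VchaMode(Enum):
    # (label shown in the Edit dialog, automatic failover, replication)
    ENABLED = ("Enable vCenter HA", True, True)
    MAINTENANCE = ("Maintenance mode", False, True)
    DISABLED = ("Disable vCenter HA", False, False)

    def __init__(self, label, auto_failover, replication):
        self.label = label
        self.auto_failover = auto_failover
        self.replication = replication

# Print a quick comparison of the three modes.
for mode in VchaMode:
    print(f"{mode.label:<20} failover={mode.auto_failover!s:<6} replication={mode.replication}")
```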

When automatic failover is disabled, a manual failover may be carried out via the Initiate Failover button (Fig. 11a). I tested this out in my lab and it took vCenter approximately 2-3 minutes to come back online, which I think is a tad slow. Granted, I’m running everything on nested ESXi, which is hardly an ideal scenario, but I’m still curious as to what the response times would be on proper hardware. The documentation doesn’t really give any hints as to what this should be.

Edit: VMware has confirmed that the target RTO is 5 minutes and that it hopes to improve on this in the future.

In truth, the warning in Fig. 13 does say several minutes, but I still think that service resumption time should be measured in seconds, not minutes.

Figure 13 – Initiating a manual failover

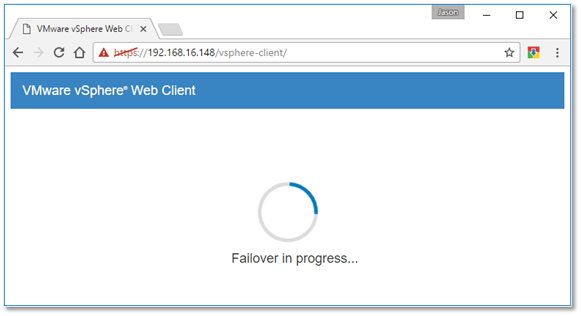

It did take a while before the passive node took over. The failover progress can be viewed in a browser if you try to access the vCSA using the web client.

Figure 14 – Failover in progress warning …

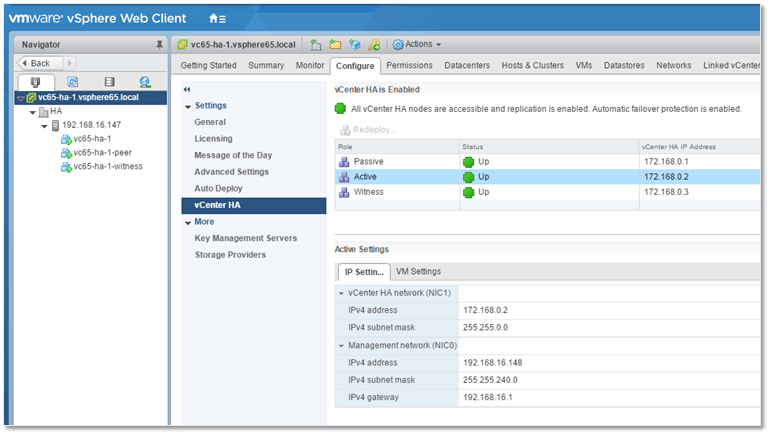

Looking at Figure 15, you’ll probably notice that what was previously the Passive node has now changed over to being the Active node. Notice also that the management IP – 192.168.16.148 – failed over as well but the HA address – 172.168.0.2 – remained the same which is how things are supposed to work.

Figure 15 – vCenter HA cluster status after failover
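The way addresses behave during a failover can be summed up in a few lines of Python. This is only a toy model of what I observed in the lab, not how vCenter HA works internally. The `Node` class and `fail_over` function are hypothetical, and the HA address of the original Active node is taken from Figure 1.

```python
from dataclasses import dataclass
from typing import Optional

@dataclass
class Node:
    name: str
    ha_ip: str                     # private HA address, never moves
    role: str
    mgmt_ip: Optional[str] = None  # only the Active node owns this address

def fail_over(active: Node, passive: Node) -> None:
    """Swap roles and move the management IP; HA addresses stay put."""
    passive.mgmt_ip, active.mgmt_ip = active.mgmt_ip, None
    passive.role, active.role = "Active", "Passive"

first = Node("vc65-ha-1", "172.168.0.1", "Active", "192.168.16.148")
peer = Node("vc65-ha-1-peer", "172.168.0.2", "Passive")

fail_over(first, peer)
print(peer)
print(first)
```

After the call, the peer holds the management address and the Active role while both nodes keep their original HA addresses, which matches what Figure 15 shows.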

Troubleshooting and Additional Information

Here are a few tips, gotchas and links I came across while writing this article which you’ll probably find useful.

DRS

If you’re deploying the HA cluster to a DRS-enabled vSphere cluster with fewer than 3 hosts, you will run into issues with the cloning process. The obvious solution is to use a minimum of three ESXi hosts and/or avoid deploying the HA cluster to a DRS cluster. Another option is described in this article, which works around the issue by changing the config.vpxd.vcha.drsAntiAffinity vCenter advanced setting to false. Again, this is only applicable to testing scenarios. You can also go in and disable the affinity rule the installer creates after it fails the first time round.

Snapshots and DVD mounted ISOs

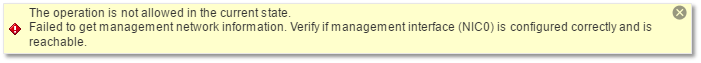

If you get the following error, “The operation is not allowed in the current state. vCenter HA does not support an Active node having disks on more than one datastore”, make sure that the vCSA being cloned does not have any snapshots and that the VM’s DVD setting is set to Client Device.

Restored vCSA

Cloning a vCSA which has been restored from backup may result in the error shown below. This is precisely what I ended up with when I cloned an existing vCSA which I had restored at some point or another. I haven’t found any workaround for this, so my advice would be to always start with a freshly installed vCSA when setting up an HA cluster.

Figure 16 – Error message presumably given on account of cloning a vCSA restored from backup

More troubleshooting tips can be found on the vSphere 6.5 documentation site.

External Platform Service Controller

If you are deploying an HA cluster with an external Platform Service Controller (PSC), you will need at least two PSC instances behind a load balancer. Further information is available here.

Figure 17 – Load balancing external PSCs

Backup and Restore operations

When taking backups, avoid backing up the Passive and Witness nodes. Just take a backup of the Active node. When restoring an Active node, you must remove all the cluster configuration, restore the node, and then re-create the HA cluster.

Figure 18 – Destroying a vCenter HA cluster

Conclusion

In this first part of the series, we’ve seen how the Basic HA deployment option allows you to easily and quickly deploy a 3-node vCenter HA cluster consisting of Active, Passive and Witness vCSA 6.5 nodes. HA was a much needed addition in terms of complementing existing HA strategies given that, as time progresses, more and more VMware products and features have come to rely on and expect 99.999% vCenter Server availability.

In part 2, we’ll see how the Advanced HA deployment option is similarly used to create a vCenter HA cluster.


21 thoughts on "How to deploy a vCenter HA cluster – Part 1"

Jason, there are a number of inaccuracies with your post. Several others have been propagating (I’m sure not intentionally) the same things. Can you tell me where you got the information that you have to manually add the 2nd NIC? For the Basic workflow, we do that for you. You only need to manually add the 2nd NIC for the Advanced Workflow.

DRS is not required. If you do not have DRS you’ll have to manually pick the hosts on which to place the nodes.

A separate VSS is not required for the HA Network. I’ve never seen this recommendation, either. What value do you gain by doing this other than adding complexity?

The HA Network can be routable. I wouldn’t recommend it but it is entirely possible and there are some valid use cases for it. The thing to be aware of here, since you cannot have a second gateway for this interface, is that you need to add and maintain static routes on the nodes to allow them to communicate from separate L2 segments.

You talk about having to setup promiscuous mode on the VSS. Some readers may read that this is required for VCHA. However, you’re having to do this because of your nested ESXi host and has nothing to do with VCHA.

You correctly state a requirement for having a self-managed vCenter Server. However, if the vCenter Server is running in a management cluster, the Basic workflow is still available as long as the managing vCenter Server is in the same SSO Domain. This is an important use case.

The target RTO for VCHA is 5 minutes. We hope to decrease that in the future.

Thanks in advance if you can make corrections based on this information.

Hi Adam,

Thank you for your feedback first of all.

1) I stand corrected re. manually adding a 2nd nic to the VCSA when using the Basic option. I will have to re-test this for completeness sake though but yes, the documentation does state that the HA wizard creates it for you. My bad! Will fix.

2) I found it strange that DRS is recommended but I assumed this was to ensure that each HA node resides on a separate ESXi host using affinity rules. That said, it is listed under requirements on the doc site. Quote: “Using VMware DRS to protect the set of hosts is recommended. In that case, a minimum of three ESXi hosts is required”. I’ve re-listed DRS as optional.

3) A dedicated vswitch improves network isolation as per “This port group can be on an existing virtual switch or, for improved network isolation, you can create a new virtual switch. It must be on a different subnet than the management network on Eth0” from here. Personally, I would still go for a separate switch but that’s my way of doing things.

4) You’re right about the promiscuous mode bit. I actually had but removed a note which clarified that this is needed only when using a nested test setup. Will fix it!

5) RTO still a bit on the high side IMHO but it’s good that you’re working on improving it. I’m all for it 🙂

Thanks again for your feedback. Much appreciated.

Jason

Just remembered. Re. point 1, while testing HA for this post, I recall using a vCSA that had 2 nics already set up and I think that’s why I ended up listing it as a requirement. Also worth mentioning is that, while obviously redundant as you mentioned, you can still manually set up a 2nd nic and the Basic Option will readily pick it up, settings and all.

Yes and that really is a result of us not removing the 2nd NIC if you destroy the HA cluster. We leave it there so it is one less thing to re-do when re-enabling VCHA. And, as you’ll find out for Part 2, manually adding it is required for the Advanced setup 😉

Ah, I imagined that that was by design. Good thinking! Part 2 is ready but has yet to be published. Should be good but, by all means, let me know if something needs to be corrected.

Thanks gents. I learned a lot from this post.

Excellent Article Thank you

Thank you!

Great manual to setup a HA

Is it possible to also add a vCenter in Linked Mode in the same setup?

Have been struggling with the vmware support that say it’s possible.

Have set up a Platform Service Controller (PSC) with 2 vCenter in Enhanced Linked Mode but when coming to the HA setup it fails when the cloning part comes in to get the Passive and Witness node up.

Is it possible to also setup the Platform Service Controller (PSC) in HA?

Hi Stella

Thanks for your comment. I have not tried it myself but according to this KB, enhanced linked mode is not an HA supported topology. See the last two topologies under the supported topologies section. Re. PSC, the supported option is to set up 2 PSCs and use a load balancer.

Hope this helps.

Jason

I am looking into doing this in our environment. I recently read tiny config is not supported by VCHA. This is not actually in a lot of articles which is probably why I missed it until recently. We have a TINY office in the US with only 2 hosts and 44 VMs. TINY is no more than 10 hosts and no more than 100 VMs so this is why I went for TINY. I read you should not use TINY for vCHA because CPU and memory is not enough. However, surely we can increase this to match medium spec if tiny config is already built if it is indeed resources? Has anyone used TINY in production for vCHA? I need to do some more testing in my lab.

https://docs.vmware.com/en/VMware-vSphere/6.5/com.vmware.vsphere.avail.doc/GUID-8FD87389-8CC9-4298-8B08-A1526FB44524.html

Here is a better link I discovered

https://www.vmware.com/content/dam/digitalmarketing/vmware/en/pdf/techpaper/vcha65-perf.pdf

“VCHA supports all the configurations listed in Table 1 in the section “Test Environment.” vCenter Server supports another configuration “Tiny” up to 10 hosts and 100 virtual machines, which is not supported by VCHA. The tiny configuration deploys 2 vCPUs and 10GB of RAM, which is not enough for VCHA to provide RTO guarantees. VCHA should be deployed on the vCenter Server appliance with a deployment size of small or higher, not tiny.”

I did log a ticket with VMWARE. They were not that helpful.

“Deployment Size Small or bigger (4 CPU – 16 GB RAM)

https://docs.vmware.com/en/VMware-vSphere/6.5/com.vmware.vsphere.avail.doc/GUID-8FD87389-8CC9-4298-8B08-A1526FB44524.html

It may work successfully if you do increase the CPU and memory, however, if any issues were to subsequently arise, I would be uncertain of the level of support which could be provided.”

I don’t see how increasing resources can have an adverse effect. I would test it out in a lab but it’s best to stick with VMware’s support advice especially if you need the latter.

Nice guide Jason and good advice to put the node and datastore on separate servers. It would be good if VMWare was smart enough to know a user was shooting themselves in the foot.

Cheers

Thanks for the feedback. True, sometimes documentation is a bit lacking on their part.