

In part 1 of this series, I covered how to deploy a vCenter Server high availability cluster using the Basic configuration, one of the two deployment methods. The Advanced configuration option, which I'll be covering today, gives you more flexibility and control over your deployment. It is also your only option when the Basic option cannot be used. The two cases I came across that enforce this limitation are when a self-managed vCenter Server cannot be deployed and when the vCenter Server managing the vCSA is in a different single sign-on (SSO) domain.

Deploying the Cluster

The procedure is similar to the one described in the first post. The only differences are that you must manually add a second network adapter to the vCSA and clone the appliance twice. The confusing part is that you must pause the configuration wizard midway to create the clones for the Passive and Witness nodes.

The steps are as follows:

1. Create an HA portgroup on every ESXi host that will host a vCenter HA node.

2. Create A and PTR DNS records for the Active node, which is the first vCSA you install. Also, set aside three private IP addresses for the HA network, one per node (e.g., 172.16.1.1-3/24).
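If you manage DNS with BIND-style zone files, the two records for the Active node can be generated rather than typed by hand. The sketch below is illustrative only: the helper name `dns_records`, the hostname `vcsa-active.lab.local` and the management IP `192.168.1.10` are hypothetical lab values, and your DNS server (Windows DNS, for example) may use a different record format altogether. It uses Python's standard `ipaddress` module to derive the reverse-lookup (PTR) name from the IP address and lower-cases the FQDN.

```python
import ipaddress


def dns_records(fqdn, ip, ttl=3600):
    """Return BIND-style A and PTR lines for one host (hypothetical helper)."""
    # Use an absolute, lower-case name, since vCSA hostnames should be lower case.
    fqdn = fqdn.lower().rstrip(".") + "."
    addr = ipaddress.ip_address(ip)
    return [
        f"{fqdn} {ttl} IN A {addr}",
        # reverse_pointer yields e.g. "10.1.168.192.in-addr.arpa" for 192.168.1.10.
        f"{addr.reverse_pointer}. {ttl} IN PTR {fqdn}",
    ]


if __name__ == "__main__":
    # Hypothetical values: substitute your own domain and management IP.
    for line in dns_records("vcsa-active.lab.local", "192.168.1.10"):
        print(line)
```

The A record goes in the forward zone and the PTR record in the matching `in-addr.arpa` reverse zone.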

3. Deploy the first vCSA.

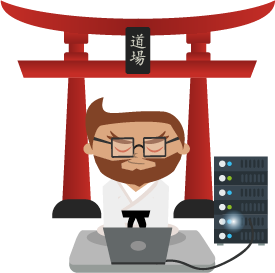

4. From the vSphere Web Client, add a second network adapter to the vCSA by editing the VM's settings, and connect it to the HA portgroup. Using VAMI, configure the IP settings for the second network adapter, then verify that the status of both NICs is Up, as shown in Figure 1.

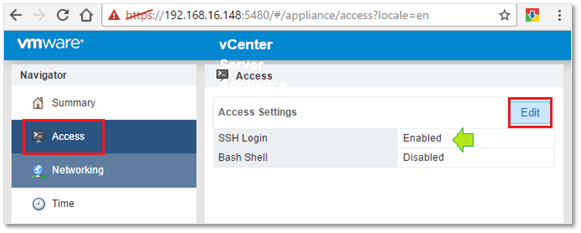

5. While still in VAMI, go ahead and enable SSH.

Figure 2 – Enabling SSH on the first vCSA installed

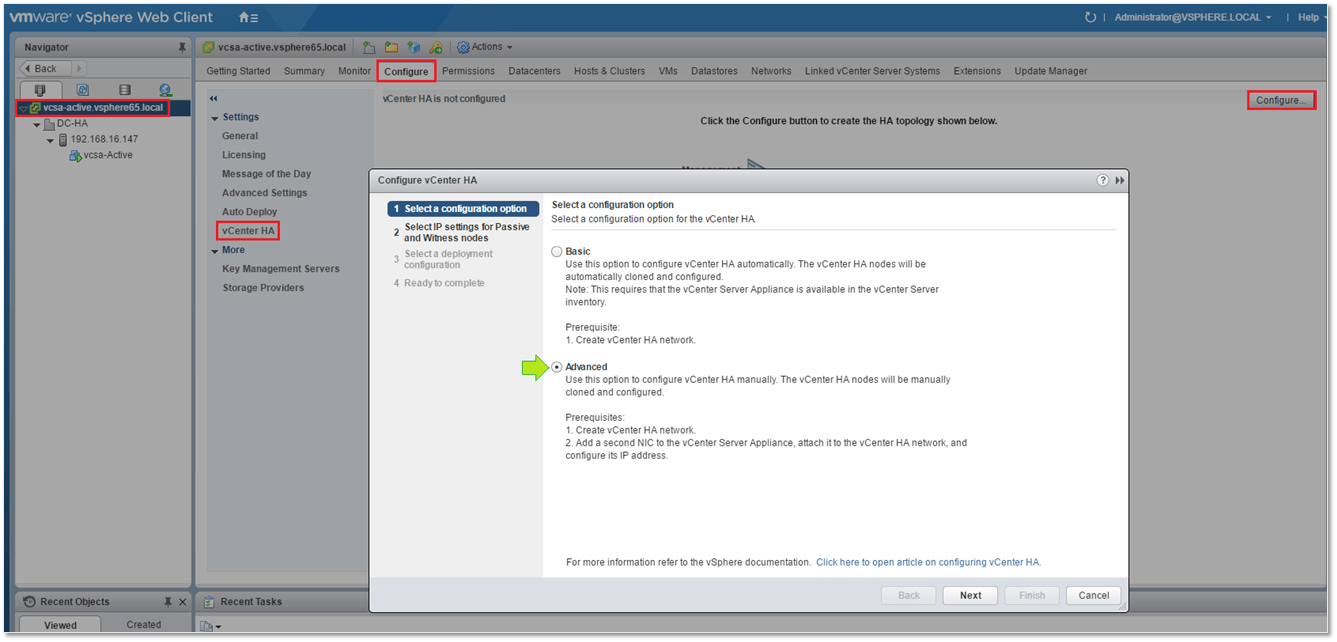

6. Using the vSphere Web Client, launch the vCenter HA configuration wizard (see Fig. 3).

Note: So far there is very little that wasn’t covered in part 1 of this series, so if you’re having difficulties do go back to it for reference.

7. Choose the Advanced configuration option as shown in Fig. 3. Press Next.

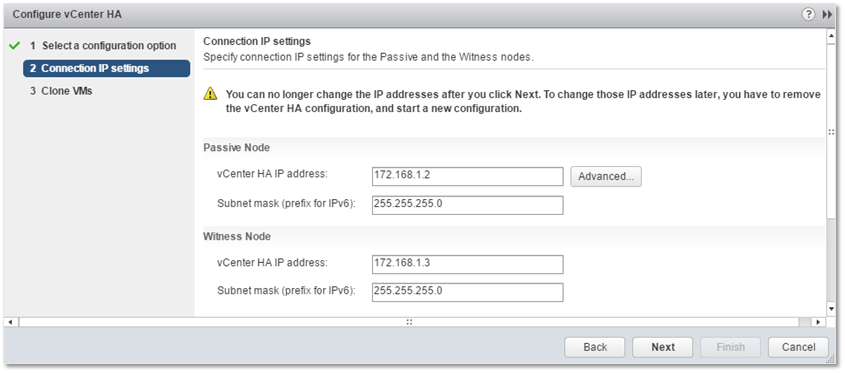

8. Type in the private IP address and subnet mask for both the Passive and Witness nodes. Clicking the Advanced button lets you override the management IP address should you need to specify an alternative one. Press Next.
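Before typing the HA addresses into the wizard, it can help to sanity-check the plan. The sketch below is a hypothetical helper (`check_ha_plan` is not a VMware tool) built on Python's standard `ipaddress` module. It checks that there are three distinct addresses, that they are private, that they share one subnet, and that this subnet does not overlap the management network. The last check reflects my understanding that VMware expects the HA network to sit on a different subnet from the management network. Note that an address such as 172.168.1.1 is not private: the RFC 1918 block in that range is 172.16.0.0/12 (172.16.x.x to 172.31.x.x).

```python
import ipaddress


def check_ha_plan(ha_addrs, mgmt_network):
    """Return a list of problems with a vCenter HA address plan (empty list = looks OK)."""
    problems = []
    ifaces = [ipaddress.ip_interface(a) for a in ha_addrs]  # "ip/prefix" strings
    # One address each for the Active, Passive and Witness nodes.
    if len(ifaces) != 3 or len({i.ip for i in ifaces}) != 3:
        problems.append("need three distinct HA addresses (Active, Passive, Witness)")
    for iface in ifaces:
        if not iface.ip.is_private:
            problems.append(f"{iface.ip} is not a private address")
    # All three nodes should share a single HA subnet.
    if len({i.network for i in ifaces}) > 1:
        problems.append("HA addresses are not all in the same subnet")
    # Assumption: the HA subnet must not overlap the management subnet.
    mgmt = ipaddress.ip_network(mgmt_network)
    if any(i.network.overlaps(mgmt) for i in ifaces):
        problems.append("HA subnet overlaps the management subnet")
    return problems


if __name__ == "__main__":
    # Hypothetical lab plan: HA network 172.16.1.0/24, management 192.168.1.0/24.
    plan = ["172.16.1.1/24", "172.16.1.2/24", "172.16.1.3/24"]
    print(check_ha_plan(plan, "192.168.1.0/24") or "plan looks OK")
```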

Figure 4 – Configuring the HA IP settings on the Passive and Witness nodes

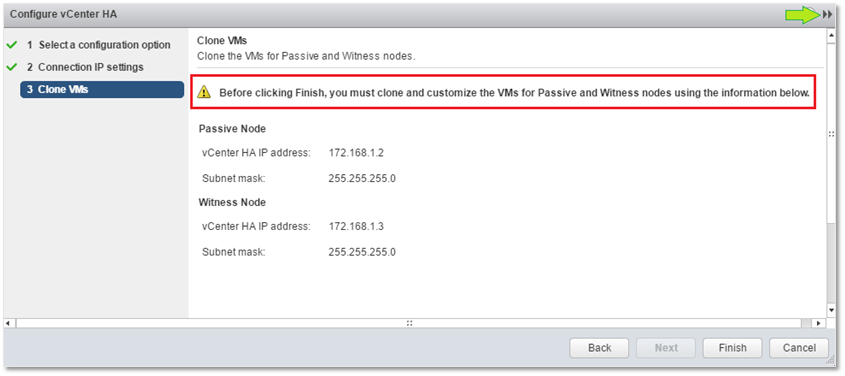

9. The next screen is where you manually clone the Passive and Witness nodes, using the Active node as a template. Take note of the IP addresses you entered previously, as you'll need them later on. Do not press Finish, or anything else for that matter. Instead, click the double-arrow icon at the top-right corner to minimize the current task to the Work In Progress panel. This lets you carry on with the cloning process without having to open another browser session.

Figure 5 – Time to clone!

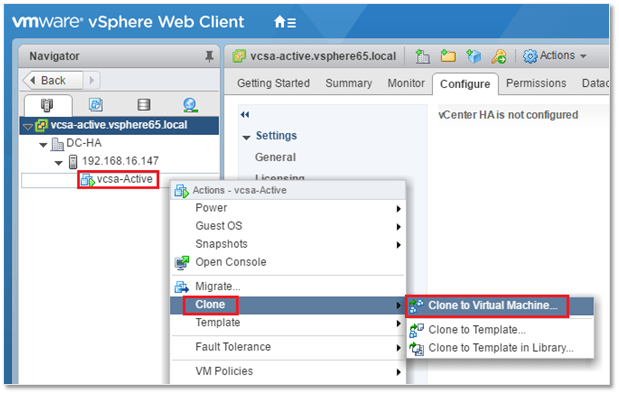

10. Right-click the Active node and select Clone to Virtual Machine.

Figure 6 – Cloning the Passive and Witness nodes from the Active node

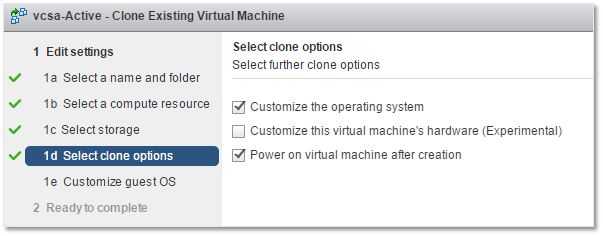

11. This part involves quite a few steps, so I've put together two videos instead of taking a screenshot of each step. Essentially, you clone the Active node twice and customize each clone accordingly. The complete list of steps is available here, and it's all captured in the videos below.

Figure 7 – Selecting to customize the OS during cloning and powering it on when done

Creating the Passive node

The important part is this: during the OS customization step, make sure to type in the Active node's DNS name, and make sure that everything is in lower case. Remember that during a failover, the Passive node becomes the Active node. This means that the Active node's original hostname and management IP address must persist for the cluster to function properly. The DNS domain must, of course, match the one created in DNS.
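Since a wrong or mixed-case name only surfaces at the very end of the wizard, a quick pre-flight check of the values you intend to enter for the Passive node can save a restart. The function below is a hypothetical sketch, not part of any VMware tooling; it simply encodes the rules above: the computer name must equal the Active node's short hostname, the domain must match the Active node's DNS domain, and both should be lower case. `vcsa-active.lab.local` is an assumed example FQDN.

```python
def check_passive_customization(active_fqdn, hostname, domain):
    """Return problems with the Passive node's customization values (empty list = looks OK)."""
    problems = []
    # Split "vcsa-active.lab.local" into "vcsa-active" and "lab.local".
    active_host, _, active_domain = active_fqdn.partition(".")
    if hostname != hostname.lower() or domain != domain.lower():
        problems.append("hostname and domain should be entered in lower case")
    # The Passive node must carry the Active node's hostname, not its own.
    if hostname.lower() != active_host.lower():
        problems.append(f"hostname should be {active_host.lower()!r} (the Active node), not {hostname!r}")
    if domain.lower().rstrip(".") != active_domain.lower().rstrip("."):
        problems.append(f"domain should be {active_domain.lower()!r}, not {domain!r}")
    return problems


if __name__ == "__main__":
    # Reproduces the mistake described below: vcsa-passive instead of vcsa-active.
    for problem in check_passive_customization("vcsa-active.lab.local", "vcsa-passive", "lab.local"):
        print(problem)
```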

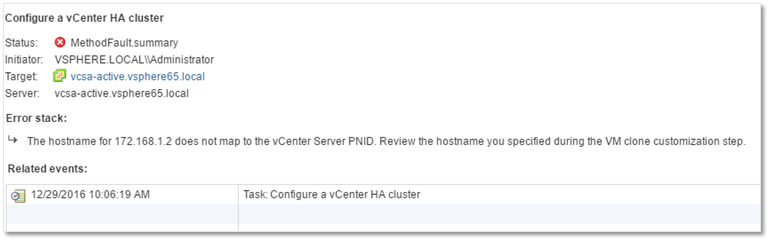

If you watch the first video closely, you can see that I mistakenly typed vcsa-passive for the hostname. This is incorrect, since the hostname must be that of the Active node, i.e. vcsa-active. When a hostname mismatch is detected, the configuration wizard throws a MethodFault.summary error during the finalization process.

Figure 8 – Name mapping error due to an incorrect Passive node name

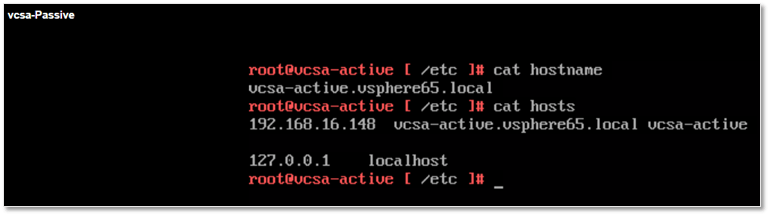

The issue can be rectified by renaming the appliance, which means editing /etc/hosts and /etc/hostname using vi. I don't think this is a VMware-supported fix, but it worked just fine for me, and it's better than having to start all over again. However, if this were a production environment, I would definitely play it safe and redo it from scratch.
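The edit itself is a rename of the host label wherever it appears. As an illustration of exactly what to change (not a supported procedure, per the caveat above), the hypothetical helper below replaces the old name when it appears either as a short name or as the first label of an FQDN, while leaving look-alike names such as `vcsa-passive2` alone. It works on the file contents as strings, so you can review the result before making the change with vi; the sample `/etc/hosts` contents are assumed, not copied from a real appliance.

```python
import re


def rename_host(text, old_name, new_name):
    """Replace old_name where it appears as a host label (short name or start of an FQDN)."""
    # Not preceded by a word character, dot or hyphen, and not followed by a word
    # character or hyphen. A following "." is allowed, so the FQDN
    # "vcsa-passive.lab.local" becomes "vcsa-active.lab.local".
    pattern = re.compile(r"(?<![\w.-])" + re.escape(old_name) + r"(?![\w-])")
    # Use a function as the replacement so new_name is inserted literally.
    return pattern.sub(lambda _match: new_name, text)


if __name__ == "__main__":
    # Hypothetical /etc/hosts contents on the wrongly named Passive node.
    hosts = "127.0.0.1 localhost\n192.168.1.10 vcsa-passive.lab.local vcsa-passive\n"
    print(rename_host(hosts, "vcsa-passive", "vcsa-active"), end="")
```

The same call applies to the contents of /etc/hostname.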

Figure 9 – Node name can be changed from shell if required

When configuring the network interfaces, remember to assign nic0 the same management IP address used for the Active node. For the second NIC, specify the Passive node's HA IP address, the one you typed in during the HA configuration (see Figure 4 above).

Creating the Witness node

The same procedure applies to creating the Witness node. The only difference is that you do not configure the first NIC; just set it to use DHCP. In the video, I chose to edit the Guest OS customization spec previously created for the Passive node, just to speed things up a little. In fact, the customization specs can be prepared in advance by selecting Customization Specification Manager from the Home screen in the vSphere Web Client.
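Because the Passive and Witness customization specs differ only in their network settings, it can help to write the NIC plan down before creating the specs. The sketch below captures the rules from the two sections above in a small data structure: the Passive node's first NIC reuses the Active node's management IP and its second NIC gets the Passive HA IP, while the Witness node's first NIC is left on DHCP and its second NIC gets the Witness HA IP. The names `Nic` and `clone_nic_plan`, and all of the addresses, are hypothetical.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass
class Nic:
    mode: str                      # "static" or "dhcp"
    address: Optional[str] = None  # "ip/prefix" for static NICs, None for DHCP


def clone_nic_plan(active_mgmt: str, passive_ha: str, witness_ha: str) -> Dict[str, List[Nic]]:
    """Return the NIC settings for each clone, first NIC first (hypothetical helper)."""
    return {
        # Passive: first NIC takes over the Active node's management IP; second NIC is on the HA network.
        "passive": [Nic("static", active_mgmt), Nic("static", passive_ha)],
        # Witness: first NIC is left on DHCP; second NIC is on the HA network.
        "witness": [Nic("dhcp"), Nic("static", witness_ha)],
    }


if __name__ == "__main__":
    plan = clone_nic_plan("192.168.1.10/24", "172.16.1.2/24", "172.16.1.3/24")
    for node, nics in plan.items():
        for index, nic in enumerate(nics):
            # Index 0 is the first NIC (nic0); index 1 is the second (HA) NIC.
            print(f"{node} NIC {index}: {nic.mode} {nic.address or ''}".rstrip())
```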

Finalizing the Advanced HA configuration

Once you're done cloning, click the pending task in the Work In Progress panel. This returns focus to the HA configuration wizard. Click Finish to finalize the setup. Assuming you supplied all the correct configuration information, the vCenter HA cluster will be fully online after a few minutes, once the initial replication task has completed.

Things don't always go according to plan, a typical example being when I typed in the wrong hostname for the Passive node. The troubleshooting section on the VMware documentation site is not exhaustive, so you won't always find a solution to a problem there. More often than not, though, problems arise either because the requirements were not met or because the instructions weren't followed to the letter.

Conclusion

This concludes this two-part series, in which I've discussed how to set up a vCenter HA cluster on vCSA 6.5 using the Basic and Advanced configuration options. On first impressions, this seems to be a very viable HA solution, as it is robust and very easy to set up. Above all, it does not require any third-party add-ons. Having said that, I have not really put vCenter HA to the test in a production setting, so there may be issues or limitations that I have unintentionally overlooked or that aren't reproducible in a lab environment.

All said and done, I believe that vCenter High Availability is yet another compelling reason to move to vCSA 6.5.



11 thoughts on "How to deploy a vCenter HA cluster – Part 2"

Nice write-up, Jason; it got me out of talking to VMware support.

Have you had any other issues with HA, specifically services not starting?

Thanks, Philip. Tbh, I've only used HA in a test lab. I did come across a few teething problems, but I don't recall any related to services not starting.

Very useful article, one of the handiest out there, and it finally allowed me to get it over the line.

VMware’s docs were rubbish.

Hi Simon,

I'm now a VCP, so pretty well versed in it.

After doing some investigation and checking the VMware logs, I found that the services were timing out. I just had to set a longer timeout, and everything worked great after that.

Hi Jason, just a quick doubt: I guess both nodes (active and peer) have the same IP address on the production VLAN, right?

Thanks, and by the way great post, indeed!

Only the active node is active on the production VLAN.

The witness and passive nodes are behind the scene on the VCHA network only.

When the active node goes down, the passive node takes over on the production VLAN with the same IP.