Let’s start by defining what an RDM disk is. RDM is short for Raw Device Mapping, a mechanism by which virtual machines are allowed direct access to SAN/NAS storage. In practice, this means that a virtual machine’s RDM disk maps directly to a chunk of storage, a LUN perhaps, on some networked storage. By contrast, a traditional VM disk, or VMDK, consists of storage space carved out of the underlying datastore which, by default, is the same place where the rest of the VM’s files reside.

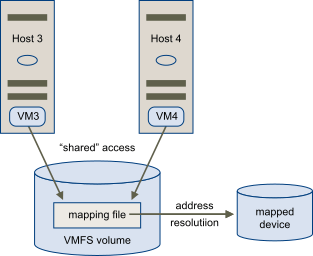

So what’s special about VMware RDM disks? Well, they tend to come in handy in a few specific scenarios. One common use is to grant machines concurrent access to the same resources. A cluster’s data or quorum disks come to mind, where virtual machines have been set up as Microsoft Cluster Service (MSCS) nodes, more so when each node resides on a different ESXi host. This latter scenario is referred to as a Cluster Across Boxes. Further details and guidelines on how to set up a Microsoft Cluster on VMware can be found here and here.

There are other benefits to using RDM disks, among them dynamic name resolution, file permissions, snapshots, vMotion, and a few others that are listed here. That said, RDM disks should be used sparingly and only for specific scenarios. More often than not, one should stick to using traditional VMDKs on VMFS since there’s no performance gain to be made with RDM. If anything, things might run a little bit slower. One should also keep in mind that a LUN used for this purpose can only map to one RDM disk, so again, use RDMs sparingly if SAN storage space is at a premium.

Disclaimers apart, let’s have a look at how one can create a VM with a VMware RDM disk.

Creating the LUN

We first need to make sure that the LUN, the one the RDM disk will map to, is visible to ESXi. But before we do, let’s go ahead and create an iSCSI target. For this post, I’ll be using my home NAS.

The process of creating iSCSI targets, LUNs, etc. holds true irrespective of the storage solution in use. At its most basic, you first need to enable the iSCSI service, create an iSCSI target and map a LUN (chunk of storage space) to it. After that, you can then decide whether you want to secure the LUN by setting initiators (which machines have access to the LUN), CHAP authentication, and so on.

Here’s a 10GB LUN created on my Seagate NAS even though it shows up as being 11GB in size!
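One likely explanation for the size discrepancy, and this is an assumption rather than something confirmed for this particular NAS, is that the LUN was sized in binary gigabytes (GiB) while the list view reports decimal gigabytes (GB) and rounds the figure. A quick sketch of the arithmetic:

```python
GIB = 1024 ** 3  # bytes in a binary gigabyte (GiB)
GB = 1000 ** 3   # bytes in a decimal gigabyte (GB)


def gib_to_gb(size_gib: float) -> float:
    """Convert a size expressed in GiB to decimal GB."""
    return size_gib * GIB / GB


lun_gb = gib_to_gb(10)
print(f"{lun_gb:.2f} GB")  # 10.74 GB
print(round(lun_gb))       # 11
```

If the interface rounds to the nearest whole number, 10.74 GB would show up as 11GB.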

Adding the LUN to ESXi

The next step is to configure the iSCSI Software Adapter on ESXi. I’m going to illustrate this process using a freshly installed stand-alone ESXi host, one that is not managed by vCenter Server. To do this, launch the host client from the https://<ESX IP address> URL and run the following steps.

Step 1: Log on as root, and navigate to Storage -> Adapters. Click on Configure iSCSI as shown next.

Step 2: Assuming it is disabled, enable the iSCSI Software Adapter by selecting Enabled (1). Click Add Static Target (2) and type in the Target name and IP Address (3). This information should be available from your storage solution’s user interface or by running the appropriate commands from the console. Hit Save Configuration (4) to exit setting up the adapter.

Step 3: To verify that the LUN is visible to the host, change over to the Devices tab (1) and look for a disk matching the capacity of the one created on your storage solution (2). Ideally, take note of the device list before you add the LUN so you can easily compare the before and after lists. If the LUN is not listed, hit the Rescan button (3) and recheck the details entered in the static targets section.

Important: Make sure that the device is not used to create a new datastore or perhaps extend an existing one.
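The same three steps can also be carried out from the ESXi shell with esxcli. The following is a minimal sketch that only builds the command lines; it does not run them. The adapter name (vmhba64), target IQN and portal address are hypothetical placeholders, so substitute the values reported by your own host and storage.

```python
import shlex
from typing import List


def iscsi_setup_commands(adapter: str, target_iqn: str, address: str) -> List[str]:
    """Build esxcli command lines mirroring steps 1 to 3 above."""
    commands = [
        # Step 2: enable the software iSCSI adapter
        ["esxcli", "iscsi", "software", "set", "--enabled=true"],
        # Step 2: add a static target to the adapter
        ["esxcli", "iscsi", "adapter", "discovery", "statictarget", "add",
         f"--adapter={adapter}", f"--address={address}", f"--name={target_iqn}"],
        # Step 3: rescan the adapter, then list devices to look for the new LUN
        ["esxcli", "storage", "core", "adapter", "rescan", f"--adapter={adapter}"],
        ["esxcli", "storage", "core", "device", "list"],
    ]
    return [shlex.join(cmd) for cmd in commands]


# Hypothetical values for illustration only
for line in iscsi_setup_commands(
    adapter="vmhba64",
    target_iqn="iqn.2005-10.com.example:target0",
    address="192.168.1.50:3260",
):
    print(line)
```

Running `esxcli iscsi adapter list` on the host beforehand shows the actual name assigned to the software iSCSI adapter.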

Adding an RDM to a VM

As an example, I’ll be adding a VMware RDM disk to a Windows Server VM using the LUN previously created. To create the RDM disk, you can use either ESXi’s host client (or the vSphere Web Client if the host is managed by vCenter Server) or vmkfstools from the command line. In this example, we are using the host client, but you can also add an RDM disk using PowerShell with PowerCLI.

Step 1: To add the disk to the VM, right-click on the VM and select Edit Settings.

Step 2: From the Virtual Hardware tab (1), click on New raw disk and select the Add new RDM disk option (2).

Step 3: The non-allocated LUN (or device) should be listed next. Highlight it and press the Select button as shown.

Step 4: On the Edit Settings screen, navigate to the New Hard Disk section (1) and expand it. Set the Disk Compatibility mode to either Physical or Virtual (2). Press Save (3) to complete the RDM disk addition process.

To quote VMware, the difference between these 2 modes is as follows:

- RDM in virtual compatibility mode, LUN behaves as if it were a virtual disk. The RDM can use snapshots.

- In the physical compatibility mode, the RDM disk offers direct access to the SCSI device for those applications that require lower-level control.
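For the command line route mentioned earlier, vmkfstools uses a different switch for each mode: -r creates a virtual compatibility RDM and -z a physical compatibility one. Here is a minimal sketch that builds the command line for either mode without running it. The device identifier and datastore path are hypothetical placeholders.

```python
import shlex

# vmkfstools switch for each RDM compatibility mode
RDM_FLAGS = {"virtual": "-r", "physical": "-z"}


def rdm_create_command(device: str, mapping_file: str, mode: str = "virtual") -> str:
    """Build the vmkfstools command that maps a LUN to an RDM descriptor."""
    if mode not in RDM_FLAGS:
        raise ValueError(f"mode must be one of {sorted(RDM_FLAGS)}")
    return shlex.join([
        "vmkfstools",
        RDM_FLAGS[mode],
        f"/vmfs/devices/disks/{device}",  # the raw LUN as seen by ESXi
        mapping_file,                     # descriptor to create on a VMFS datastore
    ])


# Hypothetical device ID and path for illustration only
print(rdm_create_command(
    "naa.0123456789abcdef",
    "/vmfs/volumes/datastore1/WinSrv/WinSrv_1.vmdk",
    mode="physical",
))
```

The resulting disk still needs to be attached to the VM as an existing hard disk.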

Step 5: Finally, ensure that the guest OS can access the VMware RDM disk. In the next screenshot, you can see in Disk Management that Windows has correctly detected the 10GB disk. All that’s left now is to bring the disk online, then create and format the volume unless, of course, you wish to leave the disk raw.

So, what’s inside an RDM file?

For completeness’ sake, I’ll briefly cover what exactly comprises an RDM disk in terms of VM files.

As can be seen in the next screenshot, I’m inspecting a VMware RDM disk file using the more command from ESXi’s shell. Prior to this, I took note of the RDM disk’s filename from the VM’s settings. Looking at the directory listing in PuTTY, there are at least 2 files associated with the RDM disk. Just like conventional VMDK disks, VMware RDM disks have a descriptor file, the contents of which can be seen below.

Under the Extent Description section, you’ll find a reference to a mapping or pointer file which is listed as <VM NAME>-rdm.vmdk. This latter file is what maps to the LUN we previously mounted, as a device, in ESXi.
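To make the descriptor layout more concrete, here is a minimal sketch that pulls the extent line out of a descriptor and reports the mapping file it points to. The sample descriptor is illustrative only: it is a trimmed approximation of what a virtual compatibility RDM descriptor looks like, not a copy of the one in the screenshot, and the VM name WinSrv is hypothetical.

```python
import re
from typing import Dict, List

# Illustrative descriptor for a 10GB RDM (assumed, trimmed content)
SAMPLE_DESCRIPTOR = '''# Disk DescriptorFile
version=1
CID=fffffffe
parentCID=ffffffff
createType="vmfsRawDeviceMap"

# Extent description
RW 20971520 VMFSRDM "WinSrv-rdm.vmdk"
'''

# An extent line reads: <access> <size in 512-byte sectors> <type> "<file>"
EXTENT_RE = re.compile(r'^(\w+)\s+(\d+)\s+(\w+)\s+"([^"]+)"')


def parse_extents(descriptor: str) -> List[Dict[str, object]]:
    """Return the extents found in a VMDK descriptor."""
    extents = []
    for line in descriptor.splitlines():
        match = EXTENT_RE.match(line.strip())
        if match:
            access, sectors, kind, filename = match.groups()
            extents.append({
                "access": access,
                "type": kind,
                "file": filename,
                "size_gib": int(sectors) * 512 / 1024 ** 3,
            })
    return extents


for extent in parse_extents(SAMPLE_DESCRIPTOR):
    print(extent["file"], extent["type"], extent["size_gib"])
```

The sector count multiplied by 512 bytes gives the size of the mapped LUN, which is a handy sanity check when matching a descriptor to a device.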

It’s good to know how this information is used, as it allows you to recreate an RDM disk if the virtual disk is reported as either corrupted or not a supported format. The whole process is detailed in this KB article.

Conclusion

RDM disks should be used sparingly. Even then, use them to cater for specific cases such as clusters and to offload I/O workloads, such as SAN snapshots. You’ll find that an RDM disk is, in fact, a VMDK file, except that in this case it contains metadata (mappings) on how to reach a specific LUN. As we’ve seen, the benefits are many, but traditional VMFS datastores are generally preferred when creating VM disks. There are also a number of limitations to RDM you should be aware of. These are listed here.

If you’d like to learn more about VMware storage, do have a look at our 2-part series titled Of Storage, Protocols, and Datastores. If there’s anything in this post you’re unsure about, don’t hesitate to write to me using the comments below and I’ll get back to you as soon as possible.

4 thoughts on "How to create vSphere VMs with RDM disks"

Hey Jason,

Thank you so much for this awesome blog.

I have one question here. Is it possible to install OS in RDMs?

Hi. You can install the OS on an RDM, although what would be the use case for it?

It is recommended to stick with standard virtual disks for the OS.