My all-time favorite VMware feature is definitely vMotion. For the uninitiated, vMotion allows you to move powered-on virtual machines across ESXi hosts without incurring any downtime. For this reason, vMotion is also referred to as hot or live migration. Cold migration, on the other hand, is used to migrate powered-off or suspended VMs whenever vMotion is not available.

With vMotion, you can carry out maintenance tasks on ESXi hosts and storage backends without disrupting service. Simply put, VMs keep on running without missing a beat while migrating from source to destination.

Since its inception, vMotion has kept getting better with every release of vSphere. With vSphere 6.5, vMotion traffic can be encrypted to secure VMs as they transit across sites over potentially non-secure links.

To enable vMotion, ESXi hosts must be managed by vCenter and appropriately licensed. The good news is that all versions of vSphere, save for the Essentials Kits, include vMotion as a standard feature. If in doubt, refer to this whitepaper for further details. Shared storage is something else you must take into account as it is a requirement in some instances.

This post deals with vMotion as implemented in vSphere 6.5, the latest release at the time of writing.

vMotion Migration Types

There are three main types or modes of vMotion as listed next. I’ve included some of the inherent benefits as well as a clip showcasing each type. In all three videos, I’ve left a ping running in the background on the VM being migrated to show how network connectivity is maintained all throughout the migration.

1 – vMotion

This vMotion migration type allows you to move or migrate a virtual machine from one compute resource or ESXi host to another without incurring any downtime on the VM. For this to work, the VM files must reside on shared storage.

Benefits:

The main benefit, as mentioned, is one where you can move VMs to secondary hosts without needing to schedule downtime for maintenance. Additionally, features such as DRS leverage vMotion to better distribute load across hosts.

Example:

In the following video, I’m simply migrating a VM running CentOS from one ESXi host to another.

2 – Storage vMotion (svMotion)

With this type of migration, VMs can be migrated across different types of datastores on the same host without incurring any downtime. The ESXi host where the VM resides must have access to both source and target datastores.

Benefits:

- If required, the disk provisioning type for an existing VM can be changed during a migrate task.

- Where applicable, the VM’s files on the destination datastore are changed to match the inventory name of the virtual machine. The migration renames all virtual disk, configuration, snapshot, and .nvram files.

- VMs can be moved off a storage device to allow maintenance or reconfiguration of the storage device without any downtime.

- Manually redistribute VMs or virtual disks to different storage volumes to balance capacity or improve performance.

Example:

In this next video, I’m migrating the same CentOS VM across SAN datastores via iSCSI. The VM will, however, still reside on the same ESXi host.

3 – Enhanced vMotion

The third type combines vMotion and svMotion into one single operation. This allows you to migrate VMs across hosts and disparate datastores at the same time without the need for shared storage.
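To make the distinction between the three types concrete, here is a minimal Python sketch that names the migration type from what actually changes during the move. The `Placement` class, the `migration_type` function and the host and datastore names are all made up for illustration; none of this is part of any vSphere API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Placement:
    """Hypothetical record of where a VM runs and where its files live."""
    host: str
    datastore: str


def migration_type(source: Placement, target: Placement) -> str:
    """Name the migration type implied by moving a VM from source to target."""
    host_changes = source.host != target.host
    datastore_changes = source.datastore != target.datastore

    if host_changes and datastore_changes:
        return "Enhanced vMotion"   # compute and storage move together
    if host_changes:
        return "vMotion"            # compute only, files stay on shared storage
    if datastore_changes:
        return "Storage vMotion"    # storage only, VM stays on the same host
    return "no migration"


if __name__ == "__main__":
    current = Placement(host="host-1", datastore="datastore-1")
    print(migration_type(current, Placement("host-2", "datastore-1")))  # vMotion
    print(migration_type(current, Placement("host-1", "datastore-2")))  # Storage vMotion
    print(migration_type(current, Placement("host-2", "datastore-2")))  # Enhanced vMotion
```

The sketch only labels the move. It does not check whether the move is actually allowed, for instance whether the target host can reach the datastore.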

Benefits:

Allows you to migrate VMs across vCenter Server instances, data centers, and subnets.

Example:

In this last video, the CentOS VM is migrated from a SAN datastore mounted on ESXi host esx-c

vMotion on steroids

From vSphere 6.0 onward, vMotion supports the following migration types on top of the 3 outlined so far.

- Migrate to another virtual switch – Moving the network of a VM to a virtual switch of a different type. You can migrate VMs without the need to reconfigure the physical and virtual network. Supported scenarios include vSS to vSS, vSS to vDS and vDS to vDS. Migration from a vDS to vSS is not supported due to how vDS metadata is stored.

- Migrate to another data center – While moving VMs between data centers, you can change the data center a VM resides in. For networking in the target data center, you can select a dedicated port group on a distributed switch.

- Migrate to another vCenter Server system – Moving VMs between two vCenter Server instances that are connected in Enhanced Linked Mode. You can also migrate VMs between vCenter Server instances that are located across a long distance from each other.
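The supported virtual switch combinations listed above boil down to a small lookup. The following Python sketch encodes them as a set of pairs; the names `SUPPORTED_SWITCH_MOVES` and `can_migrate_network` are hypothetical and only mirror the list in this post, so treat it as a memory aid rather than a validation tool.

```python
# Supported (source, target) virtual switch pairs, as listed in this post.
# vSS = standard switch, vDS = distributed switch.
SUPPORTED_SWITCH_MOVES = {
    ("vSS", "vSS"),
    ("vSS", "vDS"),
    ("vDS", "vDS"),
}


def can_migrate_network(source_switch: str, target_switch: str) -> bool:
    """Return True if the post lists this switch-to-switch move as supported."""
    return (source_switch, target_switch) in SUPPORTED_SWITCH_MOVES


if __name__ == "__main__":
    for move in [("vSS", "vDS"), ("vDS", "vSS")]:
        verdict = "supported" if can_migrate_network(*move) else "not supported"
        print(f"{move[0]} to {move[1]}: {verdict}")
```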

Setting up vMotion

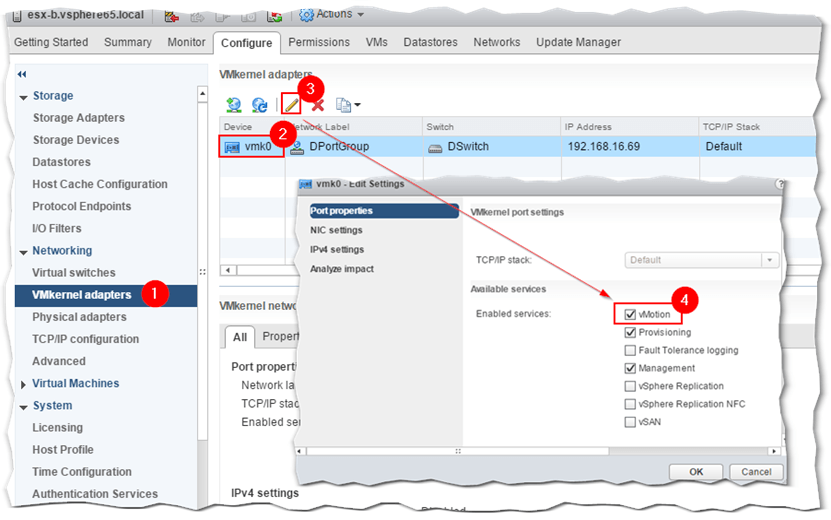

There are a few network requirements and best practices you need to be aware of before enabling vMotion. For improved performance and security, set aside at least one 1GbE network card for vMotion traffic. To activate the feature on ESXi, highlight the host in Navigator, click on the Configure tab and enable the vMotion service on the default VMkernel adapter (vmk0) as shown in Figure 1. Additionally, you should set the VMkernel’s TCP/IP stack type to vMotion for best performance. The full details are available here and here.

Figure 1 – Setting up a VMkernel adapter for vMotion

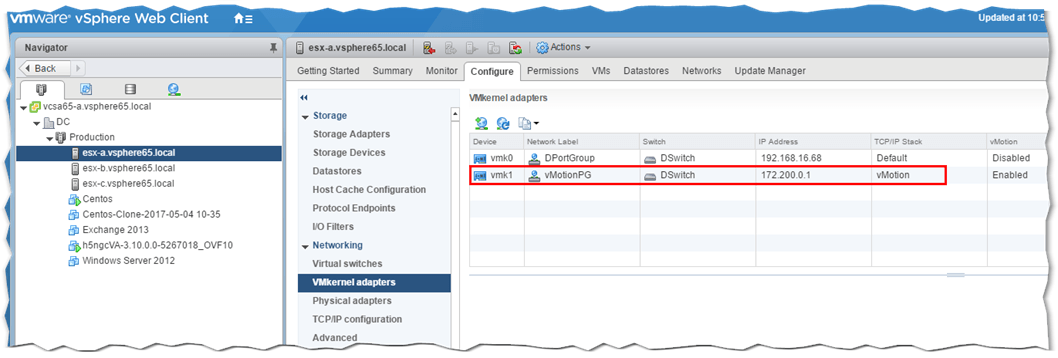

In my environment, I created a second portgroup and an associated VMkernel (vmk1) adapter on all three hosts which in turn are connected to a single distributed switch as per Figure 2.

Figure 2 – A vMotion dedicated portgroup and VMkernel

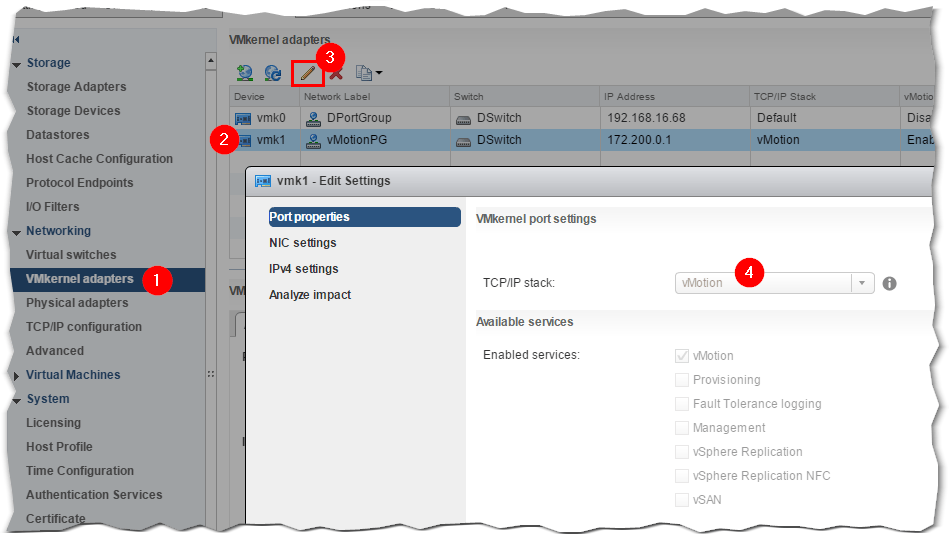

I also changed vmk1’s TCP/IP stack to vMotion as opposed to the default one. To do this, select the respective ESXi host in Navigator and click on the Configure tab. As per Fig. 3, click on VMkernel adapters (1) and select the VMkernel (2) for which you want the stack type changed. After clicking on the Edit Settings icon (3), go ahead and select vMotion from the TCP/IP stack drop-down box (4). Repeat the same process on any other ESXi host where you want vMotion enabled.

Figure 3 – Changing the TCP/IP stack to vMotion for optimized performance
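The network recommendations above can be turned into a quick checklist. The Python sketch below assumes you have already gathered each host's VMkernel details by whatever means you prefer; the `VMkernelAdapter` class and its fields are hypothetical and do not correspond to a vSphere API object. It flags a host that has no adapter set up for vMotion, an uplink slower than 1GbE, or an adapter still on the default TCP/IP stack.

```python
from dataclasses import dataclass
from typing import List

MIN_LINK_SPEED_MBPS = 1000  # the 1GbE minimum mentioned above


@dataclass(frozen=True)
class VMkernelAdapter:
    """Hypothetical summary of a VMkernel adapter, not a vSphere API object."""
    device: str            # e.g. "vmk1"
    vmotion_enabled: bool  # vMotion service enabled on the adapter
    tcpip_stack: str       # "default" or "vmotion"
    link_speed_mbps: int   # speed of the uplink behind the adapter


def vmotion_network_issues(adapters: List[VMkernelAdapter]) -> List[str]:
    """Return human-readable problems found in one host's vMotion networking."""
    candidates = [a for a in adapters
                  if a.vmotion_enabled or a.tcpip_stack == "vmotion"]
    if not candidates:
        return ["no VMkernel adapter is set up for vMotion"]

    issues = []
    for adapter in candidates:
        if adapter.link_speed_mbps < MIN_LINK_SPEED_MBPS:
            issues.append(f"{adapter.device}: uplink is slower than 1GbE")
        if adapter.tcpip_stack != "vmotion":
            issues.append(
                f"{adapter.device}: uses the {adapter.tcpip_stack} TCP/IP stack")
    return issues


if __name__ == "__main__":
    host_adapters = [
        VMkernelAdapter("vmk0", False, "default", 1000),   # management only
        VMkernelAdapter("vmk1", True, "vmotion", 10000),   # dedicated to vMotion
    ]
    print(vmotion_network_issues(host_adapters) or "vMotion networking looks fine")
```

An adapter on the default stack is reported as an issue here purely because this post recommends the vMotion stack; vMotion itself still works over the default one.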

There are also some shared storage requirements that you must meet. I’ve picked these straight off VMware’s documentation.

- Configure hosts for vMotion with shared storage to ensure that virtual machines are accessible to both source and target hosts.

- During a migration with vMotion, the migrating virtual machine must be on storage accessible to both the source and target hosts. Ensure that the hosts configured for vMotion use shared storage. Shared storage can be on a Fibre Channel storage area network (SAN), or can be implemented using iSCSI and NAS.

- If you use vMotion to migrate virtual machines with raw device mapping (RDM) files, make sure to maintain consistent LUN IDs for RDMs across all participating hosts.

Refer to the vSphere Storage documentation for more information.
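As a rough illustration of the storage requirements above, the following Python sketch checks two things: that every datastore holding the VM's files is visible to both hosts, and that each RDM is presented with the same LUN ID everywhere. The function names and the input shapes (plain sets and dictionaries) are assumptions made for this example; in practice you would populate them from your own inventory data.

```python
from typing import Dict, List, Set


def shared_storage_issues(vm_datastores: Set[str],
                          source_host_datastores: Set[str],
                          target_host_datastores: Set[str]) -> List[str]:
    """List the VM's datastores that either host cannot access."""
    issues = []
    for name in sorted(vm_datastores):
        if name not in source_host_datastores:
            issues.append(f"{name} is not accessible from the source host")
        if name not in target_host_datastores:
            issues.append(f"{name} is not accessible from the target host")
    return issues


def inconsistent_rdm_luns(lun_ids_by_host: Dict[str, Dict[str, int]]) -> List[str]:
    """Return the RDMs whose LUN ID is missing on, or differs between, hosts.

    lun_ids_by_host maps a host name to {RDM name: LUN ID as seen by that host}.
    """
    all_rdms = set()
    for luns in lun_ids_by_host.values():
        all_rdms.update(luns)

    inconsistent = []
    for rdm in sorted(all_rdms):
        seen = {luns.get(rdm) for luns in lun_ids_by_host.values()}
        if len(seen) != 1:  # None means the RDM is not presented to that host
            inconsistent.append(rdm)
    return inconsistent


if __name__ == "__main__":
    print(shared_storage_issues({"datastore-1"},
                                {"datastore-1", "local-1"},
                                {"local-2"}))
    print(inconsistent_rdm_luns({
        "host-1": {"rdm-a": 5, "rdm-b": 7},
        "host-2": {"rdm-a": 5, "rdm-b": 8},
    }))
```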

Conclusion

I’ve covered the basics of vMotion and hopefully you have come to appreciate how important it is for avoiding downtime whenever urgent maintenance is called for. The advantages become even more apparent when vMotion is used in conjunction with DRS (Distributed Resource Scheduler), especially Predictive DRS.

2 thoughts on "Why IT Admins Adore vMotion.. And Why You Should Too"

Great article Jason.

Could you also explain the background process of vmotion (how the memory contents are copied from source host to destination host).

Thanks in advance.