
Containerization technology, brought to the limelight thanks to Docker, has been a hot topic for quite some time, and vSphere Integrated Containers is a testament to this. Apart from being a de facto industry standard, Docker also happens to be open source. This may have encouraged heavyweights like Microsoft and VMware to dive in and have a go at containerization themselves, in an effort to further boost their virtualization offerings.

VMware has released vSphere Integrated Containers, which takes a slightly different approach to containers. Note that the process has changed significantly over the lifecycle of vSphere Integrated Containers. You can find details in our blogs for VIC 1.2.1 and VIC 1.4.

Let’s take a brief look at containers and the spin VMware gave to this technology.

So, what is a container?

Take a Linux operating system, typically running as a guest OS in a virtual machine, and split it up into isolated segments or user spaces. The Linux guest OS is said to be the container host, and each container occupies one of the available segments. While containers share the host's kernel and allocated resources, they should, in theory, be isolated from one another and from the kernel itself. A container can run any process as long as it is supported by the container host's Linux kernel. This means that you won't be able to run Windows in a container on a Linux-based container host. Running Windows in containers is, however, perfectly feasible on Windows container hosts using Microsoft's container technology.

A container also encapsulates configuration settings, environment variables, and dependencies, making it ideal for packaging and distributing an entire environment to developers, testers, and others; this is its main advantage. Portability is another, as a container can run virtually anywhere. An often-cited benefit is a reduced need for virtual machines since containers, supposedly, make better use of system resources when compared like-for-like, whereas virtual machines tend to carry higher resource overheads.

What about Docker?

Docker is an open-source containerization framework. Containers are created from images, which are typically pulled from Docker Hub, a registry (repository) of Docker images. A Docker client (command-line tool) is used to connect to a Docker daemon, or endpoint, running on the container host. A Docker environment can be set up locally on Linux, Windows, Mac, and VMware's Photon OS or, if preferred, on a cloud platform such as Amazon's AWS or Microsoft's Azure. If you're new to Docker, like I am, the best source of information is Docker's own documentation website, where you'll find guides, tutorials, and examples that should get you going in no time at all.

What are vSphere Integrated Containers?

VMware has very recently added vSphere Integrated Containers (VIC) to its line of features. VIC gives the overall concept a new twist, since containers are deployed as virtual machines as opposed to OS slices. The virtual container host (VCH), itself deployed as a VM, provides the same Docker endpoint you'd normally connect to using a traditional Docker client.

If you're wondering about vSphere Integrated Containers vs. Kubernetes, the two are very different things. VIC lets you run containers through a VCH and manage them in vSphere, while Kubernetes is a complete container orchestration platform.

So why did VMware go down this route? To paraphrase VMware, this approach has a number of advantages:

vSphere, not Linux, is the container host:

- Containers are deployed as VMs, not in VMs.
- Every container is fully isolated from the host and from the other containers.
- vSphere provides per-tenant dynamic resource limits within a vCenter Server cluster.

vSphere, not Linux, is the infrastructure:

- You can select vSphere networks that appear in the Docker client as container networks.
- Images, volumes, and container state are provisioned directly to VMFS.

vSphere is the control plane:

- Use the Docker client to directly control selected elements of vSphere infrastructure.
- A container endpoint Service-as-a-Service presents as a service abstraction, not as IaaS.

In addition, vSphere Integrated Containers consists of the following components (source: VMware):

- vSphere Integrated Containers Engine: A container engine that is designed to integrate all the packaging and runtime benefits of containers with the enterprise capabilities of your vSphere environment.
- vSphere Integrated Containers Registry: A Docker image registry with additional capabilities such as role-based access control (RBAC), replication, and so on.

I suggest reading this guide for further details on the architecture, use case examples, and how to deploy vSphere Integrated Containers. The VMware VIC documentation site is also a good source of information.

vSphere Integrated Containers Requirements

VIC can be deployed to standalone ESXi hosts as well as to vCenter Server. In this post, I've chosen to deploy vSphere Integrated Containers to a standalone ESXi 6.5 host set up as a nested instance on VMware Workstation Pro. Likewise, I'll be running the Docker client on a CentOS 7 VM, also running on Workstation Pro. The basic requirements before proceeding are as follows.

Network

- A DHCP server. In my case, DHCP services are handled by VMware Workstation, which is one reason I opted for it.
- Allow outbound TCP traffic to port 2377 on the endpoint VM, for use by the interactive container shell.
- Allow inbound HTTPS/TCP traffic on port 443, for uploading to and downloading from datastores.
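Before going further, it can help to confirm that the required ports are actually reachable. The script below is a minimal sketch of my own, not a VIC tool; the function names port_open and report are hypothetical, and it assumes bash (for its /dev/tcp pseudo-device) and the coreutils timeout command. It only tests connectivity from the machine you run it on, so it is useful for the inbound 443 rule when run from your workstation against the ESXi host, but not for the outbound 2377 rule, which concerns traffic leaving the ESXi host itself.

```bash
#!/usr/bin/env bash
# Minimal reachability check (a sketch, not part of VIC).
# Assumes bash with /dev/tcp support and the coreutils `timeout` command.

# port_open HOST PORT: exit status 0 if a TCP connection succeeds within 3 seconds
port_open() {
  timeout 3 bash -c "exec 3<>/dev/tcp/$1/$2" 2>/dev/null
}

# report HOST PORT LABEL: print one status line for the given endpoint
report() {
  if port_open "$1" "$2"; then
    echo "OK      $3 ($1:$2)"
  else
    echo "BLOCKED $3 ($1:$2)"
  fi
}

# Example (IP address from this post; replace it with your ESXi host):
#   report 192.168.207.130 443 "ESXi HTTPS for datastore uploads/downloads"
```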

vSphere

- vCenter Server 6.0 or 6.5, managing a cluster of ESXi 6.0 or 6.5 hosts, with VMware vSphere Distributed Resource Scheduler (DRS) enabled, OR
- vCenter Server 6.0 or 6.5, managing one or more standalone ESXi 6.0 or 6.5 hosts, OR
- A standalone ESXi 6.0 or 6.5 host that is not managed by a vCenter Server instance, AND
- A vSphere Enterprise Plus license.

Depending on the type of vSphere environment you’re deploying to, different requirements must be met. You’ll find these listed under the Environment Prerequisites for VCH Deployment section of the installation guide.

vSphere Integrated Containers Installation

It's time to find out how to install vSphere Integrated Containers. In this walkthrough, you'll learn how to set up vSphere Integrated Containers on ESXi 6.5 and use the Docker client to create your first container. Needless to say, remember to replace any IP addresses, user accounts, resource names, and other values shown here with your own!

Step 1 – Install ESXi 6.5. You'll need a datastore of, say, 10 GB and a standard virtual switch set up.

Step 2 – Download the vSphere Integrated Containers Engine.

If you don't have one, sign up for an account at my.vmware.com and download the VMware vSphere Integrated Containers Engine bundle from here. Extract the archive to a folder on your workstation. I'll be using Windows for this step.

Figure 1 – Downloading the VMware vSphere Integrated Containers Engine from my.vmware.com

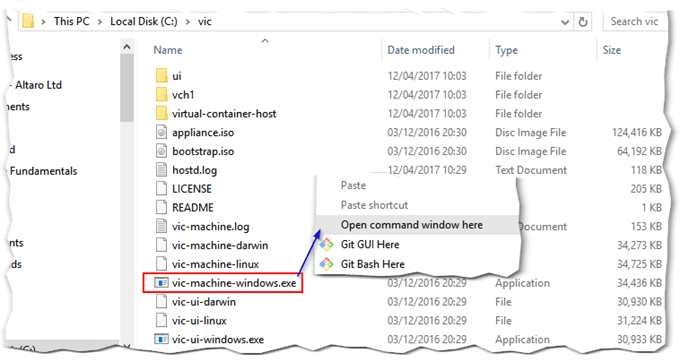

Step 3 – Using vic-machine

vic-machine is the command-line tool used to deploy VCHs to vSphere. It can also be used to delete VCH instances, among other things.

Open a command prompt and switch to the folder holding the extracted contents. Look for the vic-machine-windows.exe file; we will use it to install the virtual container host (VCH) on ESXi. The command-line tool is also available for Linux and Mac as vic-machine-linux and vic-machine-darwin, respectively, either of which you'll have to copy over to your OS of choice.

Figure 2 – Running vic-machine on Windows
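If you would rather run vic-machine from Linux or macOS, a small helper can pick the right binary for the current OS. This is a sketch under my own assumptions: the function name vic_machine_binary is hypothetical, and mapping MINGW, MSYS, and CYGWIN to the Windows build assumes a Git Bash or Cygwin shell on Windows. Only the three binary names come from the bundle itself.

```bash
#!/usr/bin/env bash
# Sketch: choose the vic-machine binary that matches the local OS.
# Binary names are from the VIC engine bundle; the uname mapping is an assumption.

# vic_machine_binary [OS_NAME]: print the binary name; OS_NAME defaults to `uname -s`
vic_machine_binary() {
  local os="${1:-$(uname -s)}"
  case "$os" in
    Linux)                echo "vic-machine-linux" ;;
    Darwin)               echo "vic-machine-darwin" ;;
    MINGW*|MSYS*|CYGWIN*) echo "vic-machine-windows.exe" ;;
    *)                    echo "unsupported OS: $os" >&2; return 1 ;;
  esac
}
```

On a Linux workstation, for example, "./$(vic_machine_binary)" resolves to ./vic-machine-linux, which you may first need to make executable with chmod +x.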

Step 4 – Create the Virtual Container Host (VCH)

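First, run the command below to get hold of the ESXi host's certificate thumbprint. Since no thumbprint is supplied yet, vic-machine cannot verify the host's certificate, so it stops and reports the thumbprint instead. As arguments, you also need to specify the ESXi host's IP address (--target), a datastore name (--image-store), and a user (--user). I've used root, the password for which can be included as an argument or simply typed in at the console. The --no-tls option disables TLS on the VCH's Docker API endpoint, which is acceptable in a lab but not recommended for production.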

vic-machine-windows create --target 192.168.207.130 --user root --image-store iSCSI-DS2 --no-tls

Figure 3 – Obtaining the ESXi host’s certificate thumbprint

Now that we have the thumbprint, we can re-run the same command but with the added thumbprint argument as follows:

vic-machine-windows create --target 192.168.207.130 --user root --image-store iSCSI-DS2 --no-tls --thumbprint 80:E6:F9:15:3B:E8:E1:99:18:17:6A:A8:AD:5C:3E:AF:FF:AB:B8:35
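As an alternative to copying the thumbprint from the vic-machine output, you can read it directly with OpenSSL. The sketch below assumes the openssl command-line tool is installed and that the ESXi host serves its certificate on port 443; the function names are hypothetical. The format check simply expects 20 colon-separated hex byte pairs, which is what a SHA-1 fingerprint such as the one above looks like.

```bash
#!/usr/bin/env bash
# Sketch: obtain and sanity-check an ESXi certificate thumbprint with OpenSSL.
# Assumes the openssl CLI is installed; function names are illustrative only.

# strip_fingerprint_label: turn "SHA1 Fingerprint=AA:BB:..." (on stdin) into "AA:BB:..."
strip_fingerprint_label() {
  local line
  read -r line
  printf '%s\n' "${line#*=}"
}

# fetch_thumbprint HOST: print the SHA-1 fingerprint of the certificate served on HOST:443
fetch_thumbprint() {
  echo | openssl s_client -connect "$1:443" 2>/dev/null \
    | openssl x509 -noout -fingerprint -sha1 \
    | strip_fingerprint_label
}

# is_thumbprint STRING: exit status 0 if STRING is 20 colon-separated hex byte pairs
is_thumbprint() {
  printf '%s\n' "$1" | grep -Eq '^([0-9A-Fa-f]{2}:){19}[0-9A-Fa-f]{2}$'
}

# Example (ESXi address from this post):
#   tp="$(fetch_thumbprint 192.168.207.130)"
#   is_thumbprint "$tp" && echo "Thumbprint: $tp"
```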

Make sure that DHCP is working, since the VCH will try to acquire an IP address while it boots up. If all the requirements have been met, your output should look like that in Fig. 4. Label 1 indicates the stage at which the installer checks whether the VCH has acquired an IP address; if it has not, the process fails and halts.

The information labelled 2 to 5 tells us that the VCH was successfully deployed. Label 3 highlights an environment variable that you can optionally set on the machine running the Docker client. This removes the need to type in the Docker endpoint IP:port combination every time you run a Docker command (see below).

Figure 4 – Creating a virtual container host using vic-machine

In Fig. 5, I simply verified that the VCH is reachable over port 2378, as per the URL labelled 2 in Fig. 4 above. The VCH admin portal also reports health status and provides access to a number of log files.

Figure 5 – The admin portal for VCH
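The same check can be scripted from the command line. This sketch assumes curl is installed and uses -k to skip certificate verification, on the assumption that the portal presents a self-signed certificate in a lab setup like this one; the function names are hypothetical.

```bash
#!/usr/bin/env bash
# Sketch: confirm the VCH admin portal answers over HTTPS on port 2378.
# Assumes curl is installed; -k skips certificate checks (assumed self-signed cert).

# vch_admin_url VCH_IP: print the admin portal URL for a VCH
vch_admin_url() {
  printf 'https://%s:2378\n' "$1"
}

# check_vch_portal VCH_IP: print the HTTP status code curl receives ("000" if unreachable)
check_vch_portal() {
  curl -k -s -o /dev/null -w '%{http_code}' --max-time 5 "$(vch_admin_url "$1")"
}

# Example (VCH address from this post):
#   check_vch_portal 192.168.207.131
```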

From an ESXi perspective, you should see a new VM called virtual-container-host listed in the inventory.

Figure 6 – A VCH deployed as a VM on ESXi 6.5

Step 5 – Set up a Docker client

I am running the Docker client on a CentOS 7 VM. You can follow the instructions outlined below to install Docker CE on CentOS. The procedure installs everything required to host and manage Docker containers on CentOS. This, of course, includes the server side of Docker, should you want to learn more about Docker in general. Today, however, our focus is on VIC, so the Docker client is all we need to be concerned with.

Note 1: If you're running 64-bit Windows 10 Pro, you can download the client from here. I'm not, so I opted for Linux, which is more fun anyway!

Note 2: The Docker client is simply a command-line tool used to create and manage Docker containers amongst other things.

To set up Docker on CentOS 7 (64-bit), run the following commands. The procedure first removes any previous instances of Docker, installs yum-utils (which provides yum-config-manager), and then reinstalls Docker from scratch. Prefix each command with sudo if you're logged in with a non-root user account.

yum remove docker docker-common container-selinux docker-selinux docker-engine

yum install -y yum-utils

yum-config-manager --add-repo https://download.docker.com/linux/centos/docker-ce.repo

yum makecache fast

yum install docker-ce

Step 6 – Configure the Docker client environment

Next, we need to set the correct Docker client API version to match that used by the vSphere Integrated Containers Engine. To do this, just run the following:

export DOCKER_API_VERSION=1.23

Optionally, set the DOCKER_HOST variable as follows. If omitted, you must add -H <Docker IP:Port> to every Docker command you run.

export DOCKER_HOST=192.168.207.131:2375

In Fig. 7, I've set the correct API version and run docker info to verify that the Docker endpoint provided by the VCH is reachable.

Figure 7 – Setting Docker environment variables on Centos
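To avoid retyping these exports in every new session, you could generate them with a small function and either eval the result or append it to your shell profile. This is a sketch: vch_docker_env is a hypothetical name, and its defaults (port 2375 and API version 1.23) are simply the values used in this post.

```bash
#!/usr/bin/env bash
# Sketch: print the Docker client settings for a VCH as export statements.
# Defaults match the values used in this post; adjust them for your VIC version.

# vch_docker_env VCH_IP [PORT] [API_VERSION]
vch_docker_env() {
  local vch_ip="$1" port="${2:-2375}" api="${3:-1.23}"
  printf 'export DOCKER_HOST=%s:%s\n' "$vch_ip" "$port"
  printf 'export DOCKER_API_VERSION=%s\n' "$api"
}

# Load into the current shell:
#   eval "$(vch_docker_env 192.168.207.131)"
#   docker info
# Persist for future logins:
#   vch_docker_env 192.168.207.131 >> ~/.bashrc
```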

Step 7 – Create your first container

As an example, I've deployed a container running the nginx web server. Note that the run command automatically connects to Docker Hub (the repository) and, if the image isn't already available, pulls down the corresponding Docker image, which in turn is used to create the container. The -p 80:80 argument tells the Docker endpoint to publish port 80 for the container, which I've named nginx1 using --name. The nginx at the end of the command is the name of the image to run, not a search term.

docker -H 192.168.207.131:2375 run -d -p 80:80 --name nginx1 nginx

Figure 8 – Creating and running an nginx container

Fig. 9 shows that the container is in fact created as a VM.

Figure 9 – A container running as a VM on ESXi 6.5

Step 8 – Verify access to the service published by the container

I should now be able to access the landing page of the nginx web server running in the container by browsing to the VCH's IP address on port 80. Success!

Figure 10 – nginx web server running on a container accessed via a published port
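If you'd rather verify from a shell than a browser, the sketch below fetches the page and looks for the title of nginx's default welcome page. It assumes curl is installed on the Docker client VM and that port 80 is published on the VCH as shown in Step 7; the function names are hypothetical.

```bash
#!/usr/bin/env bash
# Sketch: check that the published port serves nginx's default welcome page.
# Assumes curl is installed and port 80 is published on the VCH.

# is_nginx_welcome: exit status 0 if the HTML on stdin contains the default page title
is_nginx_welcome() {
  grep -q "Welcome to nginx!"
}

# check_nginx VCH_IP: print a verdict for http://VCH_IP:80/
check_nginx() {
  if curl -s --max-time 5 "http://$1:80/" | is_nginx_welcome; then
    echo "nginx is serving its default page on $1"
  else
    echo "no nginx welcome page on $1" >&2
    return 1
  fi
}

# Example (VCH address from this post):
#   check_nginx 192.168.207.131
```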

You can find more details on how to run workloads in our dedicated blog.

That's all there is to it. The same procedure applies when deploying a VCH to vCenter, the only difference being that vApps are used to group the VCH and its containers. From there on, it's really a matter of familiarizing yourself with Docker concepts, command-line arguments, and so on. Here are a couple of examples of how to use the Docker client.

Ex. 1 – List the running containers managed by the VCH, along with any published network ports.

docker -H 192.168.207.131:2375 ps

Ex. 2 – Restart the nginx container. This command omits -H, so it assumes DOCKER_HOST was set as in Step 6.

docker restart nginx1
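If you manage more than one VCH, a global DOCKER_HOST can get in the way. A small wrapper function is one alternative; in this sketch, VCH and vch_docker are hypothetical names, while ps, restart, stop, and rm are standard Docker client commands.

```bash
#!/usr/bin/env bash
# Sketch: send every Docker command to one VCH without exporting DOCKER_HOST.
# VCH (a shell variable holding <ip>:<port>) and vch_docker are illustrative names.

vch_docker() {
  docker -H "${VCH:?set VCH to <ip>:<port>, e.g. 192.168.207.131:2375}" "$@"
}

# Examples equivalent to Ex. 1 and Ex. 2, plus cleanup of the nginx1 container:
#   VCH=192.168.207.131:2375
#   vch_docker ps
#   vch_docker restart nginx1
#   vch_docker stop nginx1 && vch_docker rm nginx1
```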


Conclusion

vSphere Integrated Containers is VMware's novel method of deploying containers. It gives vSphere administrators the edge, as it allows container provisioning within the datacenter using existing virtualization platforms. Additionally, since these containers are in fact virtual machines, they benefit from vSphere features such as DRS, HA, and vMotion, making for a more robust containerization platform, something lacking in traditional models.

I will definitely keep an eye on future developments in this area, so I invite you to keep watching this space for new posts.
