
This article runs down the hardware requirements for Storage Spaces Direct. Since Storage Spaces first shipped with Windows Server 2012, Microsoft's strategy regarding supported hardware has changed considerably.


In the first attempt, Microsoft gave customers a wide range of options to design the hardware part of the solution themselves. While this allowed customers to build a Storage Spaces cluster out of scrap or desktop equipment, to be honest, it also produced many non-functional clusters during that period.

After that phase, with the release of Windows Server 2016, Microsoft decided to support only validated system configurations from ODMs or OEMs and to drop support for self-built systems. For good reason!

Storage Spaces Direct Hardware Requirements

Let’s get into the specific hardware requirements.

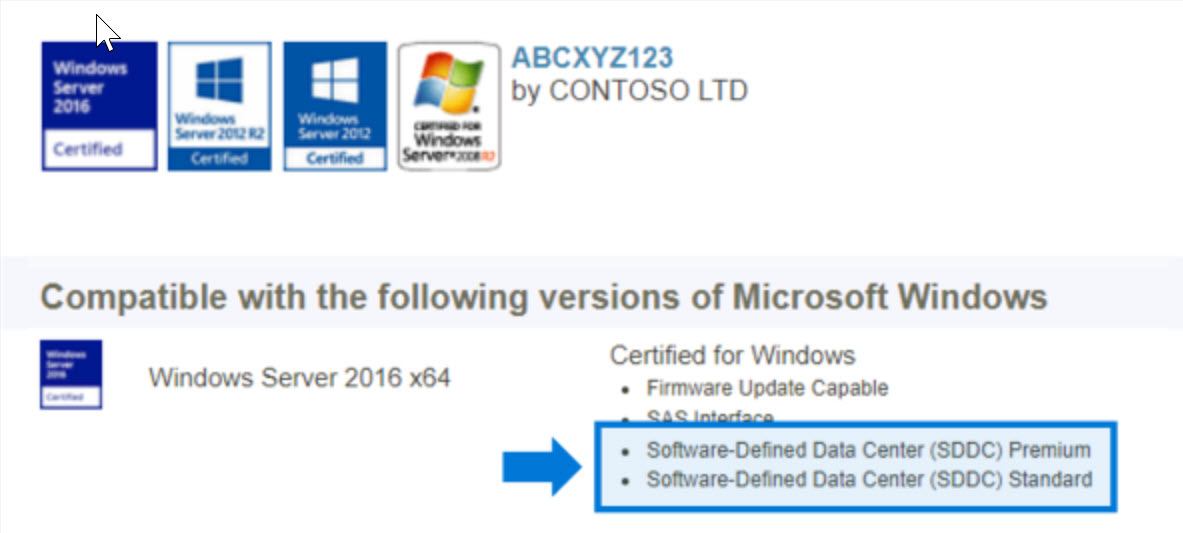

First off, every driver, device or component used for Storage Spaces Direct needs to be “Software-Defined Datacenter” compatible and also be supported for Windows Server 2016 by Microsoft.

Servers

- Minimum of 2 servers, maximum of 16 servers

- Recommended that all servers be the same manufacturer and model

CPU

- Intel Nehalem or later compatible processor; or

- AMD EPYC or later compatible processor

Memory

- Memory for Windows Server, VMs, and other apps or workloads; plus

- 4 GB of RAM per terabyte (TB) of cache drive capacity on each server, for Storage Spaces Direct metadata
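As a rough sizing aid, the memory rule above can be written down in a few lines of Python. This is only a sketch: the function names and the example figures (256 GB for the OS and VMs, two 2 TB cache drives) are hypothetical and not taken from any Microsoft tool.

```python
def s2d_metadata_ram_gb(cache_tb_per_server: float, gb_per_cache_tb: float = 4.0) -> float:
    """RAM reserved for Storage Spaces Direct metadata on one server.

    Applies the rule quoted above: 4 GB of RAM per TB of cache drive capacity.
    """
    return cache_tb_per_server * gb_per_cache_tb


def total_ram_gb(os_and_workload_gb: float, cache_tb_per_server: float) -> float:
    """Memory for Windows Server, VMs, and apps, plus the S2D metadata share."""
    return os_and_workload_gb + s2d_metadata_ram_gb(cache_tb_per_server)


if __name__ == "__main__":
    # Hypothetical server: 256 GB for OS and VMs, two 2 TB cache drives.
    cache_tb = 2 * 2.0
    print(f"S2D metadata RAM: {s2d_metadata_ram_gb(cache_tb):.1f} GB")
    print(f"Total RAM to plan for: {total_ram_gb(256, cache_tb):.1f} GB")
```

The result is a lower bound per server; your hardware vendor's sizing should still have the final word.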

Boot

- Any boot device supported by Windows Server, which now includes SATADOM

- RAID 1 mirror is not required, but is supported for boot

- Recommended: 200 GB minimum size

Networking

Minimum (for small scale 2-3 node)

- 10 Gbps network interface

- Direct-connect (switchless) is supported with 2 nodes

Recommended (for high performance, at scale, or deployments of 4+ nodes)

- NICs that are remote direct memory access (RDMA) capable, iWARP (recommended) or RoCE

- Two or more NICs for redundancy and performance

- 25 Gbps network interface or higher
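The networking lists above can be turned into a quick sanity check for a planned design. The following is a minimal sketch under stated assumptions: `NetworkPlan` and `review_network` are hypothetical names, and the split into "errors" (below the minimum) and "warnings" (below the recommendation) is my own reading of the lists, not an official validation tool.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class NetworkPlan:
    """Planned network design for one cluster (hypothetical structure)."""
    nodes: int
    link_gbps: float
    nic_count: int
    rdma: bool
    switchless: bool = False


def review_network(plan: NetworkPlan) -> Tuple[List[str], List[str]]:
    """Return (errors, warnings) for a plan, based on the lists above."""
    errors: List[str] = []
    warnings: List[str] = []

    # Minimum: a 10 Gbps network interface.
    if plan.link_gbps < 10:
        errors.append("link speed is below the 10 Gbps minimum")

    # The list above only mentions switchless for 2-node clusters.
    if plan.switchless and plan.nodes != 2:
        warnings.append("switchless is only listed as supported with 2 nodes")

    # Recommendations for deployments of 4+ nodes.
    if plan.nodes >= 4:
        if not plan.rdma:
            warnings.append("RDMA-capable NICs (iWARP or RoCE) are recommended")
        if plan.nic_count < 2:
            warnings.append("two or more NICs are recommended")
        if plan.link_gbps < 25:
            warnings.append("25 Gbps or faster is recommended")

    return errors, warnings


if __name__ == "__main__":
    plan = NetworkPlan(nodes=4, link_gbps=10, nic_count=1, rdma=False)
    errors, warnings = review_network(plan)
    for message in errors:
        print("ERROR:", message)
    for message in warnings:
        print("WARNING:", message)
```

The recommendations also apply to smaller clusters that need high performance; the sketch only triggers them automatically at 4+ nodes because that is the one condition that can be checked from the node count alone.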

Drives

Storage Spaces Direct works with direct-attached SATA, SAS, or NVMe drives that are physically attached to just one server each. For more help choosing drives, see the Choosing drives topic.

- SATA, SAS, and NVMe (M.2, U.2, and Add-In-Card) drives are all supported

- 512n, 512e, and 4K native drives are all supported

- Solid-state drives must provide power-loss protection

- Same number and types of drives in every server; see Drive symmetry considerations

- Cache devices must be 32 GB or larger

- When using persistent memory devices as cache devices, you must use NVMe or SSD capacity devices (you can’t use HDDs)

- NVMe driver is Microsoft’s in-box or updated NVMe driver

- Recommended: Number of capacity drives is a whole multiple of the number of cache drives

- Recommended: Cache drives should have high write endurance: at least 3 drive-writes-per-day (DWPD) or at least 4 terabytes written (TBW) per day; see Understanding drive writes per day (DWPD), terabytes written (TBW), and the minimum recommended for Storage Spaces Direct
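Several of the drive rules above are simple arithmetic, so a planned layout can be checked before talking to a vendor. The sketch below is illustrative only: `DriveLayout` and `review_drives` are hypothetical names, decimal units (1 TB = 1000 GB) are assumed, and the endurance check treats TB written per day as DWPD multiplied by drive size.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class DriveLayout:
    """Drive layout of one server (hypothetical structure for this sketch)."""
    cache_count: int
    cache_size_gb: float
    cache_dwpd: float
    capacity_count: int


def cache_tb_written_per_day(layout: DriveLayout) -> float:
    """Rated daily writes of one cache drive in TB (assumes 1 TB = 1000 GB)."""
    return layout.cache_dwpd * layout.cache_size_gb / 1000.0


def review_drives(servers: List[DriveLayout]) -> List[str]:
    """Return a list of findings; an empty list means no rule was tripped."""
    findings: List[str] = []

    # Cluster size: minimum of 2 servers, maximum of 16.
    if not 2 <= len(servers) <= 16:
        findings.append("server count is outside the 2-16 range")

    # Drive symmetry: same number and types of drives in every server.
    if len(set(servers)) > 1:
        findings.append("servers do not share the same drive layout")

    for index, layout in enumerate(servers, start=1):
        if layout.cache_count == 0:
            continue  # no cache tier, so the cache rules do not apply
        if layout.cache_size_gb < 32:
            findings.append(f"server {index}: cache devices are smaller than 32 GB")
        if layout.capacity_count % layout.cache_count != 0:
            findings.append(
                f"server {index}: capacity drives are not a whole multiple of cache drives"
            )
        # Endurance: at least 3 DWPD, or at least 4 TB written per day.
        if layout.cache_dwpd < 3 and cache_tb_written_per_day(layout) < 4:
            findings.append(f"server {index}: cache drive write endurance is below the recommendation")

    return findings


if __name__ == "__main__":
    node = DriveLayout(cache_count=2, cache_size_gb=800, cache_dwpd=3, capacity_count=8)
    for finding in review_drives([node, node]) or ["no findings"]:
        print(finding)
```

Rules that cannot be derived from counts and sizes, such as power-loss protection or the NVMe driver in use, are deliberately left out and still need to be confirmed against the vendor's data sheets.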

You can find more hardware information, including details about supported disk configurations, at Microsoft Docs.

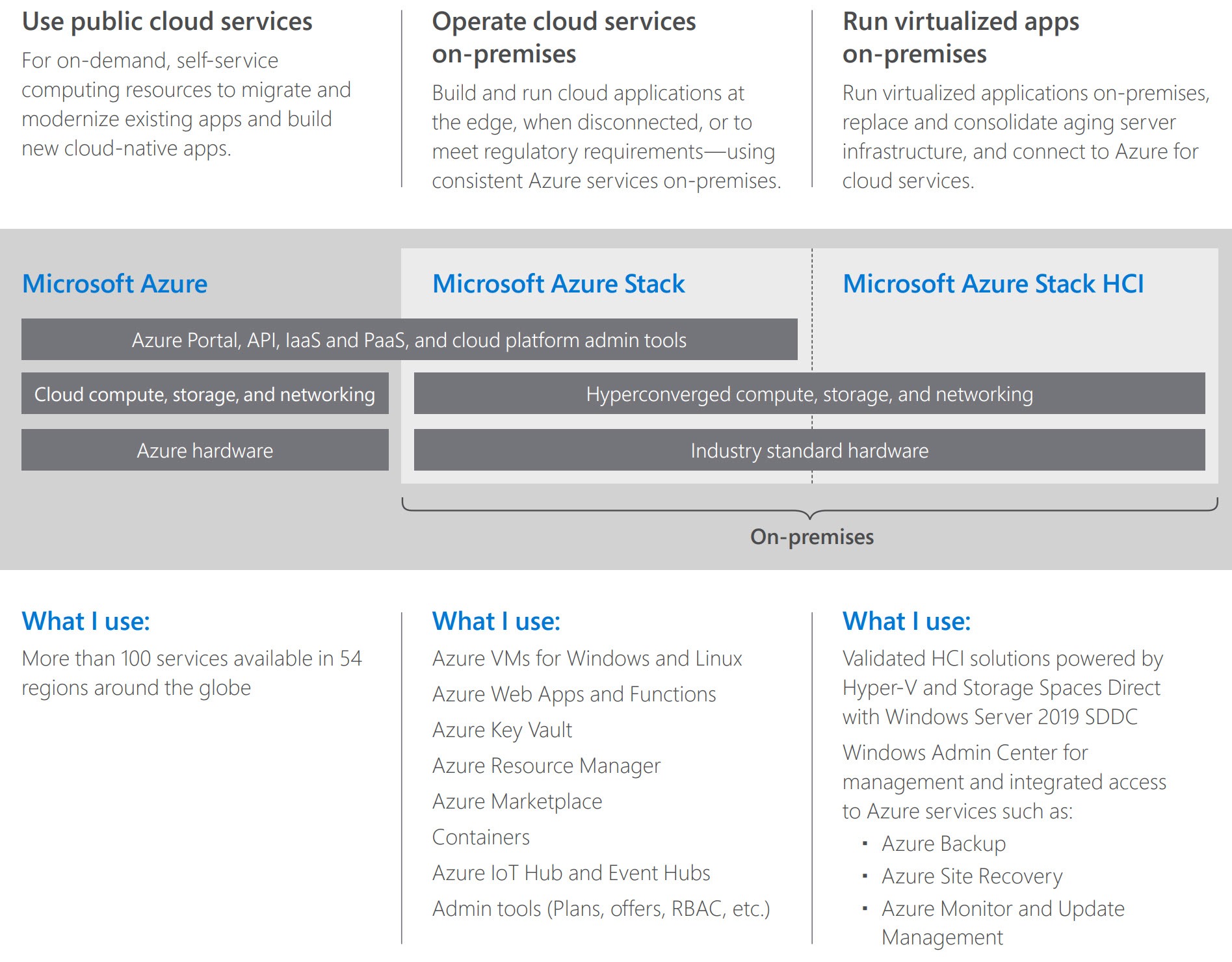

Azure Stack HCI (Hyper-Converged Infrastructure)

To make it easier for customers to choose among vendors and systems, Microsoft now groups supported Storage Spaces and hyperconverged systems under the label Azure Stack HCI. Despite the name Azure in the label, you do not need to buy a full-blown Azure Stack deployment in order to use Storage Spaces. With Windows Server 2019, that label has made it much easier to find supported appliances for Storage Spaces and hyperconverged deployments.

According to Microsoft’s guidance, Azure Stack HCI is the starting point for the next-generation Software-Defined Datacenter, with Azure (the public cloud) at the end of the road.

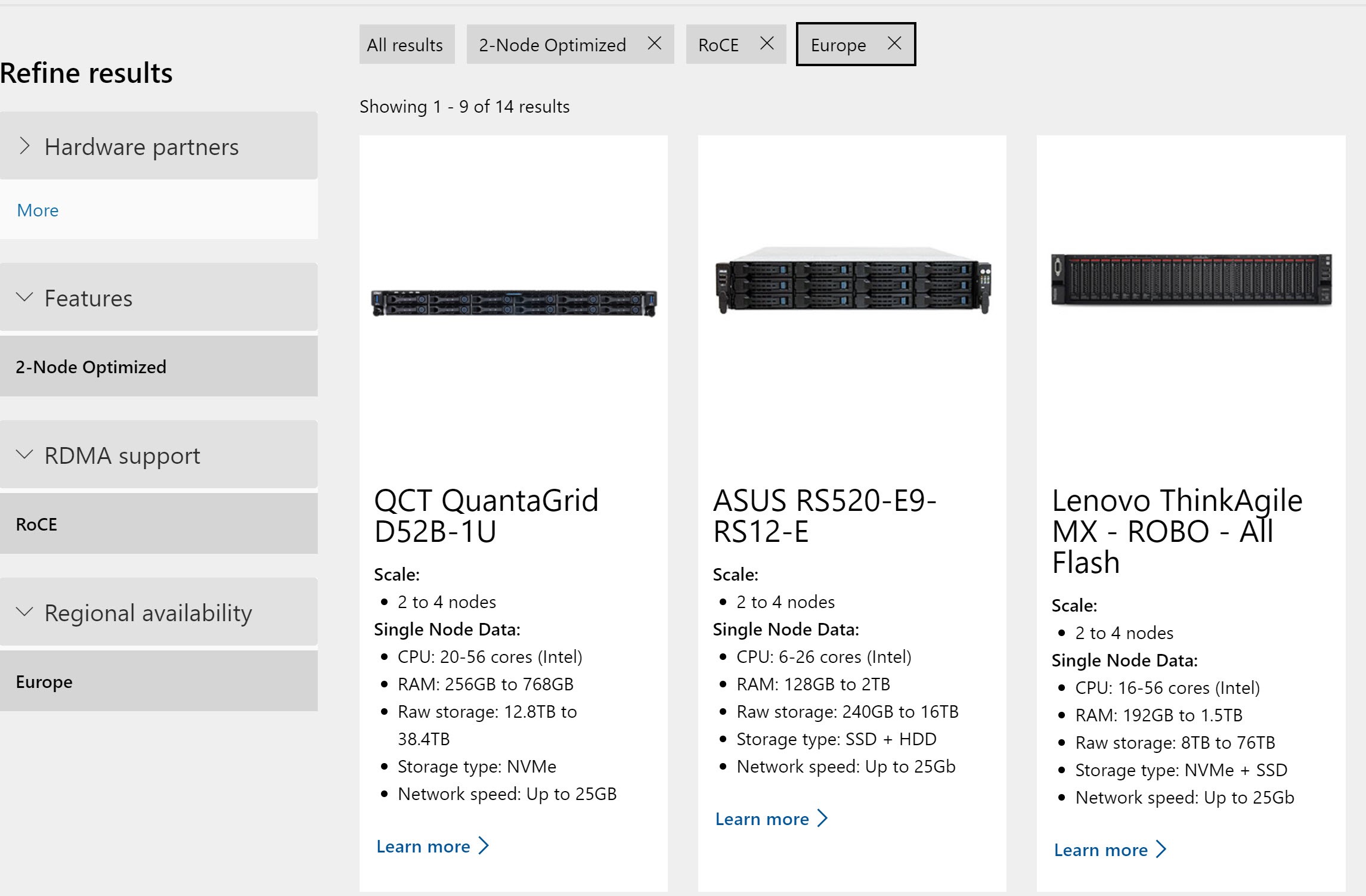

To make it easier to find the right vendor and system for you, Microsoft has published the Azure Stack HCI catalog.

There you can filter and search the listed systems by your organization’s requirements, which makes it easy to see which vendors offer the hardware you are targeting.

For example, I was looking for a system with the following requirements for a customer branch office:

- Regionally available in Europe

- 2-Node Optimized

- RDMA RoCE

As you can see in the screenshot, I got a result of 14 possible systems. Now I can contact the vendors for additional information and sizing.

When it comes to sizing, you should work together with your hardware vendor to get the best possible configuration. Disk sizes, types, etc. still differ from vendor to vendor and system to system.

To help you validate a configuration, or to get a first idea of what you need, Microsoft has published a guide in its documentation named Planning volumes in Storage Spaces Direct. Additionally, one of the Program Managers for S2D, Cosmos Darwin, has published a small calculator.

Wrap Up

This blog post should have given you a better idea of what kind of hardware you’ll need to get your hands on if you want to use S2D. Getting the hardware right is a critical part of putting together a successful S2D deployment. In the next part of this series on Storage Spaces Direct, we will focus more on architecture with S2D and also on the competitors on the market.

Thanks for reading!
