
In the earlier parts of this series, we got an overview of Storage Spaces Direct (S2D) and the technologies behind it. In today’s segment, we’re going to speak about Converged and Hyperconverged Clustering, the beginnings of S2D, and licensing. Let’s get going!

Where Did S2D Come From?

S2D is a good example of Microsoft’s Cloud First Strategy in development. When Microsoft was starting with Azure Infrastructure as a Service, there was no permanent storage for virtual machines. Persistent storage for Azure Virtual Machines was introduced later in 2013/14. Platform as a Service (PaaS) and Software as a Service (SaaS) offerings replicated their storage via application clustering but not via storage replication.

To stay current with competitors, Microsoft needed a solution that could work with cheap hardware and without expensive Storage Area Network (SAN) technology. The solution was to integrate the storage into the same server farms running the VMs. At the beginning of S2D, Microsoft used shared JBODs via Storage Spaces in Windows Server 2012/R2. In later developments, Microsoft moved away from shared JBODs and used only local storage disks. Since then, all stored information is replicated within a storage pool that runs on different servers within a region inside of Azure.

To go a bit further, with the Open Compute Project Olympus, Microsoft introduced a new generation of cloud servers. You can find more information in the following announcement: https://azure.microsoft.com/de-de/blog/microsoft-reimagines-open-source-cloud-hardware/. It's also worth noting that Microsoft is investing heavily in storage disks and SSDs. With the Open Compute Project Denali, Microsoft introduced its first self-designed SSDs, which will be used in the Microsoft Cloud in the future: https://azure.microsoft.com/de-de/blog/project-denali-to-define-flexible-ssds-for-cloud-scale-applications/

When Microsoft was starting with Azure (and then again with S2D in Azure), they weren't working with the Open Compute Project. They started with the most common customer hardware supplied by vendors like Dell, HP, and Lenovo, for example the Dell PowerEdge R720XD and PowerVault MD3060e or even the PowerEdge-C Series. These systems were custom-built for Microsoft and other cloud providers. To be more efficient in storage, Microsoft today does not use any SAN technology. Instead, it uses a purely converged infrastructure with software-defined storage and networking.

In this next part, we will look at the differences between converged and hyper-converged infrastructures. You will get some pros and cons of both, along with some usage scenarios. Let's take a look!

Converged vs. Hyper-Converged Infrastructure

When you think about converged or hyper-converged infrastructure you basically can think about a box where you drop in your classic infrastructure components like SAN, Compute, Hypervisor, and Network.

When looking at S2D and Microsoft's concept, you have two kinds of converged infrastructure. The first is the converged infrastructure itself, which consists of two kinds of systems:

- For Storage, you leverage Scale-Out File Server with S2D

- For Virtualization, you use, for example, Hyper-V, which uses the Scale-Out File Server as a storage device

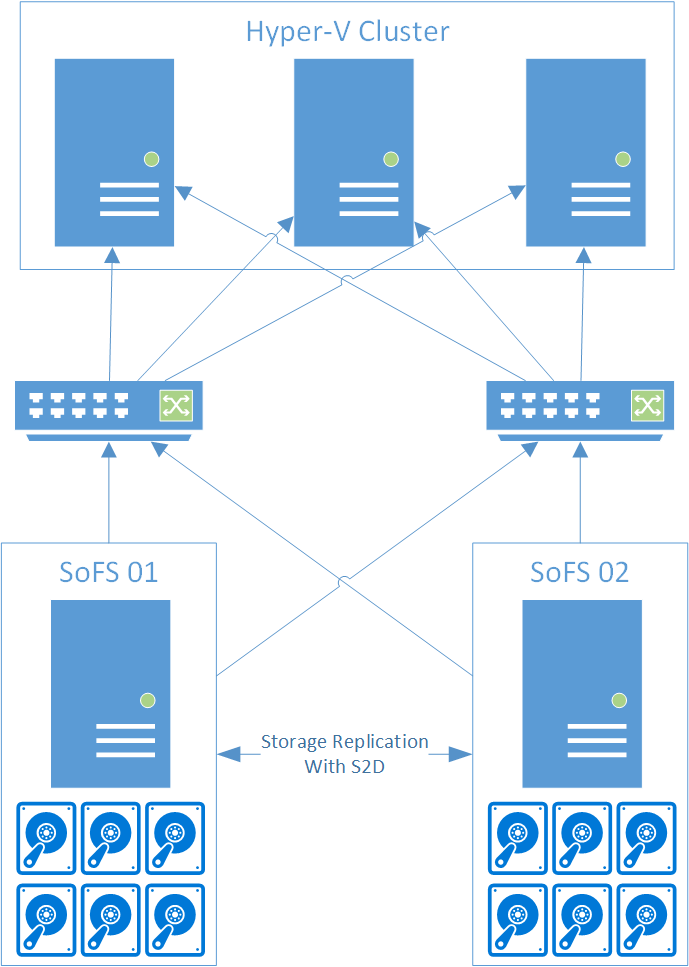

In the following picture, you’ll see an example of converged infrastructure.

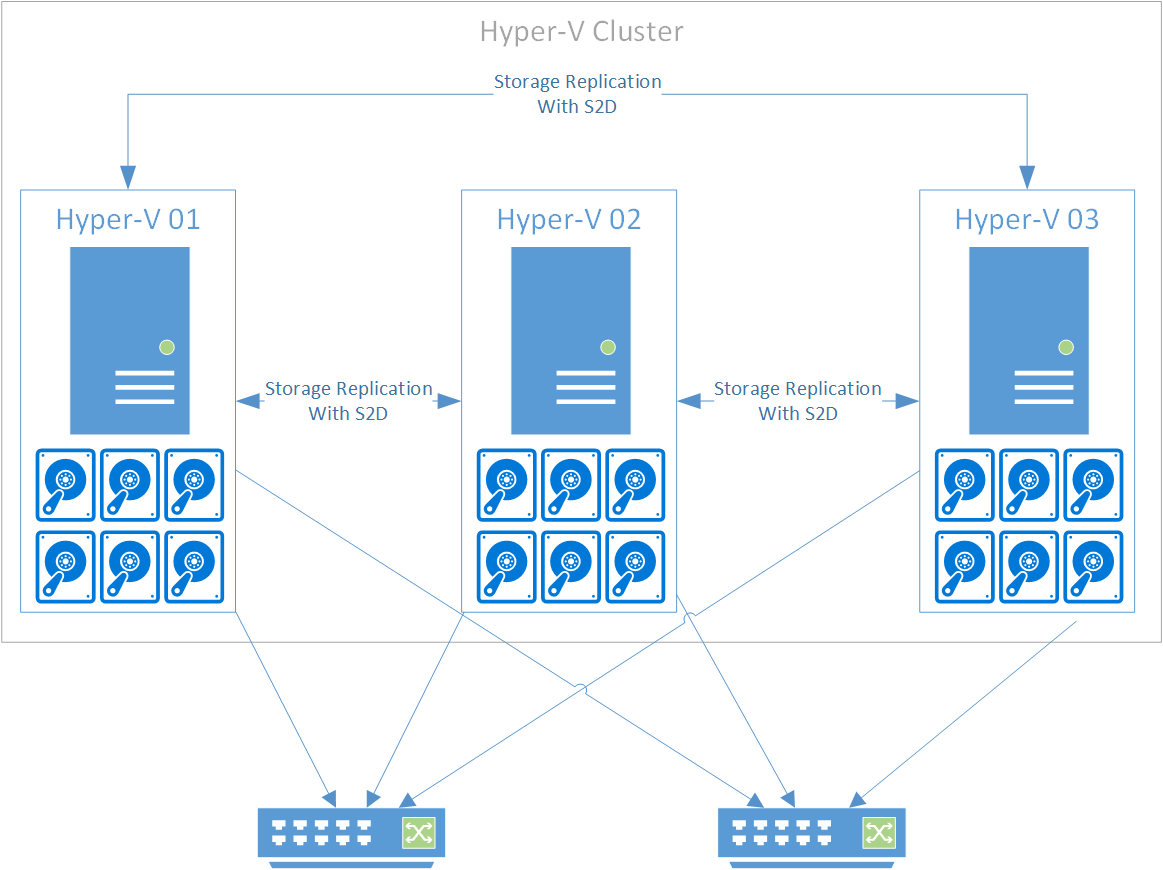

In the other option, you have what is called hyper-converged infrastructure. Here you put everything in one box, including the hypervisor and the necessary network and storage components. The picture below shows an example.

Both architectures have their up- and downsides and usage scenarios.

Hyper-Converged

| Upside | Downside |
|---|---|
| Very small hardware footprint and fewer systems needed | All systems need to be equal in hardware sizing and equipment |
| Reduced administrative effort to manage the systems | If hardware needs to be upgraded (e.g. Storage or Memory), all systems need to be upgraded at the same time |
| Great for workloads which have an equal usage of storage, memory and compute | If you started with two small servers, you may reach the upgrade limit very quickly. So you must plan properly for a scale up or scale out scenario. |
| | Only one Hyperconverged Cluster at a time |

Converged

| Upside | Downside |
|---|---|
| Very flexible because Hypervisors and Storage nodes can be scaled separately. | Bigger Hardware Footprint |
| Easier to deploy and to plan when adding new nodes or clusters. | More Systems to manage |
| One Storage Cluster and Hypervisor Clusters from different vendors possible. Hypervisor Clusters can use Storage Clusters as a resource for storage. | |

Now you may ask when you should use converged and when to use hyper-converged infrastructure. Let me explain some scenarios where you would use one over the other.

Hyper-converged (again, everything in one box) is a perfect fit for branch offices or locations like factories, where there is a need for certain IT services but you want to keep a small hardware footprint. You can also use hyper-converged infrastructure as a frontend for hybrid cloud services like Azure Files or Azure SQL to reduce latency and optimize specific workloads on-premises.

Converged infrastructure, by contrast, is the better choice when you have a bigger hardware footprint, for example when you are hosting lots of applications on-prem or if you are a hosting service provider or similar. In those scenarios, you often have workloads which do not scale equally when it comes to CPU, Memory, Storage or Network. Here you can scale the different parts of the infrastructure individually. If you need more Memory for your virtualization clusters, you can scale the Memory of your hosts without touching the storage. If you need to increase storage capacity or throughput, you can add more storage hosts, disks, or even more network cards to the storage nodes.
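To make the scaling difference a bit more tangible, here is a minimal Python sketch of a toy sizing model. It is not a Microsoft sizing tool: the function names `hyperconverged_nodes` and `converged_nodes`, the per-node capacities, and the example workload are all assumptions made up for illustration. The model also ignores CPU, network, and resiliency overhead entirely.

```python
import math


def hyperconverged_nodes(mem_gb, storage_tb, node_mem_gb, node_storage_tb):
    """Every node brings both memory and storage, so whichever resource
    needs more nodes dictates the size of the whole cluster."""
    by_memory = math.ceil(mem_gb / node_mem_gb)
    by_storage = math.ceil(storage_tb / node_storage_tb)
    return max(by_memory, by_storage)


def converged_nodes(mem_gb, storage_tb, host_mem_gb, storage_node_tb):
    """Hypervisor hosts and storage nodes are counted independently.
    Returns a tuple: (hypervisor hosts, storage nodes)."""
    hosts = math.ceil(mem_gb / host_mem_gb)
    storage = math.ceil(storage_tb / storage_node_tb)
    return hosts, storage


# Hypothetical storage-heavy workload: 1024 GB of memory, 400 TB of storage.
# Assumed node sizes: 512 GB of memory and 40 TB of storage per node.
print(hyperconverged_nodes(1024, 400, node_mem_gb=512, node_storage_tb=40))
print(converged_nodes(1024, 400, host_mem_gb=512, storage_node_tb=40))
```

With these made-up numbers, storage drives the hyper-converged cluster size, so every node carries memory that the workload does not need. In the converged case only the hypervisor hosts are sized for memory, and the storage nodes can grow on their own.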

The Converged route is also great when you have a mix of virtualization technologies. You can use S2D with iSCSI, SMB or NFS from a Windows Server, which also gives you the option to connect non-Microsoft Hypervisors. With that, you have a very flexible infrastructure to enable all kinds of workloads.

How to License Storage Spaces Direct

The licensing of Storage Spaces Direct is pretty easy. To use Storage Spaces Direct you need to have Windows Server Datacenter Edition.

Source: https://docs.microsoft.com/en-us/windows-server/get-started-19/editions-comparison-19

Now you might think that's pretty expensive for a feature you only want to use at a branch location. That is partly right, but not one hundred percent correct, because you get something else on top of the Datacenter license.

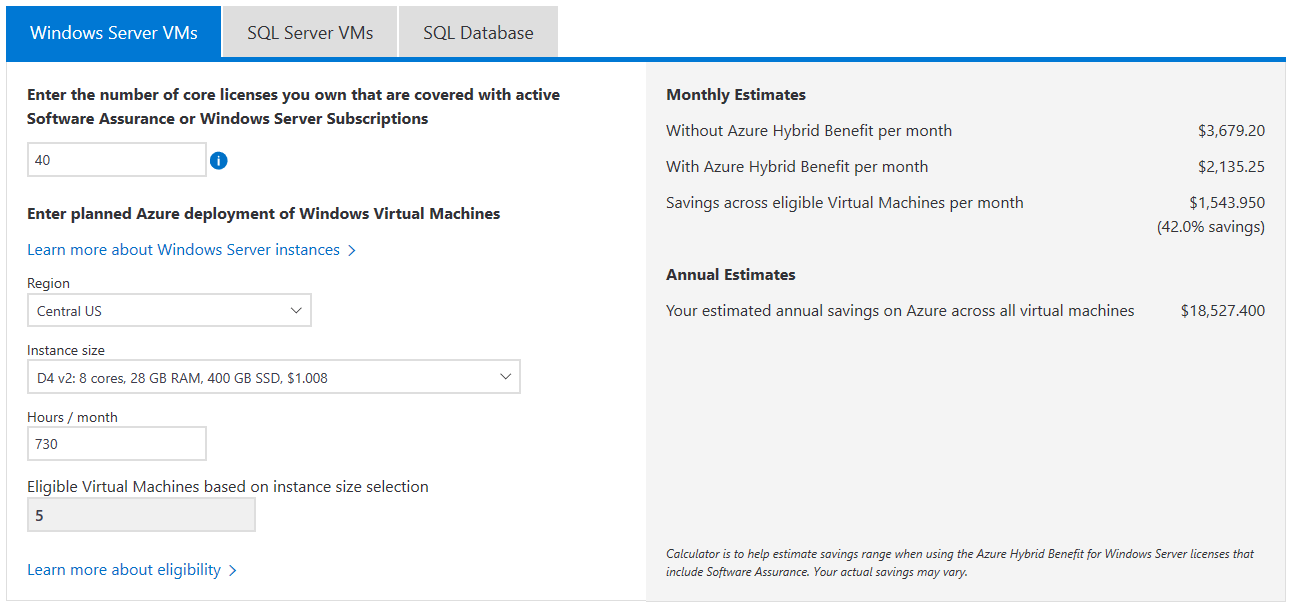

When you use Windows Server 2019 with Software Assurance or a Windows Server Subscription, you have license mobility and Hybrid Use Benefits. Hybrid Use Benefits enable Microsoft customers to use their Windows Server Datacenter Licenses on-prem and in Azure. The Azure Hybrid Benefit helps Microsoft customers save up to 40 percent on virtual machines. Note that the actual saving depends on virtual machine size and region. Windows Server virtual machines in Azure will then have a lower base compute rate, equal to Linux virtual machine rates. If you want to learn more about Hybrid Use Benefits, follow this link: https://azure.microsoft.com/en-us/pricing/hybrid-benefit/

On that page, you will also find a cool cost calculator for the benefits.
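As a rough illustration of where such a saving comes from, the following Python sketch compares a Windows virtual machine rate with the lower base compute rate. The helper `hybrid_benefit_saving` is hypothetical, the hourly prices are placeholders and not real Azure prices, and the 730 hours per month is an assumed approximation. For real numbers, use the official calculator.

```python
def hybrid_benefit_saving(windows_rate, base_rate, hours=730):
    """Return (monthly saving, saving as a fraction of the Windows rate).

    windows_rate: hourly price of the VM including the Windows license
    base_rate:    hourly base compute price (the Linux-equivalent rate)
    hours:        assumed running hours per month
    """
    if windows_rate <= 0 or not 0 <= base_rate <= windows_rate:
        raise ValueError("expected 0 <= base_rate <= windows_rate and windows_rate > 0")
    difference = windows_rate - base_rate
    return difference * hours, difference / windows_rate


# Placeholder prices per hour, chosen only for illustration (NOT real Azure prices)
monthly, fraction = hybrid_benefit_saving(windows_rate=0.20, base_rate=0.12)
print(f"Saving per month: {monthly:.2f} ({fraction:.0%} of the Windows rate)")
```

The placeholder prices were picked so that the result lands near the 40 percent upper bound mentioned above. With other VM sizes or regions the gap between the two rates, and therefore the saving, will differ.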

In the next part of the series, I will write about the hardware requirements and how to design and implement S2D infrastructure.

Wrap-Up

What about you? Have you dug into S2D yet? Have you decided whether Converged or Hyper-Converged infrastructure works better for your organization? We’d love to hear about it with all the nitty-gritty details in the comments section below!

Thanks for reading!


13 thoughts on "3 Important Things You Should Know About Storage Spaces Direct"

Great article.

You list this as a downside to Hyper-V converged:

“Only one Hyperconverged Cluster at a time”

What does that mean exactly? For example I have 4 servers. They are paired servers with the same specs. So I could only create one cluster in our domain for 2 of the servers? My thought was to create two clusters, one for each set of servers.

Hi Robert, I mean that only one set of servers can join a cluster and you cannot mix hardware. Yes, you are correct, you can build multiple clusters, but a cluster will, for example, not be able to use hyperconverged mode to keep resources available outside of their specific cluster. So no cluster-to-cluster live availability. Cheers, Flo

Altaro does not even support S2D so why you are making this kind of blog posts about S2D?

Hi Jussi,

Because Altaro has a high interest in sharing knowledge throughout the community. Altaro also has no primary focus on Azure or Azure Stack, but allows us authors to use their platform to spread the knowledge.

I really like that open approach and would like to see more companies working like that.

Cheers,

Flo