
In the previous parts of this series, our focus was on the basic concepts and theory involved with storage. With this installment, we’ll look at some of the ways you can approach your own storage challenges.

Part 1 – Hyper-V storage fundamentals

Part 2 – Drive Combinations

Part 3 – Connectivity

Part 4 – Formatting and file systems

Part 5 – Practical Storage Designs

Part 6 – How To Connect Storage

Part 7 – Actual Storage Performance

Overview

There is no single right way to design storage for a Hyper-V environment. It’s certainly possible to build a storage solution that uses more than one component. In this way, you can employ high-performance hardware to satisfy the needs of high-performance virtual machines while allowing slower and lower-cost storage equipment to handle your more mundane tasks.

All Internal/Local Storage

If it’s not obvious, this is really only viable for non-clustered systems. There are some third-party tools that allow for storage on a node to serve virtual machines for the cluster, but this goes a long way toward defeating the purpose of clustering: preventing any single node from bringing the cluster down. Some of these tools can also mirror the content of the internal storage in real-time, but the cost of doing so often approaches that of simply using some form of external storage.

In general, I prefer using an installation of the free Hyper-V Server over Windows Server, although the reasoning is a subject for another post. However, something came to my attention during a conversation with Jeff Hicks: if you want to use Microsoft’s deduplication solution and all your VMs are on local storage, you’ll have to use Windows Server. Hyper-V Server does not include this functionality. If your VMs are hosted by another system, then that system’s capabilities will determine your VMs’ storage profile.

A serious consideration for this is VDI. This is a prime application for both Hyper-V Server and deduplication, as the server component needs no licensing and VDI can often get a high degree of savings from deduplication. VDI is also the primary solution that Microsoft’s storage team had in mind when updating deduplication for virtualization loads in Windows Server 2012 R2. If you’re going to be implementing VDI and Microsoft deduplication is desired, consider using a file server on Windows Server 2012 R2 to store the virtual desktops.

Mixing Local and Remote

This is a solution I use on a regular basis. In a cluster, not all virtual machines need high availability protections, and some do well if decoupled from the domain requirements of a cluster. As a prime example, I run domain controllers on local storage. This ensures that if remote storage or connectivity to it is lost, the domain controller will continue to function. Another application this can work for is System Center Virtual Machine Manager 2012 R2, with multiple VMs on separate hosts running SCVMM in high availability mode.

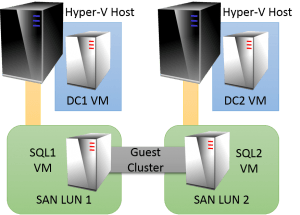

Even if the host isn’t clustered, the same principles apply. You may have a guest clustered SQL Server that runs on separate Hyper-V hosts. You can install local domain controllers on each host but place the SQL Servers on fast external storage devices. As an aside, there are now a number of ways to configure SQL Server for redundancy across two or more guests besides using a guest cluster; this post is not about SQL Server so I will not spend any time on any SQL deployment options. Consult a SQL Server expert source for more details.

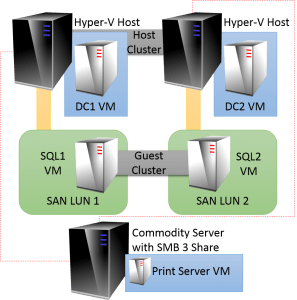

Mixing External Types

Extending on the concepts in the previous section, you can also mix external storage types. For instance, let’s say that you add a standard print server to the above build and decide to create a cluster of the two Hyper-V hosts. There’s no need to force the domain controllers on to shared storage or convert the SQL Servers to highly available VMs. The exception would be if you also convert the locations labeled SAN LUN 1 and/or SAN LUN 2 to cluster disks or Cluster Shared Volumes (CSVs). Even though it will work perfectly well to have a non-highly available virtual machine in a shared location, Microsoft technically doesn’t support that configuration. All you have to do is not add those LUNs to the cluster’s disks and you will remain in a supported configuration. If you do make the LUNs available to the cluster, then you will also need to convert the SQL Server guests to be highly available.
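The support rule in the paragraph above can be expressed as a simple check. What follows is a minimal sketch in Python, not a Microsoft tool: the `VirtualMachine` class, the `unsupported_placements` function, and the inventory names are all hypothetical and exist only to illustrate the rule that a virtual machine stored on a cluster disk or CSV should be highly available.

```python
from dataclasses import dataclass


@dataclass
class VirtualMachine:
    name: str
    storage: str            # label of the location holding the VM's files
    highly_available: bool  # True if the VM is a clustered (HA) role


def unsupported_placements(vms, cluster_storage):
    """Return the names of non-HA VMs stored on cluster disks or CSVs."""
    return [
        vm.name
        for vm in vms
        if vm.storage in cluster_storage and not vm.highly_available
    ]


# Hypothetical inventory modeled on the build described above.
vms = [
    VirtualMachine("DC1", "Host1 local", False),
    VirtualMachine("DC2", "Host2 local", False),
    VirtualMachine("SQL1", "SAN LUN 1", False),
    VirtualMachine("SQL2", "SAN LUN 2", False),
    VirtualMachine("PRINT1", "Print storage", True),
]

# Case 1: the SAN LUNs are NOT added to the cluster's disks.
luns_outside_cluster = unsupported_placements(vms, {"Print storage"})

# Case 2: the SAN LUNs ARE added as cluster disks or CSVs.
luns_inside_cluster = unsupported_placements(
    vms, {"Print storage", "SAN LUN 1", "SAN LUN 2"}
)

print(luns_outside_cluster)
print(luns_inside_cluster)
```

In the first case nothing is flagged. In the second, both SQL Server guests are flagged, which matches the point that they would need to be converted to highly available VMs.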

The print server role can no longer be clustered as of Windows Server 2012. Realistically, this is a good thing. Anyone who’s ever administered a Windows print cluster can tell you that it is a perfectly miserable experience. Starting with Windows Server 2012, the way to make Microsoft’s print spooler highly available is by running it inside a highly available virtual machine. This works out very well, as it’s rare for a print server to use a great deal of compute resources. Print devices are ridiculously slow in comparison to most anything else in the world of computing, so a print server is often able to control a great many devices without straining itself. Also, the spooler service hits a cap of a few thousand print queues, beyond which it simply cannot effectively manage any more. Adding RAM, disk, or CPU to that system will make no difference whatsoever. What that means is that you’ll just never have a print server that demands much from its hardware. So, if your print server must be in a clustered Hyper-V Server environment to be highly available but will never place a serious demand on your disk, why would you want to place it on high speed storage? The only two acceptable answers are, “We have plenty of leftover space on high speed storage,” and, “We don’t want to place it on high speed storage.” Assuming the latter, you could reconfigure the above so that it looks like the following:

Extending the Building Blocks

As you can see, your Hyper-V Server storage can be very flexible. But, you certainly haven’t seen everything. The biggest thing I’d like to highlight here is the way that SMB 3 storage can be expanded. The diagram at the end of the last section shows a single server with a single shared location. There’s nothing inherently wrong with that. But, that one server represents a single point of failure. If it goes down, so does your print server. For a print server, that’s probably not a big deal as long as you can resolve the issue quickly. Since it’s virtualized, you can restore a backup of the VM to internal storage on one of the hosts or to the shared storage as quickly as it takes for the bits to be copied from your backup media. That’s a lot faster than trying to restore a physical print server.

But, even that may not be fast enough. You may want more protection without spending the money necessary for a high-end SAN device with lots of internal redundancy. Fortunately, you’ve got options. For the above scenario, my first choice would be Hyper-V Replica. Have a standalone standby system with inexpensive internal or direct-attached storage. Replicate the print server to it and have it run an active domain controller as well. This can be accomplished with only the cost of the hardware and a single Windows Server Standard Edition license (assuming these are the only two VMs, both are running a Standard Edition of Windows Server, and the host performs no other roles).
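The license count in that parenthetical is simple arithmetic. The sketch below assumes that one Standard Edition license covers two Windows Server guests on a host that performs no other roles, which is what the scenario above implies. It deliberately ignores processor counts and every other licensing term, so treat it as an illustration rather than licensing guidance. The function name and its default are hypothetical.

```python
import math


def standard_licenses_needed(guest_count: int, guests_per_license: int = 2) -> int:
    """Estimate Standard Edition licenses for a host that only runs Hyper-V.

    Assumption: each license covers `guests_per_license` guests.
    Processor counts and other licensing terms are ignored.
    """
    if guest_count < 0:
        raise ValueError("guest_count cannot be negative")
    return math.ceil(guest_count / guests_per_license)


# The standby host above: one replica print server plus one domain controller.
standby_host_licenses = standard_licenses_needed(2)
print(standby_host_licenses)
```

Adding a third guest to the standby host would push the estimate to two licenses under the same assumption.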

You can also use SMB 3/SOFS as a front-end for your SAN devices. The benefit here is that you can expose storage that anyone can use, controlled by familiar NTFS permissions. Virtual machines can be deployed without tapping a storage administrator to provision a new LUN. If you’re in a smaller environment without a dedicated storage admin, you may not use your SAN often enough to feel really comfortable with creating and configuring LUNs every few months when a new deployment need arises.

Summary

The options presented here are just a few ideas to get your design strategy started. This list is by no means all-inclusive, and I doubt that anything that fits in a blog post ever will be. Feel free to leave any other approaches that you’ve discovered in the comments, as they’ll no doubt be useful to others.
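As a rough recap, the placement reasoning used in this post can be condensed into a few lines of Python. This is only a sketch under simplifying assumptions: the function name, the two yes/no inputs, and the tier labels are hypothetical, and a real design would weigh capacity, cost, and supportability as well.

```python
def suggest_storage(needs_ha: bool, high_io: bool) -> str:
    """Suggest a storage tier for a guest from two yes/no questions."""
    if high_io:
        # Demanding guests such as the SQL Servers go on fast external storage.
        return "fast external storage"
    if needs_ha:
        # HA guests with light disk needs, such as the print server,
        # do not need to occupy high speed storage.
        return "lower-cost shared storage"
    # Guests that should keep running if remote storage is lost,
    # such as the domain controllers, can stay on the host.
    return "local storage"


# Hypothetical guests matching the examples in this post.
guests = {
    "domain controller": suggest_storage(needs_ha=False, high_io=False),
    "SQL Server": suggest_storage(needs_ha=False, high_io=True),
    "print server": suggest_storage(needs_ha=True, high_io=False),
}

for guest, tier in guests.items():
    print(f"{guest}: {tier}")
```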

In the sixth part of this series, I’ll jump into some how-tos so you can see how to connect your storage successfully.


4 thoughts on "Storage and Hyper-V Part 5: Practical Designs"

Really a great series of articles! Would like to read about a small Hyper-V Storage “real-world-deployment” in your next part:

2 x Hyper-V Hosts with dual port HBA each and Clustered StorageSpaces / SSD Tiering / Write Back Cache – all the cool stuff 😉

1 x Dual Controller JBOD with HDD & SDD mixed

PS: your comment about Zeus’ thunderbolt in your article “Demistifying Virtualizied Domain Controllers” made my day – thank you 😀

Hi David,

Thanks!

Unfortunately, I don’t have access to a system with DAS HBAs and I’m reluctant to write about anything I don’t have direct knowledge of. The next piece is going to be about making as many different configurations and connections as I can come up with, though.
