These two topics may not seem to have a lot in common, but it turns out that they live and die together. The host agent is a small service that System Center Virtual Machine Manager (VMM) installs on each managed host in order to control it. VMM’s services are pre-defined groupings of templates and guest settings that are intended to ease deployment and management. Agent failures can be frustrating under any circumstances, but they can have dire consequences for VMM’s services.

VMM Agent Upgrade Failures in a Cluster

I discovered this issue while updating VMM 2012 R2 to the latest update rollup. The server update worked fine, but, for whatever reason, the agent update failed on one of the nodes. I didn’t save the error, and when I went back to retrieve it, VMM had wiped it. As I recall, it was neither informative nor useful, so you’re probably not missing out by not having it. The failed update also managed to break the existing agent, so the host was no longer manageable in VMM.

There isn’t much published about repairing broken VMM agents. None of my attempts to redeploy the agent helped, even after uninstalling the existing one. Each attempt to reinstall resulted in:

Error (10429)

An older version of the Virtual Machine Manager management server is installed on SYSTEM.DOMAIN.TLD. The VMM agent on the VMM management server cannot be upgraded independently.

Recommended Action

Remove SYSTEM.DOMAIN.TLD from the Managed Computers view of the VMM console, uninstall the VMM management server from SYSTEM.DOMAIN.TLD, and then add SYSTEM.DOMAIN.TLD back as a host or library server.

For stand-alone Hyper-V hosts, that probably works fine. For a clustered machine, it doesn’t. What I had at this point was a host node with no installed agent and a non-functional host entry in the VMM console. There are no GUI options to remove it from the VMM console and the PowerShell cmdlet didn’t work. There is a VMM option to remove the node from the cluster entirely. The handful of hits I found while searching for an answer indicated that this might be the only option. Because this node is part of a production environment, that option was completely unacceptable.

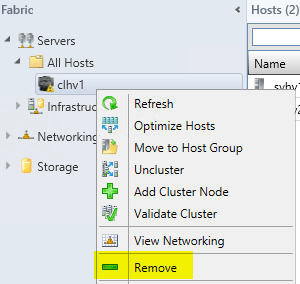

Turns out that the solution was a lot simpler. Sort of. If you’re having the same trouble I did, make sure to read all the way through before you do anything. You have to remove the cluster from the VMM console. It’s an option on the right-click menu:

This operation will remove the VMM agent from the nodes it can reach and remove all entries for all nodes and the cluster from the console. Then, you can try to deploy the agents to the cluster anew. In my case, all the agents deployed without any further problems.

This is not to say that there weren’t casualties.

VMM Services

VMM services are a potentially powerful feature that needs some more time to bake. One nice thing would be if the VMM team would choose another option from the hundreds of thousands of words in the English language to describe them. Talking to other Windows admins without VMM experience or performing Internet searches almost inevitably leads to confusion with the traditional Windows service.

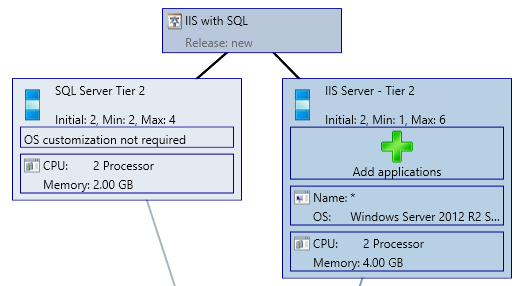

In VMM, you can use one or more VM templates to define a “service”. You can, for instance, use a VM template that has IIS installed and one with SQL server to design a complete web application deployment. This could have a variable number of each VM type to make a redundant configuration. Here’s an example:

Once one of these “services” is designed, you can deploy it as many times as you want. Deployment is quick, and once it’s done, the entire unit can be managed as one. You can add additional members to the defined groups.

Service Limitations

The worst issue with these services is that they are highly inflexible. If I later decide that the above setup needs a third tier, there’s pretty much nothing I can do. You can’t just delete the service either, because doing so also deletes all of the managed VMs in it, instantly. Even if they’re powered up, they’re instantly stopped and destroyed.

This was a problem in our deployment, as we did want to add a new tier to our service. It was in production, and we didn’t see any way to fix it. Turns out that one of our problems became our solution.

Impact of VMM Host Agents on VMM Services

When I removed the Hyper-V cluster from the VMM console and then re-added it, the services were still present, and the VMs that were part of the service were still present, but they were completely disconnected from each other. Any attempt to work with the service resulted in this message:

Error (21990)

The current number of virtual machine instances in the service tier SERVICETIERNAME is not within the valid range between the InstanceMinimumCount and the InstanceMaximumCount.

Recommended Action

Ensure that the number of virtual machine instances is within the valid range for the service tier.
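To make the error text a little more concrete, here is a minimal Python sketch of the kind of range check the message describes. It is not VMM code and does not call any VMM API. `ServiceTier`, its fields, and the sample numbers are hypothetical stand-ins named after the properties mentioned in the error. One plausible reading, not confirmed here, is that a tier whose VMs have lost their association with the service counts zero instances and so falls below its minimum.

```python
from dataclasses import dataclass


@dataclass
class ServiceTier:
    """Hypothetical stand-in for a VMM service tier; not a real VMM type."""
    name: str
    instance_minimum_count: int
    instance_maximum_count: int
    instance_count: int  # VMs currently associated with the tier

    def is_within_range(self) -> bool:
        # Valid only when minimum <= current count <= maximum
        return (
            self.instance_minimum_count
            <= self.instance_count
            <= self.instance_maximum_count
        )


def tiers_out_of_range(tiers):
    """Return the names of tiers whose instance count violates the range."""
    return [tier.name for tier in tiers if not tier.is_within_range()]


# Illustrative values only: a two-tier service whose VMs are no longer
# associated with it, so every tier reports zero instances.
tiers = [
    ServiceTier("Web", 2, 4, 0),
    ServiceTier("SQL", 1, 2, 0),
]
print(tiers_out_of_range(tiers))
```

Under that assumption, every tier in the service would fail the check at once, which would be consistent with any operation on the service being refused.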

I couldn’t find any way to re-associate the VMs with the service. Internet searches only turned up hits related to the actual VMM service (as in, the Windows service named “System Center Virtual Machine Manager Agent”). This may be a repairable problem, but I found no answer. In our case, it allowed us to eliminate the restrictive service while leaving the VMs themselves intact. All we had to do was delete the service in the error state. Unfortunately, it looks like if you actually want to keep your service, any damage to the highly fragile VMM agent will require a call to Microsoft support and will likely involve manual manipulation of the VMM database.
