
One of the great unsung heroes of virtualization is the thin-provisioned virtual disk, known in Hyper-V terms as a Dynamically Expanding VHD. To say that it doesn’t receive its proper due is an understatement. It is more accurate to say that it is unjustly vilified and unfairly cast aside as useless technology. This post will defend this valuable tool.

Terminology Clarification

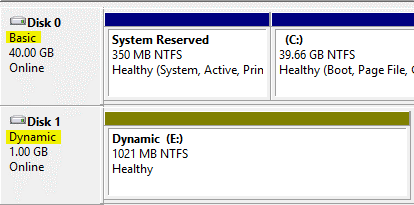

The proper label for this technology is “dynamically expanding”. Usually, this is shortened to just “dynamic VHD” or “dynamic disk”. As a descriptivist, I see nothing wrong with using this term as long as you and your audience understand what you mean. However, the term “dynamic disk” also refers to a logical volume type in Windows operating systems, as opposed to the basic disk. These dynamic disks are normally used for Windows-controlled software RAID and by special applications such as Microsoft Data Protection Manager. The only relevance this disk type has to Hyper-V is that host-initiated VSS backups cannot back up VHDs that contain dynamic volumes without taking the owning virtual machine offline.

The rest of this article will use the term “dynamic VHD” and variants of “dynamically expanding virtual disks” interchangeably. Also, no distinction will be made between VHD and VHDX unless it’s important.

What Dynamically Expanding Disks Are

The idea behind dynamically expanding disks is very simple to understand. As explained in an earlier article, the virtual disks that your virtual machines use are just files. When you create a new virtual disk, you assign it a size. The fixed disk type pre-allocates all that space as a VHD file of approximately the same size. A dynamic VHD is initially created as a very small file that grows as data is written to it, but it cannot grow beyond its configured maximum. It does not shrink on its own; shrink operations must be scripted or performed manually. However, when a file is deleted inside a dynamic VHD, the blocks it occupied are reused for new data rather than further expanding the VHD file.
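To make the growth behavior concrete, here is a toy Python model of a dynamically expanding disk. It is a sketch built on simplifying assumptions and does not follow the real VHD or VHDX on-disk format. The backing file grows in fixed-size blocks (2 MiB here, an illustrative value). A block is allocated the first time anything inside it is written. Header and metadata overhead is ignored. The class name `DynamicDiskModel` is hypothetical.

```python
class DynamicDiskModel:
    """Toy model of a dynamically expanding virtual disk (not the real VHD/VHDX format).

    The backing file holds only blocks that have been written at least once;
    header and metadata overhead is ignored.
    """

    def __init__(self, max_size, block_size=2 * 1024 * 1024):
        self.max_size = max_size      # configured maximum size, in bytes
        self.block_size = block_size  # allocation unit of the backing file (assumed)
        self.allocated = set()        # virtual block numbers already backed by the file

    @property
    def file_size(self):
        """Current size of the backing file, in bytes."""
        return len(self.allocated) * self.block_size

    def write(self, offset, length):
        """Record a guest write of `length` bytes at `offset`."""
        if length <= 0:
            return
        if offset < 0 or offset + length > self.max_size:
            raise ValueError("write extends past the disk's maximum size")
        first = offset // self.block_size
        last = (offset + length - 1) // self.block_size
        # Blocks already in the set are simply reused; only new blocks grow the file.
        self.allocated.update(range(first, last + 1))


MiB = 1024 ** 2
GiB = 1024 ** 3

disk = DynamicDiskModel(max_size=32 * GiB)
print(disk.file_size)          # 0 in this model (a real file starts with a small header)
disk.write(0, 10 * MiB)
print(disk.file_size // MiB)   # 10
disk.write(0, 10 * MiB)        # e.g. the guest reuses clusters freed by a deleted file
print(disk.file_size // MiB)   # 10: no further growth
```

Overwriting an already-allocated range leaves the file size unchanged. That is what happens when the guest file system reuses the clusters of a deleted file. Only writes to never-touched blocks make the file grow, and no write can take it past `max_size`.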

FUD-Busting

The first order of business is to answer the copious amounts of libel leveled against dynamic disks.

“They’ll Eat All Your Disk Space and Your VMs Will Die!” FUD

“The cat, having sat upon a hot stove lid, will not sit upon a hot stove lid again. But he won’t sit upon a cold stove lid, either.” – Mark Twain

This FUD is usually accompanied by a terrifying anecdote about a situation in which a physical storage location for VHDs filled up and all the VMs were paused. This is a risk you take with dynamic VHDs, but blaming the outcome on dynamic disks is inappropriate. The real problems were that the storage allocation was not properly planned and the storage location was not properly monitored. If you, or your organization, have weaknesses in either of these areas, then, by all means, use fixed VHDs.
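The two failures named above, poor allocation planning and poor monitoring, can both be expressed as simple checks against a storage location. The following Python sketch is hypothetical. The `VirtualDisk` record, the `storage_report` function, the sample figures, and the 20% free-space warning threshold are illustrative assumptions and are not part of Hyper-V or any monitoring product. In practice, the current and maximum sizes would come from your own inventory or monitoring tooling.

```python
from dataclasses import dataclass


@dataclass
class VirtualDisk:
    """Hypothetical inventory record for one dynamic VHD on a storage location."""
    name: str
    max_size_gb: int      # configured maximum size of the dynamic VHD
    current_size_gb: int  # current size of the VHD file on the host


def storage_report(disks, capacity_gb, warn_free_fraction=0.2):
    """Planning and monitoring checks for one storage location (illustrative only)."""
    used = sum(d.current_size_gb for d in disks)
    worst_case = sum(d.max_size_gb for d in disks)  # every VHD grown to its maximum
    free = capacity_gb - used
    return {
        "used_gb": used,
        "free_gb": free,
        # Planning: a ratio above 1.0 means the location is overcommitted and can fill up.
        "overcommit_ratio": worst_case / capacity_gb,
        "can_fill_up": worst_case > capacity_gb,
        # Monitoring: raise an alert well before the location is actually full.
        "low_space_warning": free < warn_free_fraction * capacity_gb,
    }


# Hypothetical sample: three VMs sharing a 600 GB storage location.
disks = [
    VirtualDisk("web", max_size_gb=60, current_size_gb=20),
    VirtualDisk("files", max_size_gb=500, current_size_gb=300),
    VirtualDisk("app", max_size_gb=200, current_size_gb=150),
]
report = storage_report(disks, capacity_gb=600)
print(report["used_gb"], report["free_gb"])                # 470 130
print(round(report["overcommit_ratio"], 2))                # 1.27
print(report["can_fill_up"], report["low_space_warning"])  # True False
```

Being overcommitted is not a failure in itself, because that is the normal state of thin provisioning. The scenario in the anecdotes only happens when no one plans for the worst case and no one acts on the warning.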

“Performance with Dynamic Disks is TERRIBLE!” FUD

The performance differences are present, but trivial for most common loads. The real issue is that some individuals have a very unhealthy obsession with performance. If your primary driver is performance, then virtualization is not your solution to begin with. Read our V2P guidance and return to raw hardware. You’ll be happier. While you’re there, please talk to someone. Your friends and family are worried about you.

This FUD is usually reinforced by benchmarks that make a really big deal about the millisecond or two that separates fixed from dynamic. Benchmarks are useful for comparing two different hardware components or tracking performance variance across time. So, if you want to know if the latest AMD chip can process data as quickly as the latest Intel chip, benchmarks are appropriate. If you want to know if fixed disks are faster than dynamic disks, then benchmarks are appropriate. If you want evidence that your hardware doesn’t run as well as it did when you first bought it, and you have earlier benchmark results to compare against, benchmarks are appropriate. Proclaiming that product B is absolutely useless, or should never be used, because its benchmark results trail product A’s is appropriate only for unscrupulous salesmen/marketing types and blind-worship fanboys. Benchmarks rarely, if ever, give you more than a very general idea of what will happen in production. The benchmark differences between fixed and dynamic do not translate well into generalized production metrics.

“Dynamic Disks Cause Fragmentation and will Make your VMs Useless!” FUD

The benchmarks that were the be-all and end-all of the previous FUD tend to disappear pretty quickly when this FUD is trotted out. That’s because the results of the benchmarks don’t come anywhere near supporting the premise of the FUD. I won’t go so far as to say that fragmentation is a non-issue, but it’s certainly one of the most overblown issues in a hypervisor setting. With multiple virtual machines accessing the same storage location, disk access is naturally scattered regardless of fragmentation. With modern multi-spindle systems, the effects of fragmentation are typically minimized anyway. Proponents of this FUD will have a horror story at hand to back it up, usually involving a high-I/O load system that truly isn’t appropriate for a dynamic VHD. However, if I have one anecdote and I add another anecdote, what I have is anecdotes, not data.

Something to remember about fragmentation is that drive access on a de-duplicated volume follows a pattern very similar to that of a fragmented drive, and in many situations the pattern is worse. Support for active VHDs on de-duplicated storage is coming in Windows Server 2012 R2. Keep in mind that it is inconsistent for someone to claim that fragmentation is a major concern while de-duplication is not.

More on fragmentation at the end of this post.

“So-and-so Says to Never Use Dynamic Disks and S/He’s an Expert” FUD

Experts are human and humans are fallible. Besides, I’d want the complete context of such a statement before blindly accepting it as a totalitarian order anyway. For some loads, only fixed VHD will do. In some formats, an expert may not have the time or space to properly explain dynamic VHDs, so taking the safe route of recommending fixed disks may seem like the most appropriate thing to do. Some audiences don’t have the foundational knowledge or ability to grasp the risks of dynamic VHD, so again, recommending fixed disks may be the safest route. No matter who is speaking or what the subject matter is, advice that includes the terms “always” or “never” should automatically be suspect.

How Dynamic VHDs Operate in the Real World

When you put away the anecdotes and the benchmarks and all the other toys of the FUDdy-duddies and look at a real deployment of dynamic VHDs, what you’ll see is that they hold up very well when used sensibly. You can make reasonable predictions about their growth, and you must watch drive space usage. The various bits of any given dynamic VHD are generally distributed in a fashion that doesn’t cause meaningful fragmentation. Consumers of the applications and services on dynamic VHDs don’t see any delays or issues.

Using Dynamic VHDs Sensibly

“Cookies are a sometimes food.” – Cookie Monster

You probably don’t want to use dynamic VHDs all the time. Applications and services that perform heavy disk I/O are not appropriate. Some vendors will publish best practices recommendations that indicate what they prefer. As a general rule, databases are poor candidates for dynamic disks. Applications that use large amounts of disk space for scratch storage (also usually databases) are better suited for fixed disks. In my own production environments, I have placed databases on dynamic disks, but they were very low-load systems that had small amounts of data and only a few users. I did have two medium-load production databases that inadvertently went live on dynamic disks. They were later converted to fixed, and no performance difference was detectable by the end users (I was very sad, as I was expecting a parade).

Two places that are appropriate for dynamic VHDs are those that contain operating systems and those that will contain randomly accessed but slow-growth data, such as general-purpose file servers.

Operating System VHDs

Arguably, the best use of a dynamic VHD is a virtual disk that holds only an operating system. A prime example is Windows Server 2012 itself. Once in production, it will rarely, if ever, use more than 22-24 GB (unless you have lots of RAM assigned and it needs a large page file). However, if you install it on a 24 GB VHD, you have two problems. The first is that VSS won’t have the 20% free space it wants, and that can lead to problems. The second is that Microsoft won’t support Windows Server 2012 running on anything less than 32 GB. So, consider a system that holds 100 virtual machines under the “fixed only” philosophy. Each 32 GB fixed VHD carries roughly 8 GB that the guest never uses, so across 100 virtual machines that’s 800 GB of completely dead space. Disk space is certainly getting cheaper, but any organization that considers 800 GB cheap enough to just throw away probably has a lot more than 100 virtual machines. A very basic driver of virtualization and thin provisioning is to reduce this waste. Adopting an “always fixed, all the time” attitude defeats one of the major purposes of virtualizing in the first place.
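The 800 GB figure is straightforward arithmetic. Each fixed VHD must be at least 32 GB to be supported, while the guest typically uses only about 22-24 GB. Here is a minimal Python sketch of the calculation using the figures from the text (the function name is illustrative):

```python
def dead_space_gb(vm_count, provisioned_gb, used_gb):
    """Space that fixed VHDs reserve on the host but the guests never use."""
    return vm_count * (provisioned_gb - used_gb)


MIN_SUPPORTED_GB = 32  # smallest supported disk for Windows Server 2012, per the text
VM_COUNT = 100

print(dead_space_gb(VM_COUNT, MIN_SUPPORTED_GB, used_gb=24))  # 800
print(dead_space_gb(VM_COUNT, MIN_SUPPORTED_GB, used_gb=22))  # 1000
```

At the low end of the 22-24 GB range, the waste reaches 1 TB. With dynamic VHDs, the host instead consumes roughly what each guest actually writes.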

File Server VHDs

File servers are another perfect application of dynamic VHDs. Access is more or less random anyway, so fragmentation is usually a non-issue. No one expects blinding performance out of a file server (at least, no one with an ounce of reason), so any performance hits are automatically trivial. Also, it has always been normal to create gigantic empty fields of storage space for file servers and then allow them to fill gradually. Even with sloppy monitoring, that growth should be controllable.

Making Fragmentation Go Away

If you’re genuinely concerned about fragmentation of dynamic VHDs, you might be happy to know that there’s a solution that doesn’t preclude their use. Unfortunately, due to a potential contractual issue I have with another project, I don’t think I can come right out and say it. It’s not really a secret, just maybe not something I can talk about in two places. I can get you started on figuring it out for yourself, though. First, ask an Exchange administrator about the technique s/he uses to fully defragment a mailbox database without blocking user access to their data. Second, ask yourself if you can leverage Storage Live Migration to achieve similar results.


15 thoughts on "Proper Use of Hyper-V Dynamic Disks"

I don’t have any hard data, but my impression is that the Windows 2012 VHDX format will avoid the fragmentation issue. It uses a completely different internal structure.

Sorry, but have to disagree with all of this.

Dynamically expanding disks can eat up space and if you have to properly plan the use of them then you might as well not have used them to begin with.

Also VM’s are often provisioned by IT pro’s and passed on to the customer who may not fully understand the drawbacks of dynamically expanding.

Regarding performance, sure a VM may not offer the same performance as a physical machine but why hinder it with a dynamically expanding disk?

“… if you have to properly plan the use of them then you might as well not have used them to begin with.”

1. You should be properly planning for all disks. Fixed disks do not absolve you of basic responsibility.

2. If people actually filled up their fixed disks, your argument would be valid. You know that over 99% of all fixed disks in service are using 80% or less (usually a lot less) and will stay below that margin throughout their lifetimes. For every 100 GB of space you provision, you know that no more than 80 will be used. When you hit the 1 TB mark, that’s 200 GB of completely wasted space. You can do a lot with 200 GB.

3. Dynamic disks place the power of time on the side of the customer. They purchase 1 TB today and you allocate 2 TB on it and deploy a decent monitoring system. When storage utilization reaches a certain point, which you determine by data growth projections and basic trend analysis, the customer purchases additional space. By deferring the expansion, they are able to acquire more or faster storage for a lower total investment.
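Point 3 mentions “data growth projections and basic trend analysis”. This can be as simple as fitting a straight line to periodic usage samples and estimating when usage will cross a purchase threshold. Here is a minimal Python sketch. The sample data, the 80% threshold, and the function names are hypothetical, and a straight-line least-squares fit is only one of many possible projection methods.

```python
def fit_line(xs, ys):
    """Ordinary least-squares fit; returns (slope, intercept). Needs 2+ distinct xs."""
    n = len(xs)
    x_mean = sum(xs) / n
    y_mean = sum(ys) / n
    sxx = sum((x - x_mean) ** 2 for x in xs)
    sxy = sum((x - x_mean) * (y - y_mean) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, y_mean - slope * x_mean


def days_until_threshold(days, used_gb, capacity_gb, threshold=0.8):
    """Days after the last sample until projected usage reaches threshold * capacity.

    Returns None when the trend is flat or shrinking; a negative result means
    the threshold has already been crossed.
    """
    slope, intercept = fit_line(days, used_gb)
    if slope <= 0:
        return None
    crossing_day = (threshold * capacity_gb - intercept) / slope
    return crossing_day - days[-1]


# Hypothetical samples: physical usage (GB) of a 1 TB (1000 GB) purchase, every 30 days.
days = [0, 30, 60, 90]
used = [400, 430, 460, 490]
print(days_until_threshold(days, used, capacity_gb=1000))  # 310.0
```

In this made-up example, usage grows by about 1 GB per day. That gives the customer roughly ten months before the next storage purchase needs to land, and that is the time the deferred spending buys.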

“Also VM’s are often provisioned by IT pro’s and passed on to the customer who may not fully understand the drawbacks of dynamically expanding.”

I said that. We encourage and welcome comments, but we ask that you read the material prior to commenting on it. Thank you for your understanding. That said, during my years as a field engineer, I preferred to educate the customer and leave them with the proper tools to monitor and manage their systems. I believe that such a customer-focused approach is superior to assuming that they are idiots and crippling useful portions of the technology that they paid me to install.

“Regarding performance, sure a VM may not offer the same performance as a physical machine but why hinder it with a dynamically expanding disk?”

Because end-users cannot detect a 1-2% performance difference, so you waste a lot of space and have nothing to show for it.

I have been reading more and more articles and arguments about the “Proper Use of Hyper-V Dynamic Disks” for many years, and I ended up going with production practices similar to the ones you mention for Dynamic VHDs (or a mix and match of VHD types within one VM), starting with Hyper-V 2.0. It is great to know that they are also recommended (or at least given the green light) for server OSes in production use. I mostly agree with this; however, would there be any concerns for a server with the AD role? Another question is VHD corruption (I haven’t seen one yet): are there any studies or real cases on the corruption rate of Fixed vs Dynamic? People reading this forum pretty much know the pros and cons of Dynamic vs Fixed; as Eric mentioned, the focus here is to provide a better understanding of Dynamic VHDs and how to use them properly for your needs. In fact, as IT people, we are all interested in knowing how to make use of Dynamic VHDs as safely and efficiently as we can. And we should also agree that a storage planning mistake is not a con of using Dynamic VHDs.

This post is helpful for me.

Thanks Eric.

No, there is no special concern for using dynamic VHD with Active Directory. The only reports of corruption I’ve seen had to do with the new VHDX format and incorrect disabling of the system file cache. These problems should have been corrected in patches.

Hi, so the dynamic disk is not recommended in virtualization? (I mean the dynamic logical disk inside the virtual machine.)

The dynamic disk volume type is never recommended in physical or virtual machines unless you have a definite use for it. As in, you’re going to install Microsoft Data Protection Manager.

Thanks again Eric.

I’ve been using dynamically expanding disks for Exchange and SQL for over 2 years without any issues. I just started reading more about the differences and am deciding whether I should convert them to fixed disks on my 2012 servers. I’m not sure how much of a performance improvement I’ll get by converting.

Great article. We are getting so tired of hearing so-called experts and vendors say that we have to convert to fixed VHDXs (VHDs). Some are even bold enough to state that they will not support you if you use dynamic VHDXs (a competing backup vendor). We have used dynamic disks for over 6 years, except on a few VMs (and even those are mixed, as the OS disk is always dynamic), and have never had issues. It is refreshing to see an article call out the FUD.

Great article! I almost exclusively use dynamically expanding vhds because of all the benefits you describe here. Thanks!

Because of the overwhelming bias and opinion spread as fact by “IT Professionals”, I have been getting crap for years for using dynamic disks. You said it best: “Because end-users cannot detect a 1-2% performance difference...”.

All environments are different, but in most cases, that 1-2% difference in performance won’t make a bit of difference. If your environment needs that extra performance, like the OP said, virtualization is not for you.

On the first FUD, as Eric says, I do see the psychology against dynamically expanding disks. It’s sorta like spending from a checking account while monitoring your balance versus living on credit cards while monitoring the sum of your credit limits. There’s something inherently yucky about the latter.

So, if you can be disciplined, credit cards are awesome. If you can’t, it’s hell.

Technology-wise, especially in 2019, I have absolutely nothing against dynamically expanding disks, and I am a SQL guy, nuts about that last 0.5 percent of performance. I’m here just explaining the psychology of it.