Memory is the most precious resource in the typical virtualization host. It cannot be shared among virtual machines. The computing costs to compress or de-duplicate it are so prohibitive that neither is feasible.

Virtual machine density relies heavily on the amount of installed physical memory, but the financial cost of memory escalates quickly with higher chip capacities. Proper memory planning is absolutely essential to a successful Hyper-V deployment. In this article I’ll explain the concepts of memory in a Hyper-V environment to give you the necessary background to make those plans.

Hyper-V’s Memory Operations and Requirements

A hypervisor’s primary purpose is to control access to hardware, and that includes memory. It walls off the memory assigned to each virtual machine in such a way that the virtual machine “sees” its memory in the same way a physical machine views its memory. The virtual machine is completely unable to access anything other than what Hyper-V assigns to it.

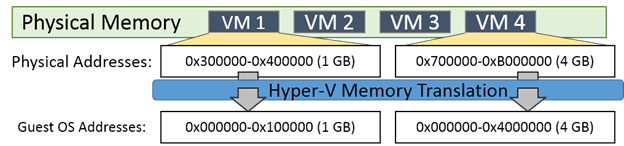

The technique that Hyper-V uses to achieve this is address translation. As you may recall from the introductory article on Hyper-V, the Hyper-V server role benefits greatly from hardware-based translation, called Second Level Address Translation, although it can perform it in software if necessary. 2012 R2 is the last server version that will include this software capability; Client Hyper-V has never had it. The following diagram provides a conceptual illustration of address translation:

The guest operating system of each virtual machine believes that its memory address space starts at 0 and counts upward to encompass all available memory. When it passes requests for memory access through its CPU, the request is translated into the physical memory address behind the scenes.
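As a rough illustration of the idea, the following Python sketch models translation with a simple per-guest lookup table. It is a toy model, not how Hyper-V or Second Level Address Translation is implemented: the 4 KB page size, the `GuestAddressSpace` class, and the page numbers are all assumptions made up for the example.

```python
PAGE_SIZE = 4096  # bytes; an assumed page size for illustration only


class GuestAddressSpace:
    """Toy model of one guest's memory (hypothetical, not a Hyper-V structure)."""

    def __init__(self, host_pages):
        # host_pages[i] is the host physical page that backs guest page i.
        self._host_pages = list(host_pages)

    def translate(self, guest_address):
        """Return the host physical address behind a guest address."""
        page, offset = divmod(guest_address, PAGE_SIZE)
        if guest_address < 0 or page >= len(self._host_pages):
            # The guest cannot reach anything that was not assigned to it.
            raise ValueError("address outside the memory assigned to this guest")
        return self._host_pages[page] * PAGE_SIZE + offset


# Both guests believe their memory starts at address 0,
# but each is backed by different host pages.
vm_a = GuestAddressSpace([700, 701, 950])
vm_b = GuestAddressSpace([12, 13])

print(vm_a.translate(4101))  # 2871301 (host page 701, offset 5)
print(vm_b.translate(0))     # 49152 (host page 12, offset 0)
```

Note that the backing host pages do not need to be contiguous; the guest still sees one unbroken range starting at 0.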

Hyper-V’s memory assignment and tracking activity is not without its own costs. Hyper-V itself needs about 300 megabytes of memory for its own process. For each virtual machine, any memory amount up to the first gigabyte requires 32 megabytes of overhead. Every gigabyte past the first incurs another 8 megabytes of overhead. All of this is in addition to the 512 megabytes that the management operating system requires (it will often use less, but the actual amount cannot be estimated in advance with any level of accuracy).

This means that the absolute minimum amount of memory that you should expect your physical host to be unable to utilize for virtual machines is 1 GB. In practice, it is commonly observed that other processes in the management operating system require even more memory to be set aside. For a typical Hyper-V host, expect about 2 GB to be necessary just to run Hyper-V and the management operating system. For a high memory host that will serve a high number of virtual machines, it is wise to plan for as much as 4 GB for the system and overhead.
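The figures above can be turned into a quick estimate. The sketch below is only a planning aid under stated assumptions: the function and constant names are hypothetical, partial gigabytes are rounded up (the article does not say how they are counted), and the extra headroom recommended for real hosts is not included.

```python
import math

HYPERVISOR_MB = 300             # Hyper-V's own process, per the figures above
MANAGEMENT_OS_MB = 512          # management operating system
FIRST_GB_OVERHEAD_MB = 32       # per VM, covers up to the first gigabyte
ADDITIONAL_GB_OVERHEAD_MB = 8   # per VM, for each gigabyte past the first


def vm_overhead_mb(assigned_mb):
    """Estimate the tracking overhead for one virtual machine."""
    if assigned_mb <= 0:
        raise ValueError("assigned memory must be positive")
    # Assumption: a partial gigabyte counts as a whole one.
    extra_gb = max(0, math.ceil(assigned_mb / 1024) - 1)
    return FIRST_GB_OVERHEAD_MB + ADDITIONAL_GB_OVERHEAD_MB * extra_gb


def host_reserved_mb(vm_sizes_mb):
    """Estimate memory that is unavailable to guests on a host."""
    overhead = sum(vm_overhead_mb(size) for size in vm_sizes_mb)
    return HYPERVISOR_MB + MANAGEMENT_OS_MB + overhead


# Three guests with 4 GB, 2 GB, and 1 GB assigned.
print(host_reserved_mb([4096, 2048, 1024]))  # 940
```

The result lands close to the 1 GB floor described above, which is why planning for 2 GB or more on a typical host is the safer choice.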

Hyper-V’s management operating system uses a page file just like any other installation of Windows. However, the automatic sizing that Windows applies is likely to produce an inappropriate size on a virtualization host. The next section discusses this issue.

Hyper-V and the Page File

In earlier versions of Hyper-V, Windows automatically set the management operating system’s page file to a size at or above the amount of system memory. Since a virtualization host typically has a great deal of memory, this resulted in page files that were dozens or even hundreds of gigabytes in size. This is completely unnecessary for a Hyper-V host.

Unlike some other hypervisors, Hyper-V does not provide memory paging operations for its guests except under very rare, specific conditions. In those situations, it does not use the management operating system’s page file. Therefore, creating a large page file is wasteful as most memory contents on a Hyper-V system will never be paged to disk in any way that the management operating system or hypervisor will be involved with.

The default page file allocation system in 2012 and later is superior to earlier versions, but still often results in very large page file sizes. If the default allocation is not causing disk space starvation on your host, the recommendation is to leave it set to System Managed. Even though it’s not the most efficient use of space, it does allow the management operating system to respond to unusual circumstances with less administrative intervention. Such situations are rare, so if reclaiming the space is of meaningful benefit, then do so.

Virtual Machine Memory

The bulk of the memory in your hosts will be assigned to the virtual machines. The bulk of a Hyper-V administrator’s resource management time is often focused on memory balancing. The best approach is to develop a habit of providing the minimal acceptable level of memory to each virtual machine and only increasing it after need has been demonstrated.

The simplest distribution method is to use static memory (sometimes called fixed memory). This ensures maximum compatibility at the immediate expense of virtual machine density. It can also affect virtual machine mobility. In order to move a virtual machine from one host to another, the destination must have available capacity for that virtual machine; the less memory assigned to that virtual machine, the more likely it is to fit. Static memory is required for a number of server applications, such as Microsoft Exchange Server, as they allocate memory on the basis of what is available.

A more complicated method that leads to higher densities is Dynamic Memory. This technology operates by interacting with the memory manager inside guest operating systems to gauge what amount of memory the services and applications are requesting. If the requested amount exceeds the assigned amount, Dynamic Memory can assign more to that virtual machine (up to 1 terabyte or a lower administrator-defined limit). If other virtual machines increase their demand such that the total exceeds what is physically available, Dynamic Memory can determine if any guests have a lower demand than what is assigned to them and use a balloon driver to reclaim the excess for redistribution.

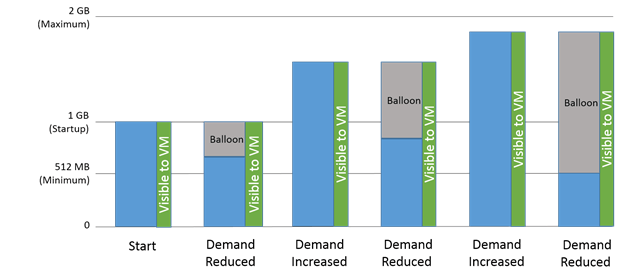

The following image illustrates a conceptual usage of Dynamic Memory for a single virtual machine:

The amount of memory that the virtual machine believes it has starts at the Startup level. It can increase, but it can never decrease. To reclaim memory, the hypervisor instead has the balloon driver inside the guest request memory from the guest’s memory manager. The guest believes that a local process is using that memory and that it retains some control over it; in truth, the hypervisor controls it and may assign it to another virtual machine or leave it in the host’s pool. What the diagram doesn’t show is that the balloon driver is only activated when there is memory contention. If a virtual machine’s demand drops and no other virtual machine requires an increase, the balloon driver does not activate.
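The relationship between what the guest sees and what it actually holds can be sketched in a few lines of Python. This is a simplified model, not Hyper-V's algorithm: the `DynamicMemoryVM` class and its method names are hypothetical, and the choice to deflate the balloon before raising the visible amount is an assumption made for the illustration.

```python
class DynamicMemoryVM:
    """Toy model of one guest under Dynamic Memory (hypothetical names)."""

    def __init__(self, startup_mb, minimum_mb, maximum_mb):
        self.minimum_mb = minimum_mb
        self.maximum_mb = maximum_mb
        self.visible_mb = startup_mb  # what the guest believes it has
        self.balloon_mb = 0           # held by the balloon driver in the guest

    @property
    def assigned_mb(self):
        """Memory the guest can really use."""
        return self.visible_mb - self.balloon_mb

    def grow(self, amount_mb):
        """Give the guest more memory, never exceeding Maximum."""
        amount_mb = min(amount_mb, self.maximum_mb - self.assigned_mb)
        # Assumption: hand back ballooned memory before adding new memory.
        from_balloon = min(amount_mb, self.balloon_mb)
        self.balloon_mb -= from_balloon
        self.visible_mb += amount_mb - from_balloon
        return amount_mb

    def reclaim(self, amount_mb):
        """Inflate the balloon to take memory back, never going below Minimum."""
        amount_mb = min(amount_mb, self.assigned_mb - self.minimum_mb)
        self.balloon_mb += amount_mb  # visible_mb is left unchanged
        return amount_mb


vm = DynamicMemoryVM(startup_mb=1024, minimum_mb=512, maximum_mb=4096)
vm.grow(1024)     # demand rises: the guest now sees 2048 MB
vm.reclaim(1024)  # contention elsewhere: the balloon takes 1024 MB
print(vm.visible_mb, vm.assigned_mb)  # 2048 1024
```

After the reclaim, the guest still reports 2048 MB even though only 1024 MB is usable, which matches the rule that the visible amount never decreases.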

There are several things about Dynamic Memory to take note of:

- Processes that allocate memory based on what is available do not trigger a demand increase. Most applications and services do not allocate memory in that fashion.

- If a vendor indicates that a certain memory amount should be reserved for the virtual machine, that request can be satisfied using the Minimum setting.

- The minimum can be lowered while the virtual machine is on. The maximum can be raised while the virtual machine is on. The virtual machine must be off (not in Saved State) to make other changes.

- The actual amount of memory assigned to all virtual machines cannot exceed the host’s available memory. A running virtual machine’s demand will go unfulfilled, a stopped or saved virtual machine will not turn on, and an inbound virtual machine migration will be refused if there is not enough memory to accommodate it.

- To turn a virtual machine on, the amount specified for its Startup value must be available. To resume a virtual machine from a Saved state, the amount of memory it was using at that time must be available.

- A virtual machine using Dynamic Memory is not made aware of NUMA topology, although Hyper-V will still attempt to keep its processes and memory in the same NUMA node.
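The start and resume rules in the list above amount to a simple admission check. The Python sketch below restates them; the function names and the `"off"`/`"saved"` state strings are hypothetical, and a real host weighs additional factors such as the per-VM overhead discussed earlier.

```python
def required_mb(state, startup_mb, in_use_when_saved_mb=None):
    """Return the memory that must be free before the VM can run."""
    if state == "off":
        # Turning on requires the full Startup value.
        return startup_mb
    if state == "saved":
        # Resuming requires what the VM was using when it was saved.
        if in_use_when_saved_mb is None:
            raise ValueError("a saved VM needs its memory usage at save time")
        return in_use_when_saved_mb
    raise ValueError(f"unsupported state: {state!r}")


def can_run(host_available_mb, state, startup_mb, in_use_when_saved_mb=None):
    """True if the host has enough free memory to start or resume the VM."""
    needed = required_mb(state, startup_mb, in_use_when_saved_mb)
    return needed <= host_available_mb


# A VM with a 1024 MB Startup value that had grown to 3072 MB before saving.
print(can_run(2048, "off", 1024))          # True
print(can_run(2048, "saved", 1024, 3072))  # False
```

The second call shows why a saved virtual machine can fail to resume on a host where the same machine would start cleanly from the off state.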

We’ve covered Dynamic Memory across several posts on our blog. You can find them here if you’d like to dive deeper into the subject:

- Hyper-V Dynamic Memory: An Illustrated Guide

- 10 Best Practices and Strategies for Hyper-V Dynamic Memory

- How to get your Hyper-V Dynamic Memory Configuration Right

- Deep Strategies for Hyper-V Dynamic Memory

NUMA in Hyper-V

NUMA (non-uniform memory access) is a technology used to maintain maximum performance in multi-processor environments. If your system has only a single physical processor, NUMA is not a concern. In multi-processor systems, only a very few applications are sensitive enough to memory performance issues that NUMA is something to worry about.

The contrast to NUMA is the older SMP design (symmetric multi-processing). In much older systems, the memory controller was a component that resided on the motherboard. Its purpose was to broker all connections to memory and to keep the contents of memory in a consistent state. When a CPU needed to access memory, it sent a request to the memory controller. In multi-processor systems, all requests were handled through the same controller. It handled each request in a round-robin fashion, which led to an even distribution of process memory across available memory banks, hence the “symmetric” nomenclature.

Over time, memory controllers became integrated onto the CPU to address various performance and reliability issues. In modern multi-socket systems, physical memory banks are assigned to particular sockets. If that socket has a CPU, then those banks are handled by its memory controller. If a thread running on a different physical CPU needs to access memory, then it must go through the CPU that contains the owning memory controller. If the host is in SMP mode (called node interleaving on some systems), then threads will be evenly distributed across the memory banks by working through controllers on other CPUs.

If NUMA is enabled (which means that node interleaving is disabled on systems using that name), then the system tries to ensure that threads running on any given physical processor are assigned memory owned by that processor’s memory controller. This improves performance for applications that do not allocate memory amounts greater than the maximum size of a NUMA node. It should be reiterated that the performance difference is very small and very few services or applications are sensitive enough to the difference for it to be of concern.

Hyper-V does have the ability to project NUMA topology into virtual machines that are using fixed memory allocation. Use the memory settings in Hyper-V Manager or PowerShell to control it. The projected topology does not need to match that of the host. Do not change these settings unless you have a very clear understanding of NUMA and its effects. It is much easier to hinder performance than to improve it. As mentioned in the previous section, NUMA is not projected into guests configured for Dynamic Memory as guest NUMA cannot be changed on the fly.

Working with Memory

The easiest way to work with memory for a single Hyper-V guest is to use Hyper-V Manager as shown in the earlier article on Virtual Machine settings. The PowerShell cmdlet to modify memory for a virtual machine is Set-VMMemory; its documentation is part of Microsoft’s reference for the Hyper-V PowerShell module.

Host NUMA must be enabled in the system BIOS. Once enabled, there is nothing else to configure at the host level. You can view its status using PowerShell. The related cmdlets are Get-VMHostNumaNode and Get-VMHostNumaNodeStatus.

Now that you’ve gained an understanding of the challenges around memory and how Hyper-V handles it, you have the foundational knowledge to properly plan memory capacity for your deployment.