
Table of contents

- What is Node Fairness for Hyper-V?

- How Does Node Fairness Work?

- What are the Thresholds for Node Fairness?

- How is Node Fairness Triggered?

- What About Storage, Networking, and Power Loads?

- How Does Node Fairness Interact with Virtual Machine Manager’s Dynamic Optimization Feature?

- Are There Any Drawbacks to Node Fairness?

- How Do I Configure Node Fairness?

When we talk about sizing clusters of systems running Hyper-V virtual machines, we tend to do so from a holistic position. The advice that I typically give is to project the expected load and build a cluster that can operate that load on n – 1 nodes. We might occasionally talk about what happens if a Live Migration attempts to move a virtual machine to a node that doesn’t have sufficient capacity. Rarely do we say anything about how to keep guests in any sort of balance across the nodes of a cluster.

New in Failover Clustering with Windows Server 2016 and Hyper-V Server 2016 is “Node Fairness”, a technology that addresses this concern.

What is Node Fairness for Hyper-V?

Node Fairness is a feature of Failover Clustering (not Hyper-V) that will automatically Live Migrate guests away from an overloaded cluster node. Even though it is a Failover Clustering feature, it only operates on Hyper-V virtual machines.

How Does Node Fairness Work?

When triggered, the cluster service will check the host for the presence of two conditions:

- Has the physical host’s average CPU load (not what you see in Task Manager) exceeded the tolerance threshold in the past five minutes?

- Is the physical host’s current memory load above the tolerance threshold?

If either condition is true, the host is a candidate for re-balancing. The heuristics that determine which virtual machines it migrates are not published, and I was not able to find any distinguishable pattern in my own testing. We may discover more as time goes on.
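The two conditions can be written down as a small PowerShell function, which may make the logic easier to reason about. This is a minimal sketch: `Test-NodeFairnessCandidate` is a hypothetical helper, not part of the FailoverClusters module, and it assumes you already have the five-minute CPU average and the current memory load as percentages. It treats reaching the threshold as sufficient, matching the “at least” wording of the thresholds, and it makes no attempt to model Microsoft’s unpublished choice of which VMs to move.

```powershell
# Hypothetical helper: models the Node Fairness candidate check described above.
# It is NOT a FailoverClusters cmdlet; all inputs are assumed to be percentages.
function Test-NodeFairnessCandidate {
    [CmdletBinding()]
    param(
        # Average CPU load over the past five minutes (0-100)
        [Parameter(Mandatory)][ValidateRange(0, 100)][double]$CpuAverage5Min,

        # Current memory load (0-100)
        [Parameter(Mandatory)][ValidateRange(0, 100)][double]$MemoryPercent,

        # Threshold for the configured aggressiveness: 80, 70, or 60
        [Parameter(Mandatory)][ValidateRange(0, 100)][double]$ThresholdPercent
    )
    # Either metric reaching the threshold makes the node a re-balancing candidate
    ($CpuAverage5Min -ge $ThresholdPercent) -or ($MemoryPercent -ge $ThresholdPercent)
}

# CPU is moderate but memory is at 85%, so at the default 80% threshold
# the node qualifies. Returns True.
Test-NodeFairnessCandidate -CpuAverage5Min 35 -MemoryPercent 85 -ThresholdPercent 80
```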

What are the Thresholds for Node Fairness?

There are three possible thresholds for Node Fairness, collectively called its “Aggressiveness”. I’ll number them by their values:

- Low (1): Either metric must reach at least 80% of the node’s capacity

- Medium (2): Either metric must reach at least 70% of the node’s capacity

- High (3): Either metric must reach at least 60% of the node’s capacity

If the numbers seem reversed to you, rethink your position. Node Fairness is node-centric, so it looks at the node’s load levels, not the cluster’s. Moving guests at a lower node load level is considered to be a more aggressive action.
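To make the inverse relationship concrete, the following sketch maps each aggressiveness setting to its threshold and checks a hypothetical node running at a steady 75% load. The numbers 1, 2, and 3 are the AutoBalancerLevel values used when configuring Node Fairness; the `$levels` table and the 75% figure are illustrative assumptions only.

```powershell
# Illustrative table of Node Fairness aggressiveness settings.
# Level holds the AutoBalancerLevel value; Percent is the node-load threshold.
$levels = @(
    [pscustomobject]@{ Level = 1; Name = 'Low';    Percent = 80 }
    [pscustomobject]@{ Level = 2; Name = 'Medium'; Percent = 70 }
    [pscustomobject]@{ Level = 3; Name = 'High';   Percent = 60 }
)

$nodeLoad = 75  # hypothetical CPU or memory load on one node, in percent

$results = foreach ($l in $levels) {
    [pscustomobject]@{
        Level            = $l.Level
        Name             = $l.Name
        ThresholdPercent = $l.Percent
        # More aggressive = lower threshold = guests move at a lighter load
        Rebalance        = ($nodeLoad -ge $l.Percent)
    }
}

$results | Format-Table
```

At 75%, only the Medium and High settings would treat the node as a re-balancing candidate; the default Low setting would leave it alone.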

How is Node Fairness Triggered?

There are only two ways for Node Fairness’s re-balancing operations to be triggered:

- Every 30 minutes (the default)

- When a node joins the cluster. This applies both to a newly added node and to an existing node rejoining, such as after a reboot.

I read a note in a TechNet blog article saying that Node Fairness can be triggered manually. If that’s true, they forgot to document it anywhere. I looked through Failover Cluster Manager and the FailoverClusters PowerShell module for any way to do that and came up empty. That article was from June 2016, when Windows Server 2016 was still in Technical Preview, so it’s entirely possible that Microsoft changed its mind about how much control to give you over your environment, or the author might simply have confused Node Fairness with System Center Virtual Machine Manager’s (VMM) Dynamic Optimization.

What About Storage, Networking, and Power Loads?

Storage load is not considered by Node Fairness. A standard cluster Live Migration would rarely solve a storage balancing problem anyway; in most cases, tiering within the storage subsystem is the better response. If you’re having trouble with VMs bogging down your storage system and upgrading storage isn’t an option, I’ll look at the new Storage Quality of Service features in an upcoming article.

Network traffic and physical host power loads are also not factored into Node Fairness.

How Does Node Fairness Interact with Virtual Machine Manager’s Dynamic Optimization Feature?

When the VMM agent is installed on a Windows Server 2016 host running Hyper-V, it disables Node Fairness and replaces it with Dynamic Optimization. Unlike Node Fairness, Dynamic Optimization can be run manually and on a variable schedule. VMM also allows you to exclude any virtual machines that you don’t want Dynamic Optimization to move.

In addition to memory and CPU metrics, Dynamic Optimization considers storage and network I/O loads. Going in roughly the opposite direction from Node Fairness, it can also be configured to temporarily power down nodes during low-load periods to reduce overall power consumption. Overall, Dynamic Optimization is a much more powerful feature than Node Fairness, but it comes with the high price tag of VMM.

Are There Any Drawbacks to Node Fairness?

Most organizations will have no reason not to use Node Fairness (or Dynamic Optimization if they have VMM). That’s not to say that it’s a perfect solution. Besides its shortcomings compared to VMM (mentioned above), there are a couple of other things to consider.

If you have any virtual machines that you’d prefer not to have automatically balanced, your only choices are to disable Node Fairness, use Possible Owners to restrict them to a particular node, or pay for VMM. For most, the deciding factor will be guests running applications that are not supported with Live Migration; Microsoft’s Skype for Business Server falls into this category. If you wonder why virtual machines running such applications would be clustered, or even virtualized, at all: recovering from a physical host failure is then simply a matter of changing the Possible Owners setting.

Another drawback of Node Fairness is that it operates by periodic sampling, not as a reactive process. If a node’s usage spikes all the way up to 100% and stays there for a 20-minute period that doesn’t intersect one of the 30-minute sampling events, no re-balancing will be done. Even in the worst case, this isn’t a reason not to use Node Fairness; it just highlights that Node Fairness may not catch every opportunity to keep your cluster balanced, and there could easily be times when it allows an imbalance to continue long enough to upset users. The primary mitigating factor is that memory is the resource you’ll most likely have problems with, and memory does not typically have short-term usage spikes like that. If you commonly experience high CPU loads that Node Fairness misses, I’d recommend some manual balance tuning with the Preferred Owners settings, or adding nodes.
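The sampling gap is easy to see with a toy timeline. This sketch assumes, per the description above, that a check happens every 30 minutes and that the CPU figure it uses is the average of the five minutes leading up to the check; the 30% baseline and the 20-minute spike at minutes 32 through 51 are made-up values.

```powershell
# Toy model of periodic sampling: one CPU reading per minute, minutes 0-90.
# Assumptions (illustrative only): 30% baseline, a 100% spike from minute 32
# through minute 51 (20 minutes), a check every 30 minutes, and a
# five-minute CPU average at each check.
$spikeStart = 32
$spikeEnd   = 51
$cpu = 0..90 | ForEach-Object {
    if ($_ -ge $spikeStart -and $_ -le $spikeEnd) { 100 } else { 30 }
}

$threshold = 80  # default (Low) aggressiveness

$checks = foreach ($t in 30, 60, 90) {
    # Average of the five one-minute readings ending at the check time
    $avg = ($cpu[($t - 4)..$t] | Measure-Object -Average).Average
    [pscustomobject]@{
        Minute     = $t
        CpuAvg5Min = $avg
        Rebalance  = ($avg -ge $threshold)
    }
}

$checks | Format-Table
```

Every check sees a 30% average, so nothing is re-balanced even though the node sat at 100% for 20 minutes between checks.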

How Do I Configure Node Fairness?

Node Fairness is on by default for all Windows Server 2016 clusters, set to its least aggressive setting (minimum of 80% load on a node for a rebalance event), and will sample for load values at 30-minute intervals. If you’d like to modify the settings, it’s simple to do so.

Enabling, Disabling, or Changing Hyper-V Node Fairness’s Operating Mode with PowerShell

To change Node Fairness’s operating mode in PowerShell, set the cluster’s AutoBalancerMode to 0, 1, or 2, which have the following meanings:

- 0: Node Fairness is disabled

- 1: Node Fairness only runs when a node joins the cluster (newly joined or rejoining)

- 2: Node Fairness runs when a node joins the cluster and every 30 minutes (the default)
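The three modes amount to a small decision table. `Test-NodeFairnessTrigger` below is a hypothetical helper, not a cluster cmdlet; it only encodes which events start a re-balancing pass for each AutoBalancerMode value, treating the default mode 2 as covering both node joins and the 30-minute timer, as described in the section on triggers.

```powershell
# Hypothetical helper: which events start a Node Fairness pass for each mode.
# Not a FailoverClusters cmdlet; it only encodes the documented behavior.
function Test-NodeFairnessTrigger {
    [CmdletBinding()]
    param(
        [Parameter(Mandatory)][ValidateRange(0, 2)][int]$AutoBalancerMode,
        [Parameter(Mandatory)][ValidateSet('NodeJoin', 'Timer')][string]$TriggerEvent
    )
    switch ($AutoBalancerMode) {
        0 { $false }                          # disabled: nothing triggers balancing
        1 { $TriggerEvent -eq 'NodeJoin' }    # only new or rejoining nodes
        2 { $true }                           # node join and the 30-minute timer
    }
}

# Print the full table: mode / event: triggers?
foreach ($mode in 0, 1, 2) {
    foreach ($triggerEvent in 'NodeJoin', 'Timer') {
        '{0} / {1}: {2}' -f $mode, $triggerEvent,
            (Test-NodeFairnessTrigger -AutoBalancerMode $mode -TriggerEvent $triggerEvent)
    }
}
```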

Once you have chosen a value, set it like this:

```powershell
(Get-Cluster -Name clhv01).AutoBalancerMode = 0
```

If you’re running the cmdlet from a node that is a member of the cluster that you wish to modify, you can leave off the entire Name parameter.

Another way that allows for tab completion:

```powershell
$Cluster = Get-Cluster clhv01
$Cluster.AutoBalancerMode = 0
```

Setting Hyper-V Node Fairness’s Balancing Mode with PowerShell

Changing Node Fairness’s balancing mode in PowerShell is very similar to changing its operating mode: set the cluster’s AutoBalancerLevel to 1, 2, or 3. The three choices, repeated here for convenience, are:

- 1 (Low): The CPU load over the past five minutes or the current memory load must reach at least 80% of the node’s capacity

- 2 (Medium): The CPU load over the past five minutes or the current memory load must reach at least 70% of the node’s capacity

- 3 (High): The CPU load over the past five minutes or the current memory load must reach at least 60% of the node’s capacity

Once you have chosen a value, set it like this:

```powershell
(Get-Cluster -Name clhv01).AutoBalancerLevel = 1
```

If you’re running the cmdlet from a node that is a member of the cluster that you wish to modify, you can leave off the entire Name parameter.

Another way that allows for tab completion:

```powershell
$Cluster = Get-Cluster clhv01
$Cluster.AutoBalancerLevel = 1
```
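Because both properties accept only a few values, you may want to validate input before touching a production cluster. The sketch below is a hypothetical wrapper (the name `Set-NodeFairness` is illustrative; it is not a FailoverClusters cmdlet) that takes the object returned by `Get-Cluster`, changes only the settings you pass, and supports `-WhatIf`. It relies on the same property assignments as the one-liners above.

```powershell
# Hypothetical wrapper (not a FailoverClusters cmdlet) around the property
# assignments shown above. Pass it the object returned by Get-Cluster.
function Set-NodeFairness {
    [CmdletBinding(SupportsShouldProcess)]
    param(
        [Parameter(Mandatory)][object]$Cluster,

        # AutoBalancerMode: 0 = disabled, 1 = on node join only,
        # 2 = on node join and every 30 minutes
        [ValidateRange(0, 2)][int]$Mode,

        # AutoBalancerLevel: 1 = Low (80%), 2 = Medium (70%), 3 = High (60%)
        [ValidateRange(1, 3)][int]$Level
    )

    # Only touch the settings that were actually passed in
    if ($PSBoundParameters.ContainsKey('Mode') -and
        $PSCmdlet.ShouldProcess($Cluster.Name, "Set AutoBalancerMode to $Mode")) {
        $Cluster.AutoBalancerMode = $Mode
    }
    if ($PSBoundParameters.ContainsKey('Level') -and
        $PSCmdlet.ShouldProcess($Cluster.Name, "Set AutoBalancerLevel to $Level")) {
        $Cluster.AutoBalancerLevel = $Level
    }

    # Report the values as they stand now so the result can be checked
    [pscustomobject]@{
        Cluster           = $Cluster.Name
        AutoBalancerMode  = $Cluster.AutoBalancerMode
        AutoBalancerLevel = $Cluster.AutoBalancerLevel
    }
}
```

For example, `Set-NodeFairness -Cluster (Get-Cluster -Name clhv01) -Level 2 -WhatIf` would report the change to Medium without applying it; drop `-WhatIf` to apply it. Out-of-range values such as `-Level 4` are rejected by parameter validation before anything is changed.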

How to set up Hyper-V Node Fairness in Failover Cluster Manager

To use Failover Cluster Manager to set Hyper-V Node Fairness:

- In Failover Cluster Manager (Windows 10/Windows Server 2016 or later only), right-click on the cluster object in the left pane and click Properties.

Cluster Properties Menu

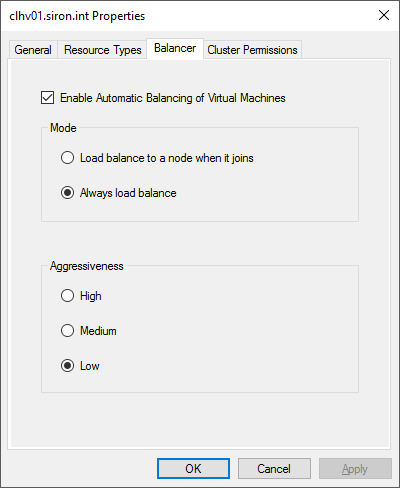

- In the properties window, switch to the Balancer tab.

Node Fairness Options

- Modify the options as desired and apply your changes.

Because Node Fairness is a feature of Failover Clustering, Hyper-V Manager cannot be used to view or set Node Fairness.


38 thoughts on "Node Fairness in Hyper-V 2016: How it works and how to set it up"

Great article!

Microsoft is methodically catching up with VMware in the virtualization arena.


Thanks for the detailed article Eric. I love reading them

Not working in powershell. Windows 10 pro.

```
> $Cluster = Get-Cluster clhv01
Get-Cluster : The term ‘Get-Cluster’ is not recognized as the name of a cmdlet, function, script file, or operable
program. Check the spelling of the name, or if a path was included, verify that the path is correct and try again.
At line:1 char:12
$Cluster = Get-Cluster clhv01
           ~~~~~~~~~~~
CategoryInfo : ObjectNotFound: (Get-Cluster:String) [], CommandNotFoundException
FullyQualifiedErrorId : CommandNotFoundException
```

The FailoverClusters module is not enabled in Windows 10 by default. To add it, run:

```
dism /Online /Add-Capability /CapabilityName:Rsat.FailoverCluster.Management.Tools~~~~0.0.1.0
```

Then start a new PowerShell session.